TCP Keepalives failing over NAT

-

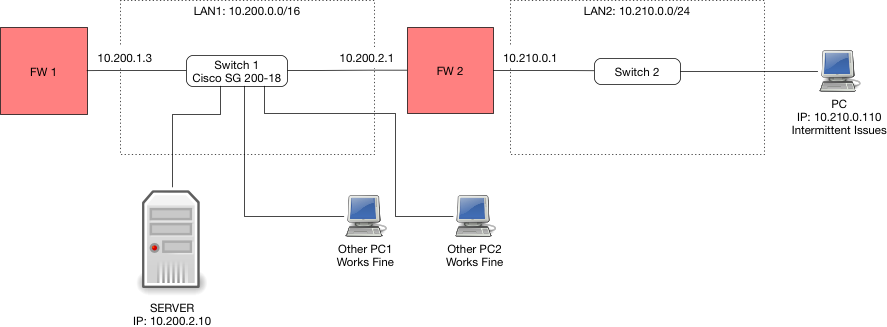

" FW1' LAN1 IP 10.200.1.3 is the default GW for all LAN1 devices."

You run into an asymmetrical mess without your nat sure..

"4. Clearly SERVER would not know how to reach 10.210.0.0/24 now, so I added a (non default) GW on FW1 pointing to 10.200.2.1, and added a static route that directs traffic destined for 10.210.0.0/24 to that GW 10.200.2.1."

Asymmetrical mess!!!

Your downstream network should be connected to fw1 via a transit network, no devices other than routers/ firewall should sit on a transit. If hosts sit on a transit then you need to use host routing to tell the how where to go so you do not run into asymmetrical issues. Yes one way to work around is via nat - but this is not a good or suggest solution but a lazy work around that has its own issues!! Downstream routers should always be connected to upstream routers via a transit network. Now you do not have to nat to work around the asymmetrical issues.. And easier to route and control, etc. etc. etc..

Clean your sniff to remove the sql info.. Just leave the keepalive stuff.

But I would suggest you sniff on the sql server to validate it actually gets the keep alives that you can clearly see pfsense putting on the wire!! So while you can try and blame pfsense - its only function is to put the packets on the wire.. It has nothing to do with if the device answers or how fast it answers, etc.

Even the acks that seem to be working early on in the the trace shows that your getting retrans.. You can see from the seq and ack numbers.. And the

[Reassembly error, protocol TCP: New fragment overlaps old data (retransmission?)]But again - pfsense only job is to move the traffic.. The device actually getting it or responding to it fast enough to not have it generate a retrans has noting to do with pfsense..

If your timestamps are to believe the packets are are moving across pfsense that is natting them in 0.000004 seconds.. So the delay is not the issue..

It would be far easier to look into the sniffs if they were actual pcaps.. Do you need help on filtering out the non keepalive traffic?

-

Thanks John - this is already super helpful. Let me try and set up a dump on SRV1. I am sure I can figure out how to run tcpdump to filter on TCP keep alive. If not I'll ask. Will get post it once the error happens again.

-

Ok it happened again, 17 Aug at approx 10:07:35 CDT, again at 10:08:16 CDT and again at 10:10:46 CDT (Central US Time). It is around the three RSTs in the trace file.

I captured a trace using netsh on the windows SERVER box (on LAN1), a trace on FW2's LAN1 interface and FW2's LAN2 interface. Since netsh cannot filter on anything more than protocol and IP, I could not filter only on keepalives. So I used wireshark with this filter:

(tcp.analysis.keep_alive_ack or tcp.analysis.keep_alive or tcp.flags.reset==1) and tcp.port==1433 and frame.number > 33000

I exported the displayed results as pcap. Clearly the frames will now have some gaps so the data you see is not exactly how it appears to me. I also included a txt export for each that resembles what I see better as this is based on the displayed results. I also included a summary txt export as before. Hope together they help.

Take note that IPs are different. SERVER is on 10.200.2.6, FW2 LAN1 is 10.200.2.16, FW2 LAN2 is 10.210.0.1, CLIENT PC is 10.210.0.100.

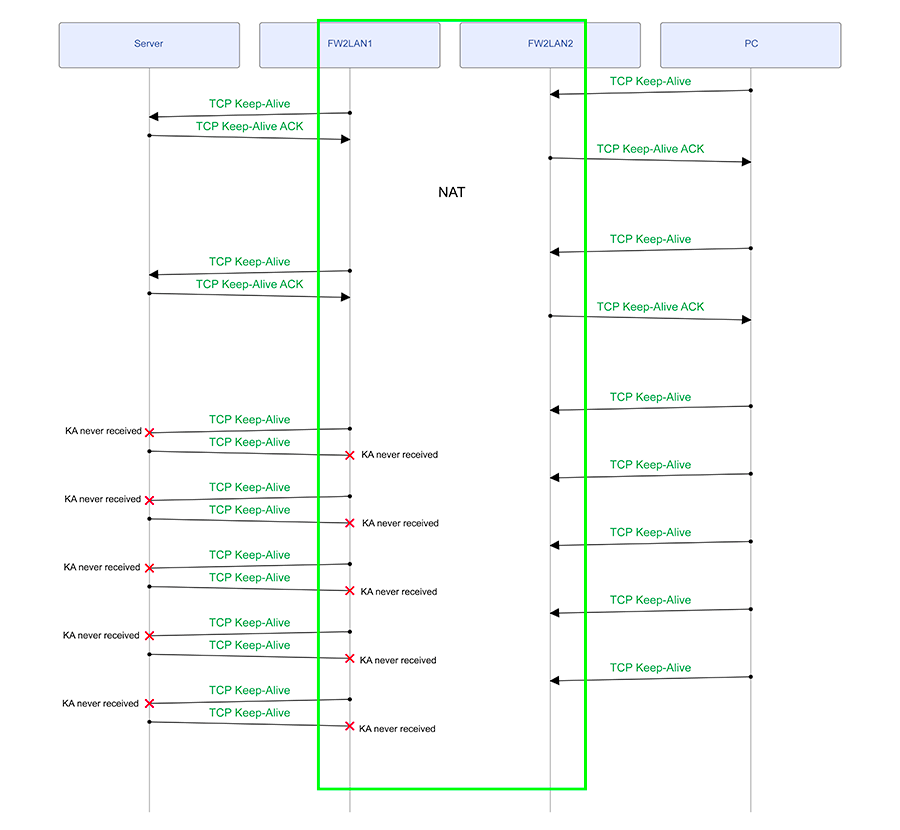

I see TCP keep-alives working fine, then suddenly the FW2 keeps on receiving keep alive requests from CLIENT PC, which it NATs to SERVER, however SERVER does not seem to be receiving them, it is however transmitting them to FW2 as well - not ACKING the keep-alives as it is not receiving them. What is your assessment? If you need the full files I can possibly send them to you privately.

-

"however SERVER does not seem to be receiving them"

I have not had chance to look at your sniffs yet… But from this statement sounds like fw2 is sending the traffic on - but not being seen on the server. If this case then you have issues in your network.. Since pfsense did what it suppose to and sent the traffic on.. Some real work issues working on now, but will be happy to take a look at the sniff when I get a chance..

-

But from this statement sounds like fw2 is sending the traffic on - but not being seen on the server. If this case then you have issues in your network.. Since pfsense did what it suppose to and sent the traffic on..

The other client machines are connected to the same Cisco SG200-18 switch as the server - so the path is really short (and the other clients work fine). FW2's LAN1 is connected to same switch. It has the latest firmware. (I updated the image to reflect this).

-

I have analyzed the packet traces and this is what I see. Take note that the packet captures were done on all lifelines shown here EXCEPT for the PC, but that is not important as the PC never has issues not receiving packets / sending packets. The issue seems to be on the LAN segment between SERVER and FW2LAN1.

-

"The issue seems to be on the LAN segment between SERVER and FW2LAN1."

Would make sense.. Can you check the layer 1 between, maybe move ports on your switch.. What specific switch you running and firmware? We had a really odd bug in cisco switch one time where all would work great and then all of sudden switch would not pass on packets that were inside specific vlan. You would see it enter the switch, but not leave..

Oddest freaking thing - took a bit to track it down. and work around until we updated the firmware was just add an svi on that vlan - even if not being used, since it was just a layer 2 vlan.

If you can sniff right on your switch on this specific vlan might give you some insight you should see the packets twice, once when they enter the switch and then again when they leave the switch.

-

Will see what I can do once my client gets back to me with the switch's details.

-

So the switch is a Cisco SG200-18, and was on firmware 1.3.7.18 (I was told it was latest, clearly it was not). I just updated it to 1.4.8.6 - so I guess I have to wait and see. Just weird that this is the only issue we have on this network segment - nothing else is broken (AFAIK).

PS: There are no VLANs configured on this switch - all nodes use the default VLAN. And as far as I can tell, even though this is a managed switch, nothing non standard has been configured.

-

So no - the firmware update fixed nothing, in fact, it seems worse now - these issues appear once every 1 - 2 hours now. I cannot do a packet capture on the switch. Not sure how to troubleshoot this further.

-

Any ideas?

-

Here is the thing your sniffing on the devices connected to the switch.. And you see 1 device send the packets, and the other device does not get the packets.. That really really points to the switch.. You don't have any patch panels between the devices right.

you have

device1 –- cable --- switch --- cable --- device2If your sniffing on 1 and 2, and you see 1 send but 2 does not get.. Its the switch or the cables - and have never seen a cable drop packets just now and then.. If they are bad they are normally bad! all the time. But to rule it out you could change the cables.

Have you changed the ports the devices are connected to on the switch?

-

you have

device1 –- cable --- switch --- cable --- device2That is my understanding, yes (I am remote troubleshooting with someone's help)

Have you changed the ports the devices are connected to on the switch?

Not yet, but I have asked the guy to attach a different Cisco switch to that switch, and have the server and client attached to the "new" switch - this should rule out the switch. Waiting to hear back from him.

-

yup that would do it as well… Let us know what happens! Suggest use new cables as well when you connect to new switch.

-

So after 1 week of having just the firewall and the server on the new switch, we see no transport related errors. So it is probably a very weird error inside that switch. We will be replacing it and see if that fixes the issue.

-

Glad to hear you have found something.. So taking no cisco support on the switch so you could open a tac case with cisco? sg200 pretty cheap.. Prob just throw it out ;)

-

No such luck I am afraid. The "new" switch is a SG200-26. Would love to understand what went wrong in that switch though. Almost like a messed up ARP table?