Poor performance: Starlink/IPv6 endpoint routing IPv4

-

Hey,

New to Starlink, so trying to sort a few things out.

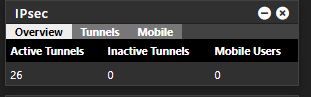

Starlink IPv4 is CGNAT'ed, so I've enabled IPv6 at both ends of my IPsec tunnel (datacentre to me). The link is up and stable - yay!

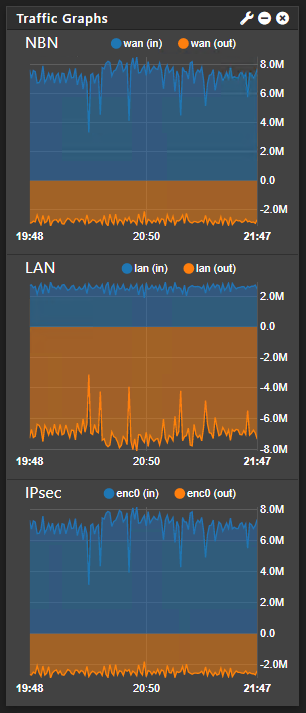

However, the performance seems to be really poor compared to a 100 Mbps Australian NBN (FTTN) VDSL service.

Ping time DC to me: NBN around 16ms, Starlink around 40ms.

Both are using hardware acceleration.

P1: AES128-GCM AES Hash DH=2

P2: AES128-GCM no hash

DC to NBN I achieve around 8MB/s

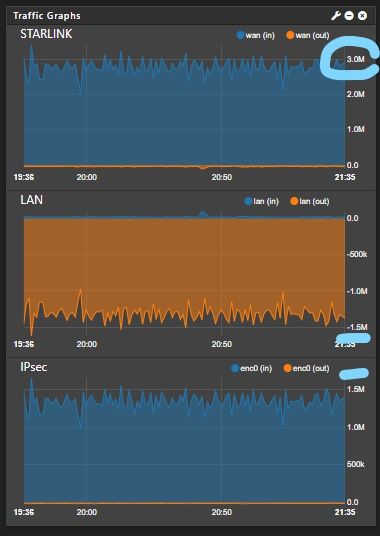

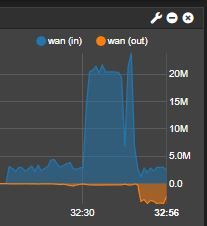

DC to Starlink I get about 1.5MB/s. Interestingly, the bandwidth is counted twice on the WAN - IPv6 > IPv4, maybe?

I have plenty of headroom, i.e. a speed test run during the transfer shows extra download capacity.

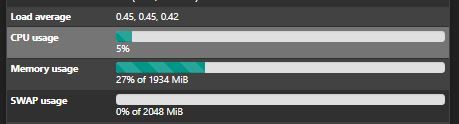

Nothing crazy with states/CPU/RAM.

I have other IPsec tunnels behaving as you would expect.

Pretty much out of ideas. Is the bandwidth doubling a bug? Any ideas to improve site-to-site performance?

Asynchronous Cryptography is enabled at both ends.

-

MSS clamping is set to 1350 at each end, and ping -f -l confirms that 1350-byte packets are flowing unfragmented.
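For reference, a rough sketch of the packet sizes behind that check. It assumes the Windows form of `ping` (where `-f` sets Don't Fragment and `-l` sets the ICMP payload size) and headers without options; the function names are made up for illustration.

```python
# Header sizes in bytes, assuming no IP or TCP options are present.
IPV4_HEADER = 20
IPV6_HEADER = 40
TCP_HEADER = 20
ICMP_HEADER = 8


def ping_packet_size(payload: int) -> int:
    """Size of the IPv4 packet produced by a ping with this ICMP payload."""
    return payload + ICMP_HEADER + IPV4_HEADER


def tcp_packet_size(mss: int, ip_header: int = IPV4_HEADER) -> int:
    """Size of a full TCP segment's IP packet for a given MSS."""
    return mss + TCP_HEADER + ip_header


if __name__ == "__main__":
    print("ping -f -l 1350 ->", ping_packet_size(1350), "byte IPv4 packet")
    print("MSS 1350 over IPv4 ->", tcp_packet_size(1350), "byte packet")
    print("MSS 1350 over IPv6 ->", tcp_packet_size(1350, IPV6_HEADER), "byte packet")
```

Under these assumptions a full-size TCP segment at MSS 1350 is slightly larger than the 1350-byte ping, so it may be worth repeating the ping with a somewhat larger payload to confirm there is still room.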

-

Latency is so important if you want to share files.

-

@nocling Don't forget window size as well. Some simple napkin math:

16ms RTT with a 128k window size gets you about 65.54 Mbps, or about 8.19 MB/s

40ms RTT with a 128k window size gets you about 26.21 Mbps, or about 3.28 MB/s

Pretty slick how the math pretty much lines up with exactly what you're seeing. Bump the window size up.
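If anyone wants to redo the napkin math for their own link, here is a minimal sketch. It assumes a single TCP stream limited only by window size (one window per round trip, no loss), 128k meaning 128 x 1024 bytes, and 1 MB = 10^6 bytes; the function names are just for illustration.

```python
def max_tcp_throughput_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound for one TCP stream: one full window delivered per RTT."""
    bits_per_window = window_bytes * 8
    rtt_seconds = rtt_ms / 1000
    return bits_per_window / rtt_seconds / 1_000_000


def window_needed_bytes(target_mbps: float, rtt_ms: float) -> float:
    """Window (bandwidth-delay product) needed to sustain target_mbps."""
    bytes_per_second = target_mbps * 1_000_000 / 8
    return bytes_per_second * (rtt_ms / 1000)


if __name__ == "__main__":
    window = 128 * 1024  # 128k window, in bytes
    for rtt in (16, 40):
        mbps = max_tcp_throughput_mbps(window, rtt)
        print(f"{rtt} ms RTT: {mbps:.2f} Mbps ({mbps / 8:.2f} MB/s)")

    # Window required to match the 16 ms result at 40 ms RTT
    target = max_tcp_throughput_mbps(window, 16)
    print(f"Window for {target:.2f} Mbps at 40 ms: "
          f"{window_needed_bytes(target, 40) / 1024:.0f} KiB")
```

The second function is the same formula turned around: to keep throughput constant, the window has to grow in proportion to the RTT.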

-

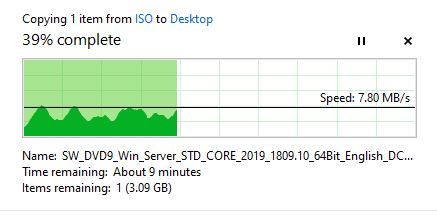

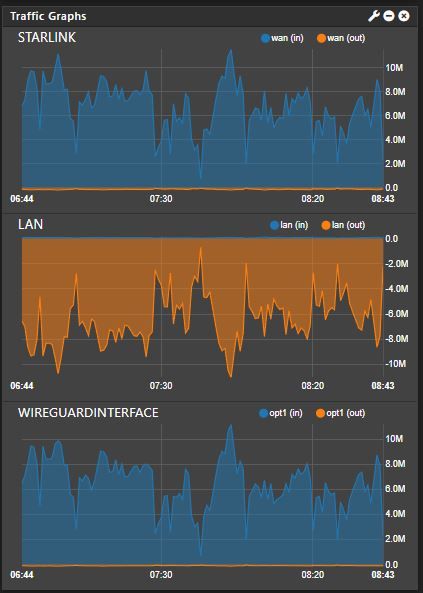

In the end I switched over to WireGuard - smashing it at around 6-8 MB/s. Tried everything with IPsec but gave up. I think I might have to investigate WireGuard further and switch the other VPNs over too. WireGuard seems to be really forgiving of the Starlink latency/dropped packets.

Here is a file copy from a remote server to local along with 20x robocopy in the background doing file compares (no actual transfers)