iperf3: Slower transfer speeds between VLANs vs same VLAN

-

Hello all,

I use pfSense as my firewall/router and have a couple of VLANs set up (1 = Servers, 100 = LAN, 200 = WLAN, 201 = Guest WLAN). I also run Open vSwitch for my VMs (these are all running on my CentOS 7 box). Recently I noticed quite a speed difference when transferring files between VMs on different VLANs vs the same VLAN:

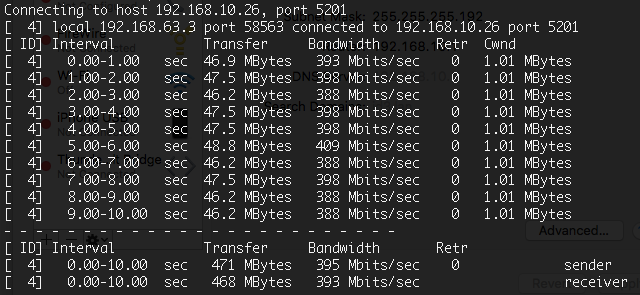

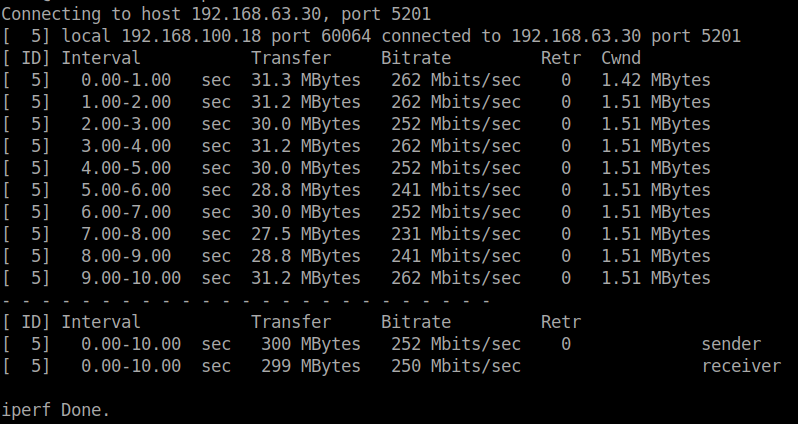

Different VLAN

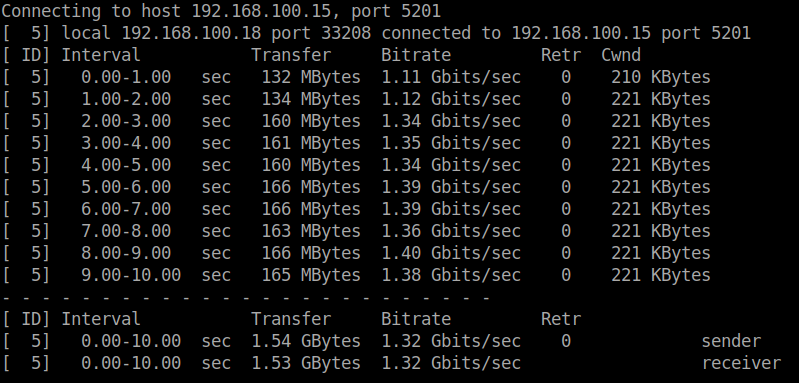

Same VLAN

Now I'm thinking it has to do with pfSense, however I could see it be an issue with Open vSwitch. So I was wondering if anyone else has run into this issue before and if so, how was it resolved. Any insight would be helpful. Thanks!

-

Well, yes. If the 2 computers are on the same VLAN, then they can communicate at the full LAN speed. If on different VLANs, then pfSense has to route between the VLANs. So, you've got the same data transmitted twice and since you're using VLANs, that twice is on the same wire. Passing through pfSense may also slow things a bit. Further, using VLANs will add an extra 4 bytes of overhead per frame. It all adds up.

-

@JKnott thanks for clearing that up. For whatever reason I did not know that. Guess it was left out during my Network+/Cisco training lol. I guess I'll just leave things the way they are.

-

If you want your inter-VLAN traffic routed at wire speed, you're looking at a re-design... e.g., moving your VLANs off pfSense to an L3 switch.

-

@marvosa I do have an L3 switch. However, with my setup it would not be worth configuring. I'm looking at possibly removing all VLANs and setting up each subnet with its own interface since I have a 4-port NIC (1 for WAN, 1 for MGMT, 1 for LAN, 1 for WLAN). Thanks for your input.

-

@simon_lefisch Well, even with 4 physical NICs... what are you going to plug them into... 4 separate switches? I doubt it. You're going to be creating VLANs regardless. The only question is... will they be layer 2 only or have SVIs providing routing for your internal network.

If you want to lean towards performance and do not require firewalling between VLANs... then enable routing on your L3 switch and move the VLANs from pfSense to your L3 switch... then all inter-VLAN traffic will be handled by the switch at wire speed... and the only thing hitting your firewall will be traffic destined for the internet.

It all depends on your priorities and where you're planning on putting your NAS, servers, VMs, etc. Using 4 physical NICs will definitely yield better performance than your current state, but may not get you where you want to be since inter-VLAN traffic still has to traverse the firewall.

-

That doesn't explain the OP only getting 250 Mbit/s.

That NIC should be connecting at at least 1 gigabit FULL DUPLEX.

This means sending and receiving at 1 gigabit simultaneously. So in reality the OP should be getting around 940 Mbit/s if the hardware specs allow for it.
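For reference, the roughly 940 Mbit/s figure can be reproduced from frame sizes alone. The sketch below assumes a standard 1500-byte MTU, IPv4, and TCP with the timestamp option enabled (none of which the thread states), and the function name is made up for illustration. It also shows how little the 4-byte 802.1Q tag costs.

```python
# Per-frame byte counts for standard Ethernet (assumed: 1500-byte MTU, IPv4).
PREAMBLE_SFD = 8      # 7-byte preamble + 1-byte start-of-frame delimiter
ETH_HEADER = 14       # destination MAC, source MAC, EtherType
VLAN_TAG = 4          # 802.1Q tag, present only on tagged frames
FCS = 4               # frame check sequence
INTERFRAME_GAP = 12   # minimum gap between frames, counted in byte times
MTU = 1500
IPV4_HEADER = 20
TCP_HEADER = 20
TCP_TIMESTAMPS = 12   # timestamp option: 10 bytes plus 2 bytes of padding


def tcp_goodput_mbps(link_mbps=1000.0, vlan_tagged=False, timestamps=True):
    """Best-case TCP payload rate in Mbit/s when every frame is full size."""
    payload = MTU - IPV4_HEADER - TCP_HEADER
    if timestamps:
        payload -= TCP_TIMESTAMPS
    on_wire = PREAMBLE_SFD + ETH_HEADER + MTU + FCS + INTERFRAME_GAP
    if vlan_tagged:
        on_wire += VLAN_TAG
    return link_mbps * payload / on_wire


untagged = tcp_goodput_mbps(vlan_tagged=False)
tagged = tcp_goodput_mbps(vlan_tagged=True)
print(f"untagged: {untagged:.1f} Mbit/s")  # untagged: 941.5 Mbit/s
print(f"tagged:   {tagged:.1f} Mbit/s")    # tagged:   939.0 Mbit/s
```

Under these assumptions the VLAN tag accounts for only about 2.5 Mbit/s on a gigabit link, so it cannot by itself explain a drop to a few hundred Mbit/s.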

-

If both VLANs are on the same wire, so that the traffic has to go one way to pfSense and then back again over the same wire, then the available bandwidth will be split between the 2 directions. Also, as I mentioned, just passing through pfSense will add the additional transmit time, even when the traffic does not pass through the same wire twice. Without knowing the details, it's hard to say exactly what's happening. The OP mentioned different VMs on different VLANs. Are those VMs running in the same box? If so, then he could have the situation where the traffic is travelling both ways on the same wire, in that when VM A sends a packet to VM B, it has to go to pfSense on VLAN A and back on VLAN B, resulting in the same packet travelling twice over the same wire and through the same NICs. Of course, there could be additional factors too.

-

Right; same cable, different wire.

Ethernet has separate transmit and receive lines ... as I said, full duplex.

Simplified (ignoring ACKs etc.): pfSense receives data on the receive lines, passes it to the CPU, and sends it back out through the send lines at line speed, provided the CPU isn't bottlenecked and no other issues present themselves. In reality, like you said, there is some protocol overhead, but that overhead doesn't result in much slower throughput in real-life situations. I've run dozens of iperf tests on bare-metal and virtual pfSense instances with trunked VLANs ... not once did it dip below 940 Mbit/s with 64k packet sizes.

-

@heper said in iperf3: Slower transfer speeds between VLANs vs same VLAN:

Ethernet has separate transmit and receive lines ... as I said, full duplex.

Not at gigabit. Gb Ethernet uses all 4 pairs in both directions, unlike 10 & 100 Mb, which used 1 pair in each direction.

-

Perhaps the OP can try an experiment with separate LANs vs VLANs, to see if it's a VLAN issue or routing.

While Ethernet is full duplex, there is more to it than just that. Normally, with TCP, you'd have data going one way and ACKs the other. So, with VLANs sharing the same wire, the data has to go out and back and likewise the ACKs. There's also the pfSense performance to consider, etc. When on the same VLAN, pfSense is not at all involved in passing the traffic. I'm also assuming there's a switch in there somewhere. It's fine to speculate, but without actual tests, we don't know for sure. So, run some tests to see where the bottleneck is.

-

@JKnott said in iperf3: Slower transfer speeds between VLANs vs same VLAN:

The OP mentioned different VMs on different VLANs. Are those VMs running in the same box?

Yes, I do have VMs that all run on the same box and are on different VLANs (i.e., my fileserver and plexserver are on VLAN 1 while the other two VMs are on VLAN 100), and yes, I do have a managed switch (Netgear GS728TPv2).

I will eventually set up different LANs to test and see.

-

I just tried a simple experiment. I have a couple of VirtualBox VMs on a computer. I watched the Ethernet port with Wireshark and pinged one VM from the other. I did not see any packet captures of this, which shows that when connecting between VMs on the same box, the packets aren't even hitting the interface. This would result in much better performance than if the packets actually went over the wire and through the switch, let alone through pfSense. So, your comparing VM to VM in the same box is simply not a valid comparison with actually going out over the network.

-

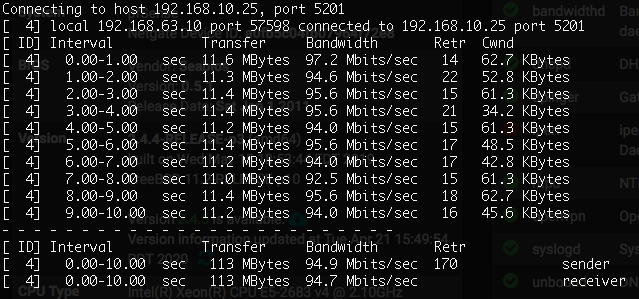

@JKnott so I just tested iperf from my VM host (192.168.63.2) to a laptop on VLAN 10 (192.168.10.25)

The 192.168.63.10 address is OVS. Is there a way for iperf to listen/send on a specific interface (an interface that is not bridged)?

EDIT: Nvm, saw that there is a bind option. However the speeds are the same.
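
For anyone repeating this test, here is a minimal sketch of driving the iperf3 client with the bind option from a script. It assumes iperf3 is installed and that a server (`iperf3 -s`) is already running on the far end. The helper names are made up, and which machine acted as the server in the test above is a guess. Note that `-B` binds to a local IP address rather than to an interface name.

```python
import json
import subprocess


def iperf3_command(server, bind_address=None, seconds=10):
    """Build an iperf3 client command line that produces JSON output."""
    cmd = ["iperf3", "-c", server, "-t", str(seconds), "-J"]
    if bind_address:
        # -B binds the client to the given local IP address.
        cmd += ["-B", bind_address]
    return cmd


def received_mbps(report):
    """Receiver-side throughput in Mbit/s from a parsed iperf3 TCP report."""
    return report["end"]["sum_received"]["bits_per_second"] / 1e6


def run_test(server, bind_address=None, seconds=10):
    """Run the client and return throughput; needs a reachable iperf3 server."""
    result = subprocess.run(
        iperf3_command(server, bind_address, seconds),
        capture_output=True, text=True, check=True,
    )
    return received_mbps(json.loads(result.stdout))
```

With the addresses from the post, a call might look like `run_test("192.168.10.25", bind_address="192.168.63.2")`, assuming the laptop is the one running the server.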

-

It looks like there's a 100 Mb connection somewhere, not Gb.

-

@JKnott Well, I do have a device that only operates @100Mb. Would that affect everything else on the switch, though? I would think the specific port the device is connected to would run at that speed while everything else uses 1Gb.

-

Correct, only that particular port would be running at 100 Mb, but your test (above) shows you're getting just under 100 Mb, and far lower than you'd expect from a Gb port. Is the device you're testing with that 100 Mb device? If so, it will be the limiting factor, even if your computer is capable of Gb.

-

@JKnott no I was not testing with that device. However now that I think about it, I think the laptop NIC I tested with only runs 100Mb. I will have to double-check and report back. I may have to use my Mac which does run 1Gb on the NIC.
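
On a Linux machine, one way to double-check the negotiated speed is to read it from sysfs. This is only a sketch: it assumes a Linux host, the interface name `eth0` in the usage note is a placeholder, and virtual or disconnected interfaces often report no usable value.

```python
from pathlib import Path


def link_speed_mbps(interface, sysfs_root="/sys/class/net"):
    """Return the negotiated link speed in Mbit/s, or None if unavailable."""
    speed_file = Path(sysfs_root) / interface / "speed"
    try:
        speed = int(speed_file.read_text().strip())
    except (OSError, ValueError):
        # Missing interface, link down, or a driver that does not report speed.
        return None
    # Some drivers report -1 when the speed is unknown.
    return speed if speed > 0 else None
```

For example, `link_speed_mbps("eth0")` would return `100` on a NIC that negotiated Fast Ethernet and `1000` on a gigabit link.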

-

That could very well be it. Throughput will always be determined by the slowest device. Imagine you had 2 computers connected by a switch. If any of those 3 runs at 100 Mb, then that's the best you will do.
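
As a tiny illustration of the slowest-device rule, the sketch below picks the limiting element out of a path. The element names and speeds are made-up example values, not measurements from this thread.

```python
def bottleneck(link_speeds_mbps):
    """Return (name, speed) of the slowest element on the path."""
    name = min(link_speeds_mbps, key=link_speeds_mbps.get)
    return name, link_speeds_mbps[name]


# Illustrative values only: two gigabit elements and one 100 Mb NIC.
path = {"server NIC": 1000, "switch port": 1000, "laptop NIC": 100}
print(bottleneck(path))  # ('laptop NIC', 100)
```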

-

@JKnott Ok just confirmed that the laptop I used only runs @100Mb. Just tested again on my MacBook which is on VLAN 10 and the server is on VLAN 1.