Bring pfsense/suricata to its knees and eventually die?? No reboot options/recovery available?

-

Hi there.

I see a lot of netmap errors under heavy load.

When I keep the load going for hours at a time, pfSense dies with no warning, no logs, and no recovery option or automatic reboot.

So in a remote location, you're more or less fucked.

I want to run IDS/IPS inline mode, so something needs to be done.

It doesn't matter what NIC it is. Errors are still flowing like hell when line speed is reached, currently 1 Gbps.

Manually altering loader.conf doesn't affect settings in Suricata at all.

device netmap

dev.netmap.ixl_rx_miss_bufs: 0

dev.netmap.ixl_rx_miss: 0

dev.netmap.iflib_rx_miss_bufs: 0

dev.netmap.iflib_rx_miss: 0

dev.netmap.iflib_crcstrip: 1

dev.netmap.bridge_batch: 1024

dev.netmap.default_pipes: 0

dev.netmap.priv_buf_num: 4098

dev.netmap.priv_buf_size: 2048

dev.netmap.buf_curr_num: 163840

dev.netmap.buf_num: 163840

dev.netmap.buf_curr_size: 2048

dev.netmap.buf_size: 2048

dev.netmap.priv_ring_num: 4

dev.netmap.priv_ring_size: 20480

dev.netmap.ring_curr_num: 200

dev.netmap.ring_num: 200

dev.netmap.ring_curr_size: 36864

dev.netmap.ring_size: 36864

dev.netmap.priv_if_num: 2

dev.netmap.priv_if_size: 1024

dev.netmap.if_curr_num: 100

dev.netmap.if_num: 100

dev.netmap.if_curr_size: 1024

dev.netmap.if_size: 1024

dev.netmap.ptnet_vnet_hdr: 1

dev.netmap.generic_rings: 1

dev.netmap.generic_ringsize: 1024

dev.netmap.generic_mit: 100000

dev.netmap.generic_hwcsum: 0

dev.netmap.admode: 0

dev.netmap.fwd: 0

dev.netmap.txsync_retry: 2

dev.netmap.mitigate: 1

dev.netmap.no_pendintr: 1

dev.netmap.no_timestamp: 0

dev.netmap.verbose: 0

dev.netmap.ix_rx_miss_bufs: 0

dev.netmap.ix_rx_miss: 0

dev.netmap.ix_crcstrip: 0

-

hi,

The fact is that...

Suricata works without any problems if it is well configured and the NIC is also well configured (this is from long-term experience).

So after all, what do you want? That's the big question here: IDS (intrusion detection) or IPS (intrusion prevention)?

@Cool_Corona "I want to run IDS/IPS inline mode,".....

Since you're yelling about netmap, I think IPS is the goal

https://forum.netgate.com/topic/109417/suricata-inline-versus-legacy-ips-mode

I recommend to your attention:

@bmeeks - https://forum.netgate.com/topic/143812/snort-package-4-0-inline-ips-mode-introduction-and-configuration-instructions

For NICs:

https://calomel.org/freebsd_network_tuning.html

@Cool_Corona "Manually altering loader.conf doesn't affect settings in Suricata at all."

Always place the NIC tuning in the loader.conf.local file (this avoids a firmware update overwriting it)!
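One way to check whether edits to a loader file actually took effect is to compare the file against what the running kernel reports. The following is only a sketch, assuming a POSIX shell with awk; `check_tunables` is a hypothetical helper name, and it works on a saved copy of sysctl output so it makes no assumption about which knobs exist on a given system.

```shell
# check_tunables CONF LIVE
# CONF: loader.conf-style file with lines like  name="value"
# LIVE: saved sysctl output with lines like     name: value
# Prints one line per setting in CONF: OK, MISMATCH, or MISSING.
check_tunables() {
    awk '
        # First file on the command line: saved sysctl output, "name: value".
        FILENAME == ARGV[1] {
            i = index($0, ": ")
            if (i > 0) live[substr($0, 1, i - 1)] = substr($0, i + 2)
            next
        }
        # Second file: loader.conf style. Skip comments and blank lines.
        /^[ \t]*#/ { next }
        /^[ \t]*$/ { next }
        {
            i = index($0, "=")
            if (i == 0) next
            name = substr($0, 1, i - 1)
            want = substr($0, i + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", name)
            gsub(/^[ \t"]+|[ \t"]+$/, "", want)
            if (!(name in live)) {
                print "MISSING  " name
            } else if (live[name] == want) {
                print "OK       " name "=" want
            } else {
                print "MISMATCH " name " wanted=" want " live=" live[name]
            }
        }
    ' "$2" "$1"
}
```

On the firewall this could be used as `sysctl dev.netmap > /tmp/live.txt` followed by `check_tunables /boot/loader.conf.local /tmp/live.txt`. A MISMATCH after a reboot would suggest the value is not being picked up from the loader file at all.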

-

Everyone comes to the forum and yells about Suricata, but the actual problem is the netmap device itself. And that device is a kernel component of FreeBSD, not a part of Suricata.

When you use Suricata with Inline IPS Mode on pfSense, that in turn simply enables the option to activate the netmap device in the underlying Suricata binary by writing a parameter to the suricata.yaml file for the interface.

Netmap started out with what appeared to be great promise, but things have never quite reached perfection in FreeBSD. For a long time it was the scarcity of NIC drivers that would support netmap. Then the netmap API changed a few times. Most recently FreeBSD decided to create a new wrapper API for kernel network driver interactions called iflib. And support for netmap was moved over to iflib with the idea being that so long as a NIC driver developer (the NIC vendors, actually) used the iflib API, then netmap support would be automatically handled. The new iflib API is in FreeBSD-12.

The situation on FreeBSD-11.3 (which is what pfSense 2.4.5 uses) is still confused as the NIC drivers have to adequately support netmap operation. There is also the limit of a single host ring on FreeBSD 11.3 while the NIC driver can have multiple rings. So think of that as a 4" pipe dumping water into a 1" pipe as the NIC's multiple rings try to stuff data back and forth over the single ring of the host stack. The host stack is required in a firewall such as pfSense because that's the path to pf. The other way to use netmap that will use all NIC rings is to have a bridge from one physical interface to another one. This would be true inline mode and would require two physical interfaces on the firewall for "in" and "out" traffic. That's not how the current GUI code sets things up because it is not ideal for pfSense environments.

-

Whether it is IDS or IPS depends on the action taken, not on the alert itself. An alert with no action is IDS, and an alert with an action is IPS.

And I run both on the NIDS.

It's running in VMware on the igb driver (ESXi) on a dual-port Intel 82576 adapter.

No alerts under normal behaviour, only under heavy load.

And if the load keeps going, it dies with no warning.

And does Snort work multithreaded and inline??

-

@Cool_Corona said in Bring pfsense/suricata to its knees and eventually die?? No reboot options/recovery available?:

And does Snort work multithreaded and inline??

No, Snort is not multithreaded, but that makes no meaningful difference until you hit 1 Gigabit/sec sustained. But it seems you do hit that barrier, so Snort would likely perform worse (but not by a whole lot).

However, Snort only supports Inline IPS Mode on pfSense-2.5 DEVEL because it needs changes to other subsystems that are currently only present in FreeBSD-12.1.

-

@Cool_Corona said in Bring pfsense/suricata to its knees and eventually die?? No reboot options/recovery available?:

And does Snort work multithreaded and inline??

Nope, Snort is not multithreaded; Suricata is.

-

@Cool_Corona said in Bring pfsense/suricata to its knees and eventually die?? No reboot options/recovery available?:

It's running in VMware on the igb driver (ESXi) on a dual-port Intel 82576 adapter.

So are you passing through the physical NIC to the pfSense virtual machine, or is your virtual machine actually using, say, the em or e1000 driver? Or are you using the vmxnet3 driver in the virtual machine?

Running a VM adds another layer of complexity to the problems.

-

Using E1000 and the em driver and no passthrough.

-

@Cool_Corona said in Bring pfsense/suricata to its knees and eventually die?? No reboot options/recovery available?:

Using E1000 and the em driver and no passthrough.

Then several of the NIC-specific changes you were making (or at least some of the ones you listed in your first post) are useless since they apply to ix and ixl cards. You need to research the sysctl tuning parameters available for the em and e1000 drivers and try tweaking those if you have not already.

The netmap errors you are seeing in pfSense are coming from the virtual networking system and not your actual hardware NIC. However, be aware that there still could be problems with your hardware NIC and the ESXi driver, but those won't show up in the virtual machines. But netmap is NOT being used by ESXi.

All this is what I meant by my comment that virtualization adds another whole layer of potential issues to work through.
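The point about driver-specific settings can be checked mechanically, since several names in the first post's dump carry a driver prefix such as ix_ or ixl_. A small sketch, assuming a POSIX shell with grep; the function name `driver_knobs` is made up for illustration.

```shell
# driver_knobs DRIVER
# Reads sysctl output on stdin and prints only the netmap entries
# whose name starts with the given driver prefix, e.g. "ix" or "ixl".
driver_knobs() {
    grep "^dev\.netmap\.${1}_"
}
```

On the firewall, `sysctl dev.netmap | driver_knobs ix` would list the ix-only entries, while `sysctl dev.netmap | driver_knobs em` printing nothing would be consistent with there being no em-specific netmap knobs to tweak. Assuming the first virtual interface is em0, the driver's own settings would typically be listed by `sysctl dev.em.0` instead.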

-

@bmeeks said in Bring pfsense/suricata to its knees and eventually die?? No reboot options/recovery available?:

@Cool_Corona said in Bring pfsense/suricata to its knees and eventually die?? No reboot options/recovery available?:

Using E1000 and the em driver and no passthrough.

Then several of the NIC-specific changes you were making (or at least some of the ones you listed in your first post) are useless since they apply to ix and ixl cards. You need to research the sysctl tuning parameters available for the em and e1000 drivers and try tweaking those if you have not already.

The netmap errors you are seeing in pfSense are coming from the virtual networking system and not your actual hardware NIC. However, be aware that there still could be problems with your hardware NIC and the ESXi driver, but those won't show up in the virtual machines. But netmap is NOT being used by ESXi.

So you want me to configure passthrough on the host??

-

@Cool_Corona said in Bring pfsense/suricata to its knees and eventually die?? No reboot options/recovery available?:

So you want me to configure passthrough on the host??

That depends on your pain threshold. If you do, then you will need to reconfigure your pfSense setup because your interface names will change from em-something to whatever the physical NIC you pass through needs. So all of your physical interface names change in pfSense, and that includes any defined VLANs since they have the physical interface in their names.

I would first research sysctl tuning parameters for the em and e1000 NICs and see if tweaking those helps. And as @DaddyGo said, those changes go in loader.conf.local so they survive future updates.

-

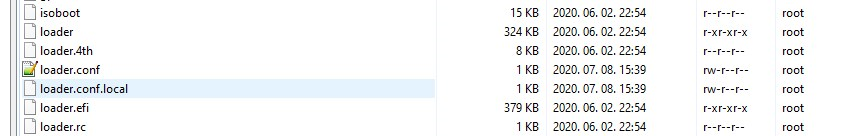

@bmeeks How to make the system aware of loader.conf.local??

AFAIK it's using loader.conf and there is no such file in the folder?? Do I need to create and point loader.conf.local somewhere?

-

Use WinSCP, PuTTY, etc. (SSH) and just create it; it's easy.

+++edit:

I highly recommend it to you:

https://docs.netgate.com/pfsense/en/latest/hardware/tuning-and-troubleshooting-network-cards.html

-

Don't be mad at me, but I can't skip this comment (I'm a friendly guy anyway).

The installation of all major IT tools, systems and networks (etc.) begins with reading the user manual (handbook).

I am writing this because the loader.conf.local topic is a basic question in FreeBSD and thus also in pfSense.

https://www.freebsd.org/doc/en_US.ISO8859-1/books/handbook/

https://docs.netgate.com/manuals/pfsense/en/latest/the-pfsense-book.pdf

-

@Cool_Corona said in Bring pfsense/suricata to its knees and eventually die?? No reboot options/recovery available?:

@bmeeks How to make the system aware of loader.conf.local??

AFAIK it's using loader.conf and there is no such file in the folder?? Do I need to create and point loader.conf.local somewhere?

I see @DaddyGo has already provided an answer, but I will add to it.

It is common practice on many Unix-type distros to use a *.local version of a configuration file. The OS will look for such a file, and if it sees it in the same place as the system version of that file (for example, in /boot/), then it will append the contents of the *.local file onto the content in the parent file (the one without ".local" on the end).

The purpose of *.local files is to allow user customizations to be added that survive operating system upgrades. During an upgrade, the regular loader.conf file will get overwritten by a new version. But a loader.conf.local file will not get overwritten. It is up to the user to create the *.local file when such a feature is needed.
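Creating the file comes down to writing name="value" lines into /boot/loader.conf.local. Here is a minimal sketch, assuming a POSIX shell on the firewall (over SSH or at the console); `set_loader_local` is a hypothetical helper, not something pfSense ships.

```shell
# set_loader_local NAME VALUE [FILE]
# Adds NAME="VALUE" to FILE (default /boot/loader.conf.local),
# creating the file if needed and replacing any earlier line for NAME.
set_loader_local() {
    name=$1
    value=$2
    file=${3:-/boot/loader.conf.local}
    touch "$file" || return 1
    tmp=$(mktemp) || return 1
    # Keep every line that does not already start with NAME=
    awk -v n="$name" 'index($0, n "=") != 1' "$file" > "$tmp"
    # Append the new setting in loader.conf syntax
    printf '%s="%s"\n' "$name" "$value" >> "$tmp"
    cat "$tmp" > "$file" && rm -f "$tmp"
}
```

A call would look like `set_loader_local kern.ipc.nmbclusters 1000000`, where the tunable and value are placeholders rather than recommended settings. Loader files are read at boot, so a reboot is needed before a change takes effect.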