ntopng eats half of my gigabit network

-

Hello!

I'm running ntopng and noticed that it eats about 500 Mbps of my gigabit connection. I have 8 cores assigned to pfSense and a dedicated network card (Intel PRO/1000). The system should be able to handle it, since total CPU usage is only around 30%.
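One caveat on the 30% figure: a total CPU percentage is an average across cores, so it can look low while a single core is pinned. Whether ntopng's packet path is actually bound to one thread on this box is an assumption the thread doesn't establish; `top -P` on pfSense shows per-CPU usage and would settle it. The arithmetic, as a small Python sketch with made-up per-core numbers:

```python
# Hypothetical per-core numbers; not measurements from this thread.

def aggregate_cpu(per_core_percent: list[float]) -> float:
    """Mean utilisation across cores, i.e. what a single 'total CPU' figure shows."""
    return sum(per_core_percent) / len(per_core_percent)


def saturated_cores(per_core_percent: list[float], threshold: float = 95.0) -> list[int]:
    """Indices of cores at or above `threshold` percent: likely bottlenecks."""
    return [i for i, p in enumerate(per_core_percent) if p >= threshold]


if __name__ == "__main__":
    # 8 cores: one pinned by a single busy thread, the rest lightly loaded.
    cores = [100.0, 40.0, 30.0, 20.0, 20.0, 15.0, 10.0, 5.0]
    print(f"total: {aggregate_cpu(cores):.1f}%")  # total: 30.0%
    print("saturated:", saturated_cores(cores))   # saturated: [0]
```

With one core at 100% and the other seven completely idle, the total would read only 12.5%.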

I've checked several posts about ntopng, and while they say it hits performance hard, it always seems to come down to CPU power, which I have plenty of.

What am I doing wrong here? Is this really a problem with my setup, or will ntopng usually have that huge a performance hit no matter how many CPUs you have?

Thanks!!

-

I installed pfSense on the same machine, but bare-metal, and it runs at full gigabit with ntopng.

I guess it's the VM that is slowing things down. I'm passing through a 4-port Intel PRO/1000 NIC, so at least the network card shouldn't be the problem.

Does anyone know what could be happening?

-

What hypervisor? Mostly you will want to disable all hardware offloading when running in a VM.

https://docs.netgate.com/pfsense/en/latest/virtualization/index.html#guides

Steve

-

@stephenw10 hey thanks for your reply!

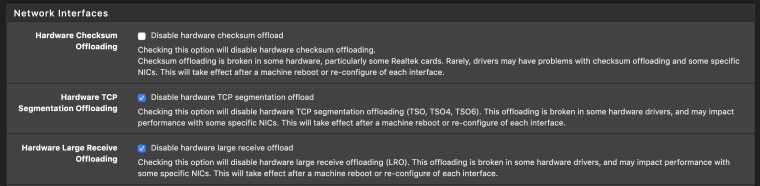

I'm using unRAID, which I believe uses KVM/QEMU, and I'm passing through the NIC. I've tried the configuration shown in the image and also tried enabling the first option (Disable hardware checksum offload), with no luck :/

-

Maybe it doesn't like promiscuous mode? Try enabling that on the interfaces at the command line, with ntopng disabled, and test again.

Steve

-

@stephenw10 ah! that's a good idea. Will find out how to do that and report back. Thanks!

-

Should be like:

`ifconfig igb1 promisc`

Steve
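To confirm the flag actually took effect, look for PROMISC in the flags field that `ifconfig igb1` prints on its first line. A minimal Python sketch that parses that header line; the sample lines are illustrative, not output captured from this system, and 0x100 is the value of IFF_PROMISC in FreeBSD's `<net/if.h>`:

```python
import re

# FreeBSD ifconfig header line, e.g.
# "igb1: flags=8943<UP,BROADCAST,RUNNING,PROMISC,SIMPLEX,MULTICAST> metric 0 mtu 1500"
HEADER_RE = re.compile(r"^(?P<ifname>[^:\s]+): flags=(?P<bits>[0-9a-fA-F]+)<(?P<names>[^>]*)>")

IFF_PROMISC = 0x100  # IFF_PROMISC in FreeBSD's <net/if.h>


def parse_header(line: str) -> tuple[str, int, set[str]]:
    """Return (interface name, numeric flags, flag names) from an ifconfig header line."""
    m = HEADER_RE.match(line.strip())
    if m is None:
        raise ValueError(f"not an ifconfig header line: {line!r}")
    names = {n for n in m.group("names").split(",") if n}
    return m.group("ifname"), int(m.group("bits"), 16), names


def is_promisc(line: str) -> bool:
    """True if the interface reports promiscuous mode, by flag name or by bit."""
    _, bits, names = parse_header(line)
    return "PROMISC" in names or bool(bits & IFF_PROMISC)


if __name__ == "__main__":
    on = "igb1: flags=8943<UP,BROADCAST,RUNNING,PROMISC,SIMPLEX,MULTICAST> metric 0 mtu 1500"
    off = "igb1: flags=8843<UP,BROADCAST,RUNNING,SIMPLEX,MULTICAST> metric 0 mtu 1500"
    print(is_promisc(on), is_promisc(off))  # True False
```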

-

@stephenw10 umm nope, I tried it, but I get full gigabit with promiscuous mode enabled :(

-

With ntopng running?

-

@stephenw10 no, I've enabled promiscuous mode on igb1 with ntopng disabled and I get full gigabit. As soon as I enable ntopng I get ~500 megabits

-

Hmm, odd. You might want to try running bandwidthd just to confirm whether you are hitting the same thing as this:

https://www.reddit.com/r/PFSENSE/comments/9suowo/why_does_enabling_bandwidthd_or_ntopng_cut_my/

Seems likely.

Some subtlety in how interfaces are managed by the hypervisor...

Can you try it without the NICs in pass-through mode?

Steve

-

@stephenw10 cool, will try that reddit thread as well!

Will also try with the passthrough mode disabled on the NICs! Thanks :)

-

@stephenw10 alright! I've been trying lots of things but no luck so far :/.

Enabling bandwidthd (with ntopng disabled) is even worse: I get around 200 Mbps with around 75% CPU usage. The reddit thread doesn't specify whether the poster is using passthrough, so I would assume he's on virtio.

Now I've tried without the NICs in passthrough mode and I'm getting slightly better performance, ~650 Mbps with 30% CPU usage (I've also enabled Disable hardware checksum offload, since these are virtual NICs).

I've also read that other KVM-based virtualization systems, like Proxmox, needed flow control disabled (tx off). I also tried with NIC offload off and with the Rx and Tx buffers increased to 2048 (all of this on unRAID). It's really weird that I'm getting better performance with virtual NICs than with passthrough NICs.

The best I can get is 650 Mbps with iperf3, with plenty of CPU power still available. I'm really stuck.

I even tried installing an OPNsense VM just to see whether it's a pfSense thing, but I can't get past the installation; I always get an error.

-

Mmm, something odd happening there at some low level.

I assume you still see full throughput in all cases without ntopng?

Steve

-

@stephenw10 yes, I also think something at a low level isn't working correctly. That's why I was trying to play with the TX/RX buffers, offload, flow control, etc.

With passthrough this shouldn't be an issue, but something is still slowing things down when ntopng is enabled.

I do always get full gigabit if ntopng is not running.

Thanks a lot for your help, really appreciate your time and effort Stephen.

-

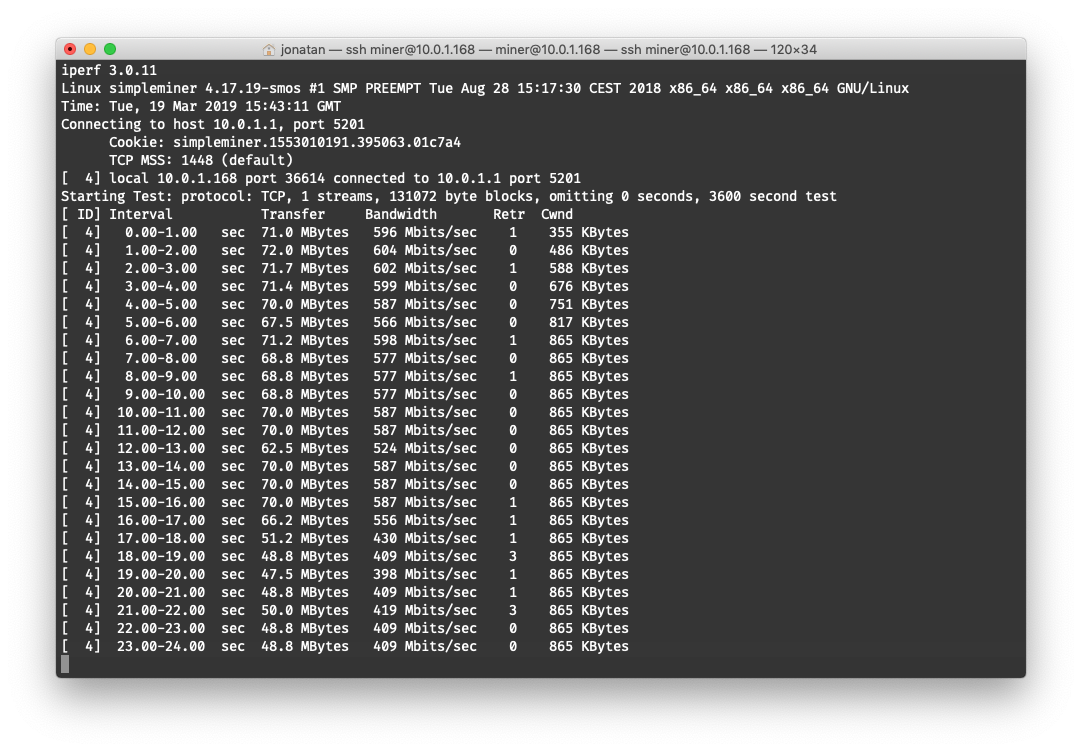

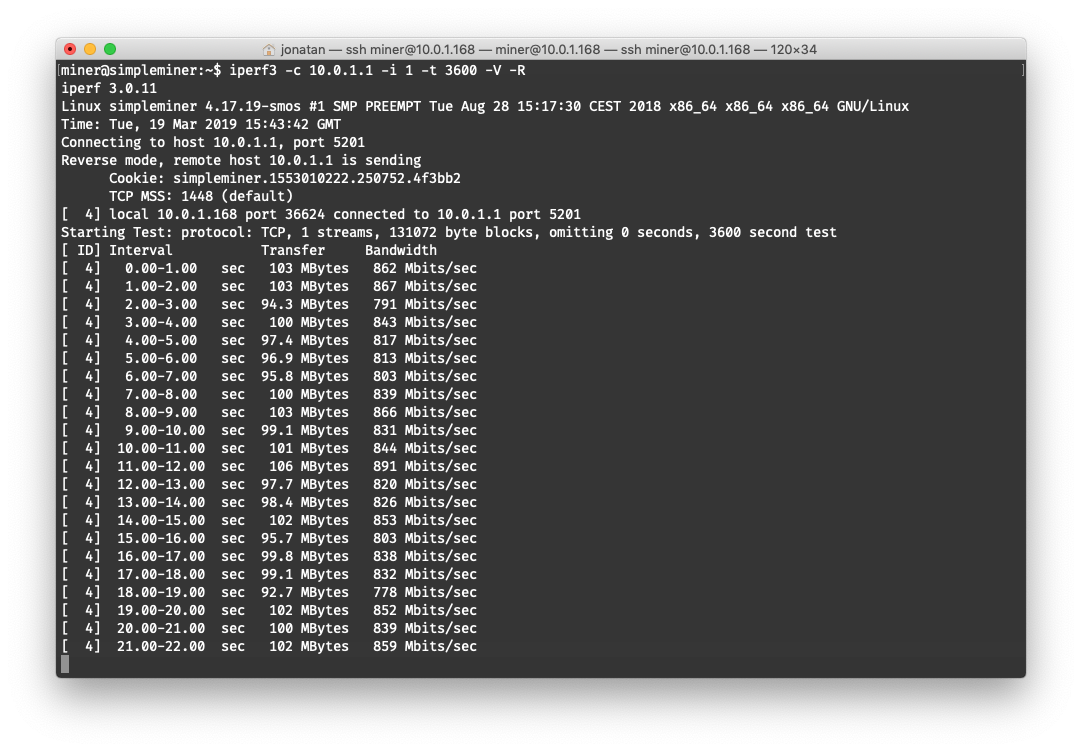

Another thing I noticed with iperf3: when I run the server in the pfSense VM I get ~500 megabits, as I said, but if I use the -R option on the client I get quite a bit more, ~800 megabits.

-

@bluepr0 said in ntopng eats half of my gigabit network:

> Another thing I noticed when using iperf3 is that when I create the server in the pfSense VM

And again, do not run iperf on pfSense if you want to test routing throughput. Put the iperf server on a device connected to WAN and run the client on a device connected to LAN, or vice versa. iperf itself needs a fair amount of resources, and those are then not available to pfSense if it runs on the same device.
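The setup described above (iperf3 server on one host, client on another, pfSense only routing) could be scripted from the client host roughly like this. It's a sketch assuming iperf3 is installed on the client and `iperf3 -s` is already running on the far host. `-J` asks iperf3 for a JSON report, and `-R` reverses the direction so the server sends, which makes it easy to test both ways through the firewall:

```python
import json
import subprocess


def iperf3_client_argv(server: str, seconds: int = 10, reverse: bool = False) -> list[str]:
    """Client command line; the far host runs `iperf3 -s` separately."""
    argv = ["iperf3", "-c", server, "-t", str(seconds), "-J"]  # -J: JSON report
    if reverse:
        argv.append("-R")  # reverse mode: server sends, client receives
    return argv


def received_mbps(report: dict) -> float:
    """Receiver-side TCP throughput in Mbit/s from an iperf3 -J report."""
    return report["end"]["sum_received"]["bits_per_second"] / 1e6


def run_client(server: str, **kwargs) -> float:
    """Run iperf3 from this host (through pfSense) and return received Mbit/s."""
    result = subprocess.run(
        iperf3_client_argv(server, **kwargs),
        capture_output=True, text=True, check=True,
    )
    return received_mbps(json.loads(result.stdout))
```

For example `run_client("192.0.2.10")` and `run_client("192.0.2.10", reverse=True)`, where the address is only a placeholder for the iperf3 server on the other side of pfSense.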

-

@Grimson even if I have plenty of resources available? Around 70% of the CPU is idle. Thanks!

-

@bluepr0 said in ntopng eats half of my gigabit network:

> @Grimson even if I have plenty of resources available? Around 70% of the CPU is idle. Thanks!

A PC is more than just the CPU. To put it very simply, there are communication lines between the CPU and RAM, between the CPU and the chipset on the mainboard, and between all of them and the add-on cards. Each of these communication lines has its limits.
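One of those limits can be estimated on paper: the PCIe link between the NIC and the chipset. A back-of-envelope sketch in Python; the PCIe generation and lane count are assumptions (the card's datasheet has the real values), and protocol overhead is ignored, so real usable bandwidth is somewhat lower:

```python
# Back-of-envelope PCIe budget for a multi-port gigabit NIC.
# Assumed values only: check the card's datasheet for its real PCIe generation
# and lane count. Packet/protocol overhead (TLP headers, DLLPs) is ignored,
# so usable bandwidth is somewhat lower than these raw figures.

GT_PER_LANE = {1: 2.5, 2: 5.0, 3: 8.0}           # transfer rate per lane, GT/s
ENCODING = {1: 8 / 10, 2: 8 / 10, 3: 128 / 130}  # line-code efficiency


def pcie_gbps_per_direction(gen: int, lanes: int) -> float:
    """Raw data rate of a PCIe link in Gbit/s, each direction."""
    return GT_PER_LANE[gen] * ENCODING[gen] * lanes


def nic_demand_gbps(ports: int, port_gbps: float = 1.0) -> float:
    """Worst case per direction: every port at line rate (links are full duplex)."""
    return ports * port_gbps


if __name__ == "__main__":
    link = pcie_gbps_per_direction(gen=1, lanes=4)  # assumption: gen 1 x4 slot
    need = nic_demand_gbps(ports=4)
    print(f"link {link:.1f} Gbit/s vs demand {need:.1f} Gbit/s per direction")
```

Under those assumptions a gen 1 x4 link offers about 8 Gbit/s per direction against about 4 Gbit/s for four gigabit ports at line rate, so the slot itself would have headroom; a gen 1 x1 link (about 2 Gbit/s) would not.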

-

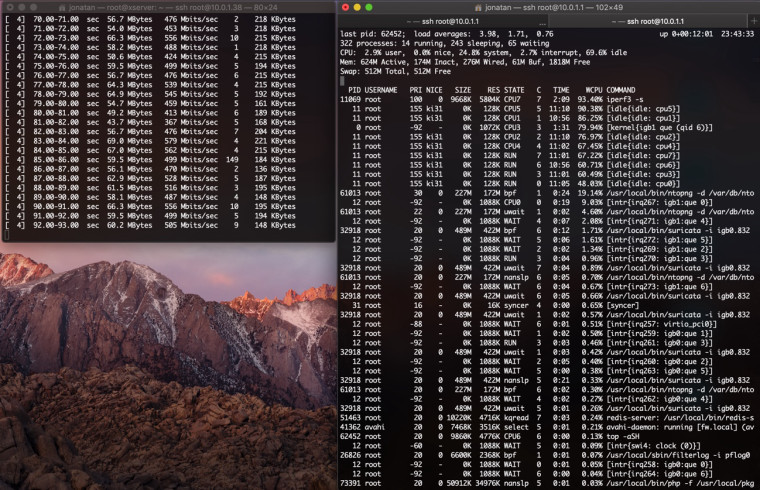

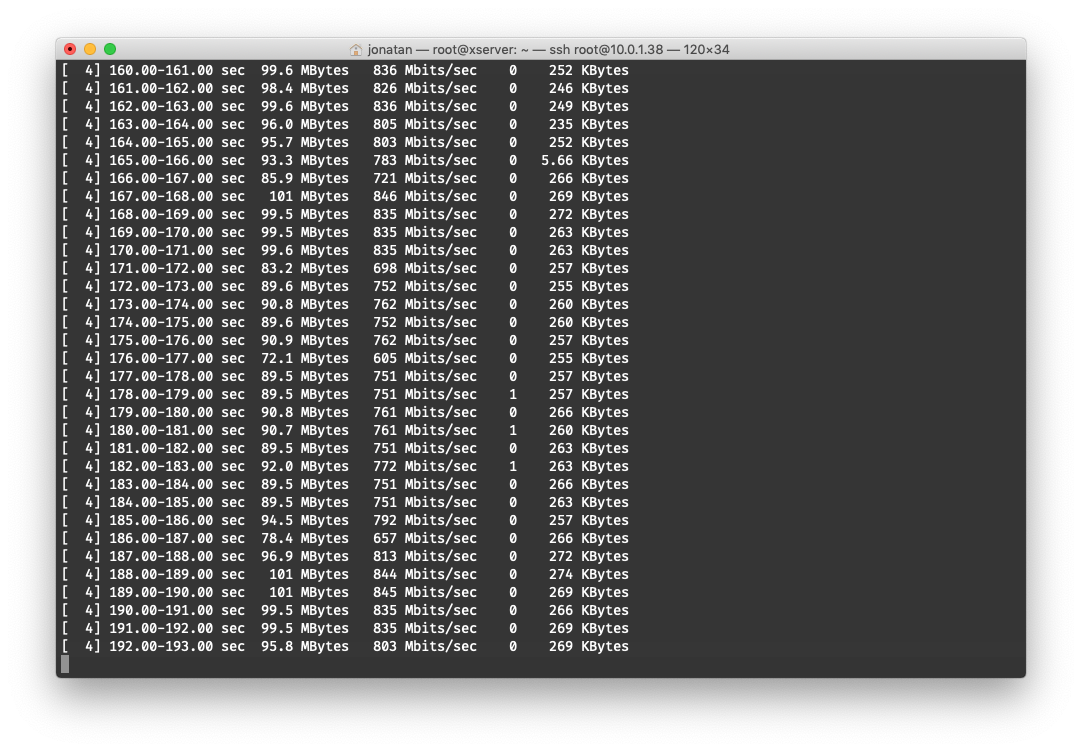

@Grimson I followed your advice and set up another subnet on the unused physical port of my 4-port NIC. pfSense is currently in a VM, with this 4-port NIC passed through.

The test was TEST ----- pfSense ----- LAN, with iperf3 running on separate hosts.

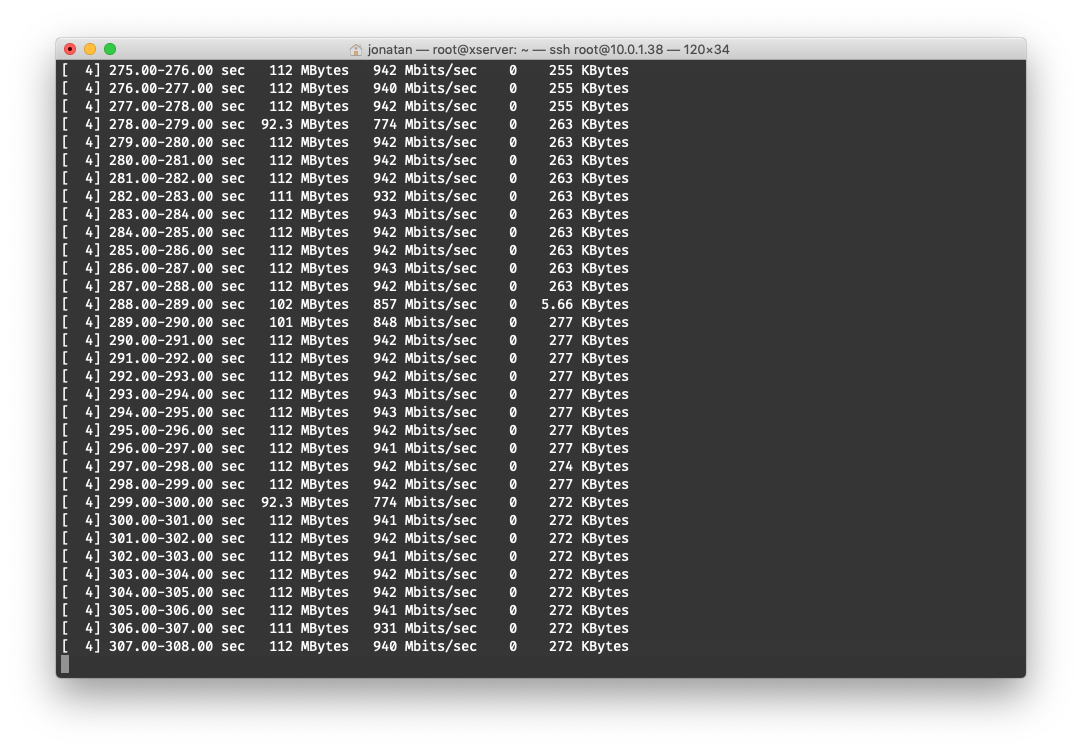

Results are a bit better, but still not full gigabit. Here's a screenshot with ntopng enabled:

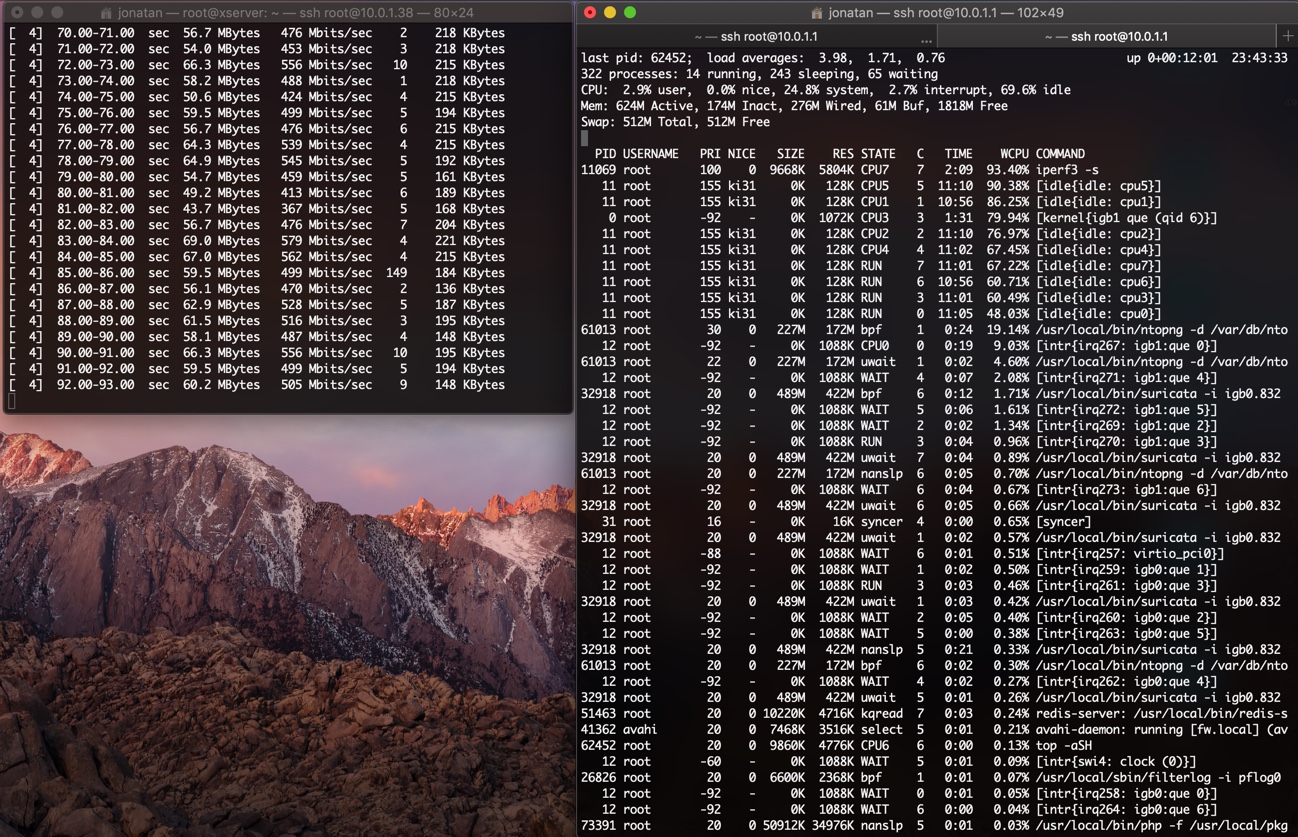

Here's the same test with ntopng disabled (it seems to drop to ~770 megabits from time to time).
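To compare the ntopng-on and ntopng-off runs with numbers rather than screenshots, iperf3 can write a JSON report with `-J`, which includes a per-interval summary. A minimal Python sketch that pulls out the mean, the minimum and the intervals that dipped below a threshold; the 800 Mbit/s threshold is an arbitrary choice:

```python
import json
import sys


def interval_mbps(report: dict) -> list[float]:
    """Per-interval throughput in Mbit/s (all streams summed) from an iperf3 -J report."""
    return [iv["sum"]["bits_per_second"] / 1e6 for iv in report["intervals"]]


def summarize(report: dict, dip_below_mbps: float = 800.0) -> dict:
    """Mean, minimum, and indices of intervals below the (arbitrary) dip threshold."""
    rates = interval_mbps(report)
    return {
        "mean": sum(rates) / len(rates),
        "min": min(rates),
        "dips": [i for i, r in enumerate(rates) if r < dip_below_mbps],
    }


if __name__ == "__main__":
    # Each argument is a JSON report saved from `iperf3 ... -J`.
    for path in sys.argv[1:]:
        with open(path) as f:
            print(path, summarize(json.load(f)))
```

For example, save `iperf3 -c <server> -J > ntopng_on.json` with ntopng running and the same into `ntopng_off.json` without it, then pass both files to the script (file names are hypothetical).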