QoS / Traffic Shaping / Limiters / FQ_CODEL on 22.05

-

An update for anyone following along:

Today I unboxed a brand new 6100, flashed 22.01-RELEASE onto it, and made only ONE configuration change from the factory default config: creating 2 limiters/queues and adding the floating rule exactly as per the official docs. I set the bandwidth to 150Mbps for testing, so I could easily see whether the limiters were working.

Guess what? It worked flawlessly.

Next, I went to System > Update and updated to 22.05.a.20220403.0600. No other changes were made.

After rebooting, I re-tested and got this (which matches my original problem throughout this thread):

I diffed the config.xml files from before and after the 22.05 upgrade to be sure no other changes were made behind the scenes (there were none). So now I am even more convinced there's either a bug in 22.05, or something has changed in the ipfw that ships with it that requires a syntax change which hasn't been accounted for.

-

Issue report here:

https://redmine.pfsense.org/issues/13026

-

Since this seems to be just an issue with how the ruleset syntax is generated, is there a way I can manually run a fixup command or hand-edit the rules to fix this problem right now on 22.05? I have a somewhat urgent need to use limiters, and since 22.05 is still at least 2 months away and I can't roll back my config anymore (too many changes, and it's not backwards-compatible with 22.01), it would be very helpful.

-

Just adding some notes from redmine...

Currently this bug (#13026: Limiters do not work) appears to be blocked by the following 2 bugs:

- #12579: Utilize dnctl(8) to apply changes without reloading filter

- #13027: Input validation prevents adding a floating match rule with limiters and no gateway

#12579 says "#12003 should be merged first", but even though its progress is at 0%, it appears a patch has been merged. #13027 also has a merge request pending. The target for #13027 is 22.09; hope we don't have to wait that long to have functioning limiters again!

@jimp, is there any movement on this (IMO) important bug? Thanks!

-

@luckman212 It's being worked on.

-

@marcos-ng Good to know. I just updated to 22.05.a.20220426.1313 and was going to test a bit, but I'll keep waiting for some news on redmine.

-

Just reporting back here to wrap this up. I've been busy with other stuff but finally got around to retesting this. All working great on 22.05.r.20220604.1403. It's so nice to have this working again! Increased WAF factor by 10x.

-

@luckman212 Just one question. Did you use the same settings as in post #1, or did you change something?

Thanks.

-

@luckman212 I'm still seeing this issue in 22.05-REL?

No matter what limiter I'm putting on the WANDown it's still going full-bandwidth and bufferbloat is suffering.

-

@keylimesoda said in QoS / Traffic Shaping / Limiters / FQ_CODEL on 22.05:

I'm still seeing this issue in 22.05-REL?

That's why @luckman212 said:

@luckman212 said in QoS / Traffic Shaping / Limiters / FQ_CODEL on 22.05:

All working great on 22.05.r.20220604.1403.

Go from stock 22.05 to 22.05.r.20220604.1403 and retest ;)

-

@gertjan Are you sure?

Stock 22.05 was 22.05-RELEASE (amd64), built on Wed Jun 22 18:56:13 UTC 2022, FreeBSD 12.3-STABLE.

20220604 is older.

And as far as Redmine says:

https://redmine.pfsense.org/issues/13026?tab=history

it has been resolved in 22.05.

-

@netblues said in QoS / Traffic Shaping / Limiters / FQ_CODEL on 22.05:

It has been resolved in 22.05

I stand corrected I guess.

-

@keylimesoda said in QoS / Traffic Shaping / Limiters / FQ_CODEL on 22.05:

@luckman212 I'm still seeing this issue in 22.05-REL?

No matter what limiter I'm putting on the WANDown it's still going full-bandwidth and bufferbloat is suffering.

Which brings us back to the original question regarding WANDown.

I just checked it on 22.05 and it DOES work.

Run a speed test and see what speed you are getting and whether it is consistent.

(e.g. WAN links from WISPs tend to fluctuate in speed)

Also keep in mind that WAN download can only be controlled indirectly: by dropping TCP packets and relying on the sender's TCP window (driven by the ACKs it gets back) to take care of the rest.
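
To illustrate the TCP-vs-UDP point, here is a toy model, not pfSense or ipfw code. It assumes a hypothetical limiter of 100 Mbit/s and discrete "ticks" instead of real round trips. A TCP-like sender uses AIMD (add a bit each tick, halve the rate on a drop), so drops at the limiter pull its offered rate back down. A UDP-like sender with no app-level feedback keeps pushing at 300 Mbit/s no matter how much the limiter drops.

```python
# Toy model (hypothetical, not pfSense code): the limiter allows LIMIT Mbit/s
# and anything offered above that counts as dropped. A TCP-like sender reacts
# to drops by halving its rate; a UDP-like sender ignores them.

LIMIT = 100.0  # assumed limiter bandwidth in Mbit/s
TICKS = 50     # number of simulated time steps


def simulate(reacts_to_loss: bool, start_rate: float = 10.0,
             step: float = 10.0, udp_rate: float = 300.0) -> list[float]:
    """Return the rate (Mbit/s) the sender offers to the limiter each tick."""
    rate = start_rate if reacts_to_loss else udp_rate
    history = []
    for _ in range(TICKS):
        history.append(rate)
        dropped = rate > LIMIT
        if reacts_to_loss:
            # AIMD: multiplicative decrease on loss, additive increase otherwise
            rate = rate / 2 if dropped else rate + step
        # A UDP sender without app-level feedback keeps sending at udp_rate
    return history


def offered_excess(history: list[float]) -> float:
    """Average amount (Mbit/s) offered above the limiter rate."""
    return sum(max(0.0, r - LIMIT) for r in history) / len(history)


tcp = simulate(reacts_to_loss=True)
udp = simulate(reacts_to_loss=False)
print(f"TCP-like: peak {max(tcp):.0f} Mbit/s, avg excess {offered_excess(tcp):.1f}")
print(f"UDP-like: peak {max(udp):.0f} Mbit/s, avg excess {offered_excess(udp):.1f}")
```

The TCP-like sender only briefly overshoots the limit before backing off, while the UDP-like sender exceeds it by 200 Mbit/s on every tick. That excess still has to cross the WAN link before the limiter can drop it, which is why the download can still get flooded.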

If your incoming traffic is, e.g., UDP, and the application using it has no mechanism to request less data, there is no way to stop it from flooding your download.

-

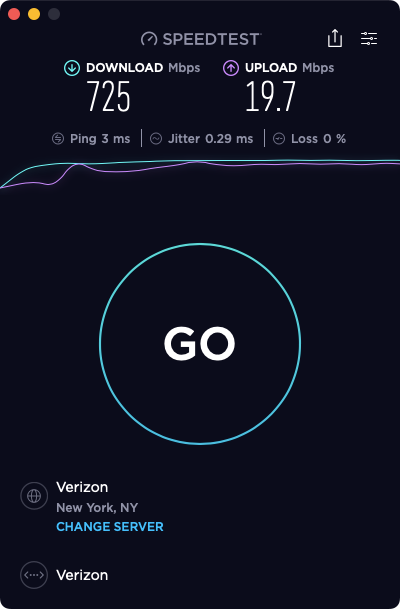

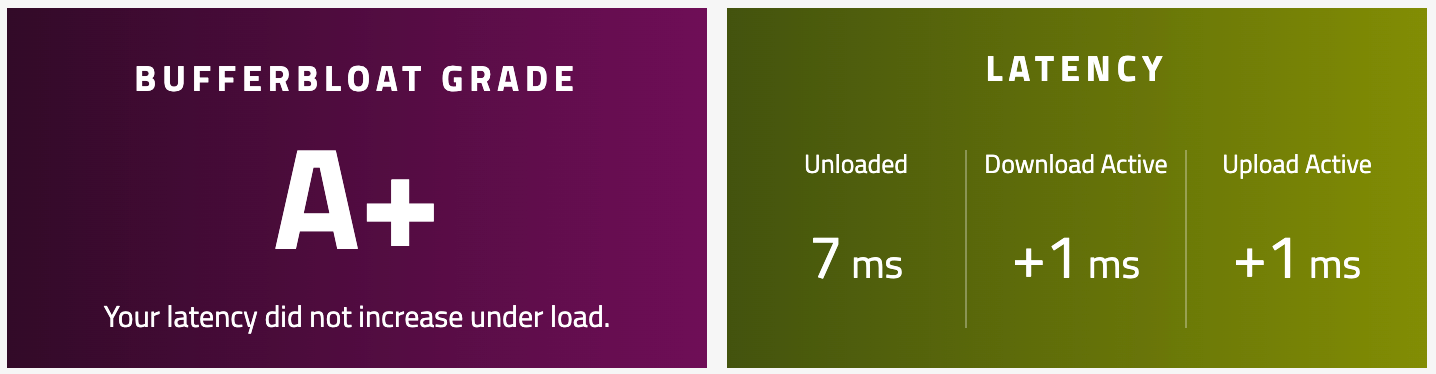

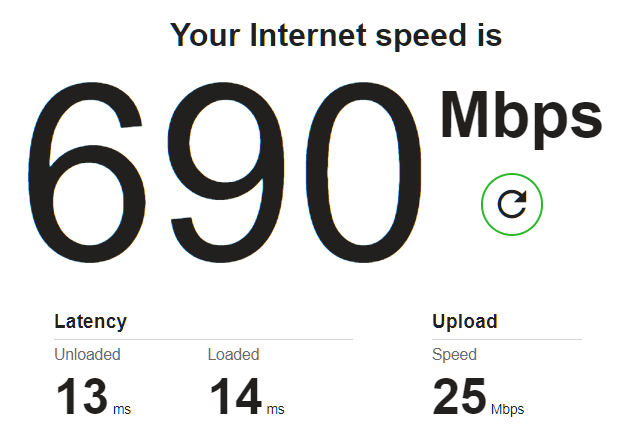

@netblues I'm getting different behavior on different speedtests which is odd. Could be the UDP issue?

On Ookla and FAST, the WAN down shows as scaling correctly. And in fact on FAST it shows almost no bufferbloat.

On Waveform test, it seems to ignore the download limiter, and shows all kinds of wonky behavior around buffer bloat on download (ranging from 20ms to 120ms). Upload is steadier.

In an informal test (watching ping times to google.com while running Ookla), I am seeing significant ping impact (from 16ms to 40-70ms) under load, which suggests that something is still off.
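
The informal ping-under-load check above can be turned into a single number: median RTT while loaded minus median RTT while idle. Below is a minimal sketch. The regex assumes the usual `time=16.2 ms` (Linux/BSD) or `time=16ms` / `time<1ms` (Windows) ping output format, and the sample output uses a documentation address and made-up values in the ranges reported above.

```python
import re
from statistics import median

# Matches the RTT field in typical ping output, e.g. "time=16.2 ms"
# (Linux/BSD) or "time=16ms" / "time<1ms" (Windows).
RTT_RE = re.compile(r"time[=<]([\d.]+)\s*ms")


def parse_rtts(ping_output: str) -> list[float]:
    """Extract round-trip times in milliseconds from raw ping output."""
    return [float(m) for m in RTT_RE.findall(ping_output)]


def added_latency_ms(idle_ms: list[float], loaded_ms: list[float]) -> float:
    """Median RTT under load minus median idle RTT: a rough bufferbloat figure."""
    return median(loaded_ms) - median(idle_ms)


# Hypothetical samples (192.0.2.1 is a documentation address)
idle_out = """64 bytes from 192.0.2.1: icmp_seq=0 ttl=117 time=16.0 ms
64 bytes from 192.0.2.1: icmp_seq=1 ttl=117 time=15.8 ms
64 bytes from 192.0.2.1: icmp_seq=2 ttl=117 time=16.3 ms"""
loaded_out = """64 bytes from 192.0.2.1: icmp_seq=0 ttl=117 time=42.0 ms
64 bytes from 192.0.2.1: icmp_seq=1 ttl=117 time=55.5 ms
64 bytes from 192.0.2.1: icmp_seq=2 ttl=117 time=70.1 ms"""

idle = parse_rtts(idle_out)
loaded = parse_rtts(loaded_out)
print(f"added latency under load: {added_latency_ms(idle, loaded):.1f} ms")
```

Using the median rather than the mean keeps one stray spike from dominating the result. Comparing this figure with the limiter on and off, at the same load, is a fairer test than comparing different speed test sites.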

-

@keylimesoda said in QoS / Traffic Shaping / Limiters / FQ_CODEL on 22.05:

@netblues I'm getting different behavior on different speedtests which is odd. Could be the UDP issue?

On Ookla and FAST, the WAN down shows as scaling correctly. And in fact on FAST it shows almost no bufferbloat.

On Waveform test, it seems to ignore the download limiter, and shows all kinds of wonky behavior around buffer bloat on download (ranging from 20ms to 120ms). Upload is steadier.

In an informal test (watching ping times to google.com while running Ookla), I am seeing significant ping impact (from 16ms to 40-70ms) under load, which suggests that something is still off.

I have been seeing really weird results on the Waveform test. It seems broken. I have a 1Gbps connection and it will show over 1200Mbps and then go all over the place showing increased latency. I have my limiter set at 940Mbps. Speedtest (Ookla) seems to respect the limiters.

-

@keylimesoda Try limiting to a ridiculously slow rate, something like 10Mbit down and 10Mbit up, and see what happens there.

If the line suffers from large swings in speed, limiters don't work well.
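
One way to see why: a limiter only controls the queue while it is the slowest point in the path. Whenever the line's real capacity drops below the limiter setting, the queue builds upstream (at the ISP or radio side), where the limiter's AQM (e.g. FQ_CODEL) cannot reach it. The sketch below is a simplified model with hypothetical capacity samples. It counts how often the limiter is actually the bottleneck.

```python
def limiter_is_bottleneck(capacity_mbps: list[float], limit_mbps: float) -> list[bool]:
    """For each capacity sample, True if the limiter (not the line) sets the rate.

    When the line's capacity falls below the limiter setting, the queue forms
    upstream, outside the limiter's control (simplified model).
    """
    return [limit_mbps < cap for cap in capacity_mbps]


def controlled_fraction(capacity_mbps: list[float], limit_mbps: float) -> float:
    """Fraction of samples in which the limiter controls the queue."""
    flags = limiter_is_bottleneck(capacity_mbps, limit_mbps)
    return sum(flags) / len(flags)


# Hypothetical capacity samples in Mbit/s
ftth = [940, 945, 938, 942, 941, 939]   # stable fibre line
five_g = [310, 120, 450, 90, 260, 180]  # swings widely

print(controlled_fraction(ftth, 900))    # limiter below every sample
print(controlled_fraction(five_g, 200))  # only some samples exceed 200
print(controlled_fraction(five_g, 80))   # a very low limit stays in control
```

This is also why testing with a ridiculously low limit is useful. If latency stays flat at 10Mbit but not near the line rate, the limiter itself is working and the line's capacity is dipping below the configured rate.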

I see this on an install that has both a stable FTTH line and a 5G line on the same box.

The 5G line leaves a lot to be desired at C, while the FTTH line gets A+ on bufferbloat tests.

-

I just went ahead and bought a TAC Pro sub. Order SO22-30515. Hope I can get some assistance next week.