Traffic Shaping Performance issues

-

Be warned, too large of a buffer will add bloat.

Also,

What do you mean by bloat in this context? Are we just talking about added RAM use?

My pfSense box has 2GB of RAM, only because I wanted to take advantage of the dual channel performance, so I needed two sticks of RAM, and it turns out you just can't buy anything smaller than a 1GB stick these days :p

With 2GB of RAM, I've never seen pfSense use more than ~5%. I think I can probably live with a little RAM bloat if that's the extent of it.

-

He means bufferbloat: latency caused by oversized network buffers.

Regarding your original question, if you put in 150Mbit then you should get practically 150Mbit. If you are not, then something is wrong. Honestly, I usually only see this strange behavior when someone is new to pfSense, so I assume misconfiguration, but I could be wrong. Double-check your settings and perhaps show pictures of your queues. Make sure to reset states between queue/firewall changes.

Plenty of us use pfSense, and when we set up a 10Mbit queue, that queue moves 10Mbit. Long-time users would quickly recognize if the values were skewed.

-

Thank you. I'll do some poking around and post some screenshots.

While I am not new to pfSense at all (been using it since ~2010) I AM new to traffic shaping. I tried to set it up once a few years back, but it got complicated and I gave up.

While I understand the basics, I've always gotten myself confused by how the firewall rules work, and how that interacts with queues etc. I have no problem blocking or opening certain ports and setting up port forwards, but any more complicated than that, and I've quickly become confused and I've never found a good write-up that explains the whole thing adequately.

I'll happily accept recommendations for further reading :)

When I built this router box last week, I simply saved my old configuration from the pfSense install I had running as a guest on my ESXi server. Hopefully there are no old settings in there screwing things up. Maybe I should try a clean install. It doesn't take THAT long to set up my port forwards and static DHCP leases…

-

The official pfSense wiki is good. Otherwise, there's the official pfSense book, "The book of pf", and of course Google.

Firewall rules match packets and then assign them to a queue of your choosing. Then you set up the queue to have whatever traffic-shaping characteristics you want.
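A toy model in Python may make the match-then-assign idea more concrete. This is only a sketch, not how pf is implemented: the `Packet` and `Rule` classes, the queue names, and the last-match-wins ordering are all assumptions chosen for illustration, and the real evaluation order depends on the rule type.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Packet:
    proto: str      # e.g. "tcp" or "udp"
    dst_port: int


@dataclass
class Rule:
    queue: str                       # queue assigned when the rule matches
    proto: Optional[str] = None      # None matches any protocol
    dst_port: Optional[int] = None   # None matches any port

    def matches(self, pkt: Packet) -> bool:
        return (self.proto in (None, pkt.proto)
                and self.dst_port in (None, pkt.dst_port))


def assign_queue(rules: List[Rule], pkt: Packet, default: str = "qDefault") -> str:
    """Walk every rule; the last matching rule decides the queue (assumed policy)."""
    chosen = default
    for rule in rules:
        if rule.matches(pkt):
            chosen = rule.queue
    return chosen


# Hypothetical rule set: everything goes to qNormal, SIP traffic to qHigh.
rules = [
    Rule(queue="qNormal"),
    Rule(queue="qHigh", proto="udp", dst_port=5060),
]
print(assign_queue(rules, Packet("udp", 5060)))  # qHigh
print(assign_queue(rules, Packet("tcp", 443)))   # qNormal
```

The rules only pick a queue; how much bandwidth that queue gets is configured separately on the queue itself.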

-

Bufferbloat is why people see high latency during congestion. Get rid of the bloat and you get rid of the latency.

Example: say you set your buffer to hold a gigabit of packets, but you only have a 1Mb/s connection. If someone were to send you a bunch of data faster than 1Mb/s, your buffer would eventually fill up. Once your buffer is full, it will take at least 1,000 seconds to empty, and that's assuming no new data comes in. Now what happens if your buffer is nearly full and someone sends a ping packet? The ping has to sit at the back of the line and wait 1,000 seconds before you see it. Now you have 1,000 seconds of latency.
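The arithmetic behind that example is just buffer size divided by link rate. A minimal Python sketch follows; the second call assumes a 50-packet queue of 1500-byte packets, which is an illustrative packet size and not something measured in this thread.

```python
def drain_time_seconds(buffer_bits: float, link_bits_per_second: float) -> float:
    """Time for a full buffer to empty, assuming nothing new arrives."""
    return buffer_bits / link_bits_per_second


GIGABIT = 1_000_000_000
MEGABIT = 1_000_000

# A gigabit of buffered packets draining over a 1Mb/s link.
print(drain_time_seconds(GIGABIT, 1 * MEGABIT))      # 1000.0

# Assumed case: 50 full-size (1500-byte) packets on the same link.
print(drain_time_seconds(50 * 1500 * 8, MEGABIT))    # 0.6
```

Any packet that arrives behind a full buffer waits roughly that long, which is the added latency.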

You want a large enough buffer to absorb bursty traffic, but a small enough buffer that sustained traffic doesn't build a long queue. It's very difficult to balance. CoDel doesn't use data-sized buffers but time-sized buffers. The default target is 5ms. Once packets have been waiting in the buffer longer than 5ms for a sustained period (the interval, 100ms by default), a packet will get dropped. The most likely packet to get dropped is one associated with a greedy flow, signalling it to back off. It's not guaranteed, but CoDel is biased towards dropping packets from high-bandwidth flows and unlikely to drop small packets from low-bandwidth flows, like VoIP, ping, and games.
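Here is a heavily simplified Python sketch of the time-based drop decision. It assumes the usual CoDel defaults of a 5ms target and a 100ms interval, makes one decision per dequeued packet, and does not model per-flow behaviour at all. The class and method names are made up for illustration; this is not the ALTQ implementation.

```python
import math

TARGET = 0.005     # 5 ms of acceptable queueing delay (assumed default)
INTERVAL = 0.100   # delay must stay above target this long before dropping


class CoDelSketch:
    def __init__(self, target: float = TARGET, interval: float = INTERVAL):
        self.target = target
        self.interval = interval
        self.first_above = None   # deadline set when delay first exceeds target
        self.dropping = False
        self.count = 0            # drops in the current dropping episode
        self.drop_next = 0.0      # time of the next scheduled drop

    def should_drop(self, sojourn: float, now: float) -> bool:
        """sojourn: how long the dequeued packet waited; now: current time (s)."""
        if sojourn < self.target:
            # Delay is fine again: stop dropping and clear the deadline.
            self.first_above = None
            self.dropping = False
            return False
        if self.first_above is None:
            # First packet above target: start the clock, tolerate the burst.
            self.first_above = now + self.interval
            return False
        if now < self.first_above:
            return False
        if not self.dropping:
            # Delay stayed above target for a whole interval: first drop.
            self.dropping = True
            self.count = 1
            self.drop_next = now + self.interval / math.sqrt(self.count)
            return True
        if now >= self.drop_next:
            # Still congested: drop again, a little sooner each time.
            self.count += 1
            self.drop_next += self.interval / math.sqrt(self.count)
            return True
        return False
```

A short burst that clears within the interval is never punished, while a standing queue gets drops at a slowly increasing rate until the delay falls back under the target.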

To set your queue size, edit your traffic shaper queue in the "Queue Limit" setting. When not set, it's 50. I think the queue size is meaningless when CoDel is selected.

-

Thank you very much for the explanation.

What is codel, and how do I set it? I can't seem to find it anywhere in the traffic shaper menus?

-

Alright, so I did the test I suggested I was going to above.

I reset pfSense to factory settings to make sure nothing old was hiding in the config.

Ran a bandwidth test without traffic shaping and got my full ~160/160.

Again, I ran the HFSC traffic shaper wizard and wound up with the same results as before: very slow.

So I went back again to a saved config I had from before running the traffic shaper wizard, and instead went to the "by interface" screen and added codelq there for both LAN and WAN. It was confusing though, because this thread suggested no bandwidth needed to be entered for codelq, as its operation is bandwidth independent and it adjusts on its own, but the screen would not let me apply the settings without entering a bandwidth.

It was unclear to me which bandwidth this should be though. The wizard gave me an "upstream" and a "downstream" bandwidth. Here it is just one per interface. The tooltip said that "this is usually the interface bandwidth", so I set both to 1 Gbit/s. Was this the right thing to do, or should I have chosen something closer to my external bandwidth (or just under, like with HFSC), and in that case which do I enter where, upstream and downstream wise?

Anyway, I did a bandwidth test after applying codelq, and it appears as if I have my full bandwidth, but how do I know it is actually working and doing its magic in the background?

Again, appreciate all your help.

–Matt

-

The answers to your questions are out there. Searching this forum & Google can answer everything you are asking.

Sorry for the callousness, but I spent ~9 months researching traffic-shaping (mostly relating to pfSense) and I know for a fact that you can find the answers if you search & read. If, after exhaustively researching traffic-shaping, you still have an unsolved problem, please ask. I (and very likely others) will be interested in solving your problem.

But otherwise, much smarter & more educated people have already answered your questions. You simply need to find those answers. ermal's posts in this forum are a favorite resource of mine, but most of his topics may be too advanced. Easy solutions are rare. :)

-

Shaping is bloody simple, but everything you read out there makes it seem hard for some reason. I'll see about creating some screenshots some time this weekend.

There are only four things you need to do

HFSC

- Set your interface bandwidth

- Create your queues

- Set your queue minimum bandwidths

- Assign traffic to your queues

#1 seems to be the #1 reason for issues. I have no idea how this part is so hard. If you only have 10Mb of bandwidth, set your interface to only have 9Mb.

#2 seems to be the next issue. Stop creating complicated hierarchical queues; just create three: high, normal, and idle.

#3 I can't explain this, same as #1. Stop thinking about priorities and blah blah blah. How much minimum bandwidth do you want for this queue?

#4 The wizard does create these for you. Unlike normal firewall rules, for floating rules the last one wins.

P.S. Don't use realtime. It's confusing, seems to have little benefit in most cases, and is easy to mess up.
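As a sanity check on steps #1 and #3, the queue minimums should fit inside the interface bandwidth. A small Python sketch under assumed numbers: the 9Mb interface comes from the example above, but the per-queue minimums are hypothetical, as is the helper name.

```python
from typing import Dict


def spare_bandwidth(interface_mbit: float, minimums_mbit: Dict[str, float]) -> float:
    """Return bandwidth left after all queue minimums; fail if oversubscribed."""
    spare = interface_mbit - sum(minimums_mbit.values())
    if spare < 0:
        raise ValueError(
            f"queue minimums exceed interface bandwidth by {-spare} Mbit"
        )
    return spare


# 10Mb line, interface set to 9Mb, three simple queues (minimums are made up).
interface_mbit = 9.0
minimums = {"high": 2.0, "normal": 5.0, "idle": 1.0}
print(spare_bandwidth(interface_mbit, minimums))  # 1.0
```

If the minimums add up to more than the interface bandwidth, the shaper cannot honour all of them at once, so it is worth catching that before applying the config.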

-

- Set your interface bandwidth

[…]

#1 seems to be the #1 reason for issue. I have no idea how this part is so hard.

I do.

I worked quite a while on this until I found out that the selection 'kbit/s' in the bandwidth setting was plain wrong. It should have been 'bit/s'. (Don't know whether this has been fixed in 2.3 but the bug is still in 2.2.6.)

If as a consequence of this bug I set the bandwidth limit to effectively 10 bit/s (intending it to be 10 kbit/s) the performance was of course completely off …

-flo-

-

Here is my setup. I'm only showing the WAN because the LAN is mostly identical, just with slightly lower interface bandwidth. Some of my settings, like queue size when using CoDel, are probably not correct, and I have funny percentages, but this is exactly what I use. Take it, leave it, learn from it. Do whatever.

-

I worked quite a while on this until I found out that the selection 'kbit/s' in the bandwidth setting was plain wrong. It should have been 'bit/s'. (Don't know whether this has been fixed in 2.3 but the bug is still in 2.2.6.)

If as a consequence of this bug I set the bandwidth limit to effectively 10 bit/s (intending it to be 10 kbit/s) the performance was of course completely off …

-flo-

Thanks for pointing that out. So it's actually bit labeled as Kbit, huh? I was confused when the speed test showed 10% of my actual download after setting the bandwidth in Kbit. I settled for Mbit and it's fine. Setting the bandwidth in Kbit via the wizard works, though; it's editing it to Mbit and back to Kbit afterwards that messes it up for me. It's like that on both 2.3 and 2.3.1.

Hmm… I have used pfSense since 2.1.x and kbit has always been kbit. With 2.3 I am using kbit with no problems.

I extensively tested 2.2.x using mostly "kbit" and never experienced this bug. Are we sure it even exists? Has it been submitted to the pfSense bug tracker?

-

I extensively tested 2.2.x using mostly "kbit" and never experienced this bug. Are we sure it even exists?

I already reported this nearly one year ago, see this thread: Noob guide to Traffic Shaping. You already commented on that thread back then.

At least one user confirmed this problem, others didn't.

Has it been submitted to the pfSense bug tracker?

I didn't, should I?

-flo-

-

Has it been submitted to the pfSense bug tracker?

I didn't, should I?

-flo-

Absolutely.

You would need to share some detailed, repeatable steps that consistently show that "kbit" is actually "bit", then someone will either confirm the bug (and fix it) or have further questions.

It might just be easier to post the info here and someone more experienced will submit a bug.

-

It's my mistake; I couldn't duplicate the issue. I knew that traffic wizard always worked, and my internet upload speed is 20% of my download.

-

While editing the shaper, I may have plugged in up/down bandwidth numbers in lan/wan instead of the other way round.

-

I also had "Disable hardware large receive offload" unchecked - it hinders my real speed test results.

Hence I got a speed test result of about 10% of the download speed, leading me to think that kbits might be bits. Sorry for the confusion : D

-

Well, I did do some searching, and I THINK I've come up with the following, but allow me to bounce it off of you guys to make sure:

In the "by interface" section under Traffic Shaping set both LAN and WAN to codelq

Set bandwidth on WAN interface to ~97% of upstream bandwidth

Set bandwidth on LAN interface to ~97% of downstream bandwidth.

Now, I also read something interesting about maybe seeing better results of fair distribution of bandwidth (because apparently codel does this poorly) by setting the primary to fairq, and using codel in a child queue.

I may have to try this.

It would seem making edits to the pfSense Traffic Shaping wiki page with some of this information would be good. That's where I checked before I posted this thread, but it was lacking. People's eyes glaze over if they have to read through many-page forum threads before finding that needle in a haystack that answers their question. I'd do it myself, but I'm not convinced I have a complete enough understanding of how it works yet to do so.

-

You would need to share some detailed, repeatable steps that consistently show that "kbit" is actually "bit", then someone will either confirm the bug (and fix it) or have further questions.

I worked on this, but right now I cannot reproduce it anymore. Originally the results differed from the configured values by about a factor of 10. This is no longer the case in my setup.

I'll keep an eye on this.

-flo-

-

Always reset the states, or even just restart pfSense, when playing with traffic-shaping.

Personally, my first few months playing with QoS & pfSense included lots of "why is it doing that?!" situations. Like you, I even thought that the bit/kbit/mbit might be inaccurate. Though, after lots of reading & testing, eventually my configurations started to perform as I predicted.

-

You have it pretty right. My upload connection is ~760Kbit link-rate, with a real-world bitrate of ~666Kbit, so I set my queue to 640Kbit. My ADSL is very stable, so 640 works well enough.
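For what it's worth, those numbers land close to the ~97% rule of thumb. A quick Python check using only the figures quoted above; the function names are just for illustration.

```python
def headroom_percent(measured_kbit: float, configured_kbit: float) -> float:
    """Percentage of the measured rate left unused by the shaper setting."""
    return 100.0 * (1.0 - configured_kbit / measured_kbit)


def shaped_rate(measured: float, fraction: float = 0.97) -> float:
    """Shaper bandwidth as a fraction of the measured real-world rate."""
    return measured * fraction


# 666Kbit measured, 640Kbit configured.
print(round(headroom_percent(666, 640), 1))   # 3.9

# What the ~97% rule would give for the same link.
print(round(shaped_rate(666)))                # 646
```

The point of the headroom is that the shaper's queue, not the modem's buffer, becomes the bottleneck, so the fraction should be applied to the rate you actually measure and not to the advertised link rate.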

CODELQ is dirt simple, but yeah, there is no fair queueing. You can use either HFSC (Hierarchical Fair Service Curve) or FAIRQ and select the "CoDel Active Queue" check-box to get both fair queueing & codel.

Yeah… searching the forums can be annoying, but you gotta do it. We (pfSense forumites) are not very responsive when it's obvious that a poster has not searched for an answer.

-

Hmm, so I finally got around to working on this today.

I did several speed tests in a row to establish downstream at about 152Mb/s and upstream at about 161Mb/s.

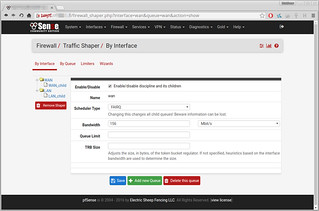

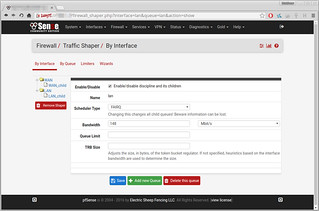

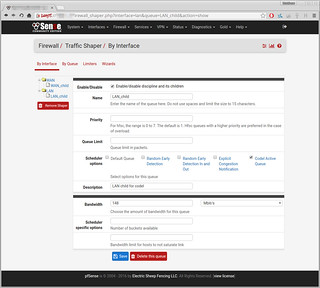

Here is how I set it up:

After which I saved all my settings, and made sure to reload the firewall rules.

Then, just as a sanity check I ran another speed test:

This suggests that it isn't working, as it is allowing this single process to exceed the bandwidth limits (148 and 156 respectively).

Any idea what I might be doing wrong?

Thanks,

Matt