Snort + Barnyard2 + What?

-

Hey,

Thanks for the big update. I stopped posting at Update 4 because I thought it was getting annoying. I eventually figured out that it was missing plugins, but I have to confess that I did not look at the dependencies (doh). Instead I reviewed the panel JSON and looked up which plugin would, for instance, support geo data. What a roundabout way.

I had to change some column names (the geo field names did not match my setup), change data types, etc., like you said. His Elasticsearch Suricata index template did not work for me at all, so I am using the Grafana one, modified a bit.

I'll review your ideas next... Thanks so much for your help.

-

How are you faring with this? Any useful APIs or views you're willing to share? Let me know if you'd like to see the dashboards I use.

A tip for anyone else who might try this: the EVE JSON encodes packets using base64 -- Scapy can take the base64 packet and convert it into a .pcap for use in Wireshark. That would look something like this:

```python
import base64

from elasticsearch import Elasticsearch
from flask import Flask, send_file
from scapy.all import Ether, wrpcap

app = Flask(__name__)


@app.route("/<flow_id>")
def get_flow_single_packet(flow_id):
    es = Elasticsearch(["YOUR_IP"], scheme="http", port=9200)
    # Find one event for this flow that carries a base64-encoded packet.
    res = es.search(
        index="",
        body={"query": {"match_all": {}}},
        q="flow_id:" + flow_id + " AND _exists_:packet",
        size=1,
    )
    # Decode the packet, parse it as an Ethernet frame, and write it out.
    p = Ether(base64.b64decode(res["hits"]["hits"][0]["_source"]["packet"]))
    wrpcap(flow_id + "-single.pcap", p)
    return send_file(flow_id + "-single.pcap")


if __name__ == "__main__":
    app.run(debug=True, host="0.0.0.0", port=9201)
```

Note: This example is wildly insecure, doesn't clean up the .pcap files after sending them, and only returns a single packet from a flow. It's just an example.

Something like this would let you click from a flow in Grafana and open the packet in Wireshark -- without having to deal with base64 and so forth.
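If you want more than one packet per flow, or want to avoid leaving .pcap files on disk, the classic pcap format is simple enough to build in memory with only the standard library. This is a rough sketch, not what I actually run: `packets_to_pcap` is a made-up name, the timestamps are zeroed for brevity, and it assumes every packet is an Ethernet frame (the same assumption the `Ether(...)` call above makes).

```python
import base64
import struct

PCAP_MAGIC = 0xA1B2C3D4      # classic pcap, microsecond timestamps
LINKTYPE_ETHERNET = 1        # assumption: all frames are Ethernet


def packets_to_pcap(b64_packets, linktype=LINKTYPE_ETHERNET):
    """Return the bytes of a pcap file holding the given base64 frames."""
    # Global header: magic, version 2.4, tz offset, sigfigs, snaplen, linktype.
    out = bytearray(
        struct.pack("<IHHiIII", PCAP_MAGIC, 2, 4, 0, 0, 65535, linktype)
    )
    for b64 in b64_packets:
        frame = base64.b64decode(b64)
        # Record header: ts_sec, ts_usec, captured length, original length.
        # Timestamps are left at zero here; a real version would parse the
        # EVE "timestamp" field instead.
        out += struct.pack("<IIII", 0, 0, len(frame), len(frame))
        out += frame
    return bytes(out)
```

The resulting bytes could be wrapped in `io.BytesIO` and handed to Flask's `send_file`, so nothing needs cleaning up afterwards.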

-

So far I have connected my pfSense filter log to Graylog / Grafana to see some firewall rule statistics. I also connected another remote node's Suricata to the same stream, so I have been setting up new filters to filter on source.

I built some new panels, but this is still early days. I had to restart from scratch after I could not delete an extractor. It turns out Safari is pretty pathetic: it was a browser memory leak, not an app issue.

Will share once I have something novel :)

-

A quick update:

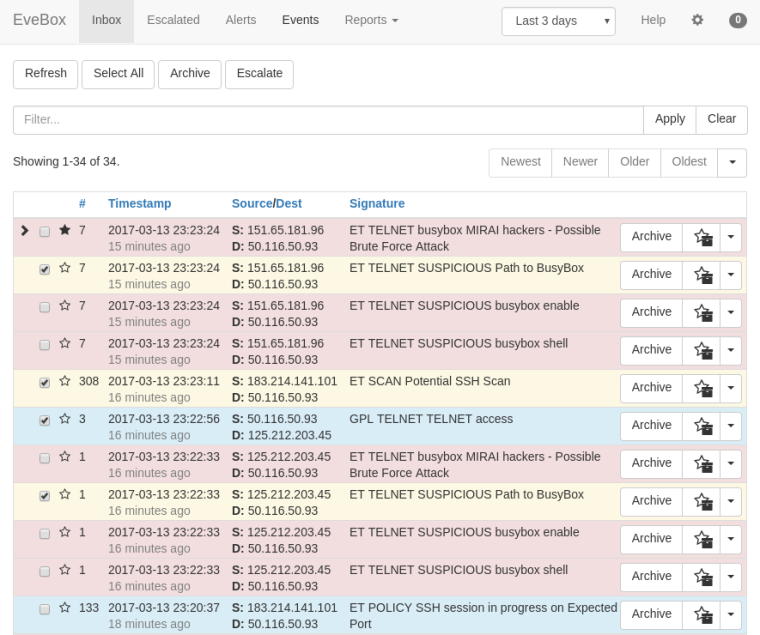

For anyone using Suricata on pfSense, you might want to investigate EveBox. This is by one of the guys who works for OISF (the developers of Suricata).

In its default configuration it's meant to be used with an entire ELK stack and a larger product (SELKS), but you can use it with just Elasticsearch. For that you will need an ES instance, plus Filebeat installed on pfSense and configured to send EVE JSON logs.

This gives a nice interface to search, ignore, download pcaps, etc. I didn't try to put EveBox on pfSense; it's running alongside the ES instance on Ubuntu.

-

For anyone reading this thread and using Barnyard2 with either Suricata or Snort -- you need to be looking at migrating to a Barnyard2 replacement that works with JSON logging. The Suricata team has already announced they will eventually drop support for the unified2 binary logging format needed by Barnyard2. Currently all new development in Suricata for logging is happening on the EVE (JSON) side of Suricata while the Barnyard2 code in Suricata is just being updated in "maintenance mode" (meaning no new features will be added there).

On the Snort side, Snort3 (when it goes to RELEASE) will have a strong JSON logging component (much like EVE in Suricata). So I would not be surprised to see Barnyard2 eventually deprecated in Snort as well. No material updates of any kind have been done to Barnyard2 in the FreeBSD ports tree for at least 4 years. That's another sign Barnyard2 is slowly dying.

About three years ago I experimented with a logstash-forwarder package on pfSense, but it was a little problematic to maintain. I never did publish it as production code. I'm open to suggestions for something similar to logstash that is lightweight and does not come with a ton of dependencies that will load up the firewall with more attack surfaces. The package would need to ingest JSON logs and then export them to an external host for storage and processing (SIEM-style).
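To make the "ingest JSON logs and export them" part concrete, here is a rough sketch of the data flow in Python. It is only an illustration and not a proposal for the package itself (which, as noted, would ideally be something much lighter). EVE output is one JSON object per line, and the Elasticsearch bulk API takes an action line followed by a document line for each event. The function name and the `suricata-eve` index name are made up, and older Elasticsearch versions may also want a document type in the action line.

```python
import json


def to_bulk_payload(eve_lines, index="suricata-eve"):
    """Turn EVE JSON lines into an Elasticsearch bulk-API request body."""
    parts = []
    for line in eve_lines:
        line = line.strip()
        if not line:
            continue
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            # Skip lines that are not valid JSON, e.g. a partially
            # written last line while the log is still being appended to.
            continue
        parts.append(json.dumps({"index": {"_index": index}}))
        parts.append(json.dumps(event))
    # The bulk API requires the body to end with a newline.
    return "\n".join(parts) + "\n" if parts else ""
```

The returned string would then be POSTed to the `_bulk` endpoint of the external host, ideally over TLS.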

-

Is Filebeat not lightweight enough? It will take arbitrary log files and forward them to either a Logstash or an Elasticsearch instance. It is based on the code from logstash-forwarder.

To get this working on pfSense 2.4.4 I used the package here:

https://www.freshports.org/sysutils/filebeat

-

@boobletins said in Snort + Barnyard2 + What?:

> Is filebeat not lightweight enough? It will take arbitrary log files and forward them to either a logstash or elasticsearch instance. It is based on the code from logstash-forwarder
>
> To get this working on pfSense 2.4.4 I used the package here:
>
> https://www.freshports.org/sysutils/filebeat

Yeah, it can probably work. I just wish there was something out there written in plain old C. The filebeat port, like logstash-forwarder, needs the Go language compiled and installed on the firewall in order to execute. In my old-fashioned view, that is just more baggage with more points of exposure to security vulnerabilities.

-

I gave the incorrect link to the port which should have been:

https://www.freshports.org/sysutils/beats/

I'm probably confused, but I don't think I have go installed? It may be required to compile, but not execute?

`find / -name "go*"` doesn't return any results that look like go to me. I can't recall now if it installed and then uninstalled go to compile.

Below is the output from pkg info if that helps at all

```
beats-6.4.2
Name           : beats
Version        : 6.4.2
Installed on   : Wed Nov 28 21:34:33 2018 CST
Origin         : sysutils/beats
Architecture   : FreeBSD:11:amd64
Prefix         : /usr/local
Categories     : sysutils
Licenses       : APACHE20
Maintainer     : elastic@FreeBSD.org
WWW            : https://www.elastic.co/products/beats/
Comment        : Collect logs locally and send to remote logstash
Options        :
        FILEBEAT       : on
        HEARTBEAT      : on
        METRICBEAT     : on
        PACKETBEAT     : on
Annotations    :
        FreeBSD_version: 1102000
Flat size      : 109MiB
Description    :
Beats is the platform for building lightweight, open source data
shippers for many types of operational data you want to enrich with
Logstash, search and analyze in Elasticsearch, and visualize in Kibana.
Whether you're interested in log files, infrastructure metrics, network
packets, or any other type of data, Beats serves as the foundation for
keeping a beat on your data.

Filebeat is a lightweight, open source shipper for log file data. As the
next-generation Logstash Forwarder, Filebeat tails logs and quickly
sends this information to Logstash for further parsing and enrichment or
to Elasticsearch for centralized storage and analysis.

Metricbeat Collect metrics from your systems and services. From CPU to
memory, Redis to Nginx, and much more, Metricbeat is a lightweight way
to send system and service statistics.

Packetbeat is a lightweight network packet analyzer that sends data to
Logstash or Elasticsearch.

WWW: https://www.elastic.co/products/beats/
```

-

@boobletins said in Snort + Barnyard2 + What?:

> I gave the incorrect link to the port which should have been:
>
> https://www.freshports.org/sysutils/beats/
>
> I'm probably confused, but I don't think I have go installed? It may be required to compile, but not execute?
>
> `find / -name "go*"` doesn't return any results that look like go to me. I can't recall now if it installed and then uninstalled go to compile.

lang/go is a build requirement for sure. Further reading and research indicate to me that a Go runtime is bundled into the compiled executable. The Go runtime is described as being similar to the libc library used by C programs, except that in the case of Go the runtime library is statically linked into the executable. There is probably no avoiding the use of one of these "trendy and new sexy" languages when adding a log distributor package. They all seem to have one as a build requirement, a runtime requirement, or both. Heaven forbid, I once even found one that needed Java on the firewall! What was that developer thinking???

I am not familiar with beats. Does it allow the creation of secure connections with the external log host? For example, logstash-forwarder could use SSL certs to establish a secure connection with the logstash host.

-

Yes, you can specify SSL/TLS settings.

One limitation I've run into is that you cannot easily send the same logs to multiple destinations directly from Filebeat. You have to either run multiple instances on the firewall or duplex the logs from, e.g., a central Logstash service to the other locations. Filebeat has load balancing built in, but not duplexing.
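For what it's worth, the duplexing idea itself is small. Here is a hedged sketch of what a central relay would do with each event. This is not Filebeat or Logstash code: `fan_out` and the "sink" callables are hypothetical names standing in for whatever actually ships a line to each destination.

```python
from typing import Callable, Iterable, List

# A sink is any callable that delivers one log line somewhere.
Sink = Callable[[str], None]


def fan_out(event: str, sinks: Iterable[Sink]) -> List[Exception]:
    """Deliver one log line to every sink and return any errors raised."""
    errors: List[Exception] = []
    for sink in sinks:
        try:
            sink(event)
        except Exception as exc:
            # One destination being down should not block the others.
            errors.append(exc)
    return errors
```

The point of collecting the errors instead of raising is that a dead destination only costs you its own copy of the event.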