Site to site tunnel routing through wrong VPN network half the time

-

So I have been trying to set up a site-to-site shared key tunnel with OpenVPN to one of our remote sites.

Setup as follows:

Site A (Headquarters/HQ):

LAN networks: 10.0.0.0/20, 10.3.0.0/20, 10.110.0.0/20, 10.113.0.0/20

pfSense box at 10.0.0.253, LAN gateway is a Dell L3 switch at 10.0.0.1

This pfsense box runs 2 OpenVPN server instances:

ovpns1: Remote Access (User Auth) type

Tunnel network (client address space): 10.10.0.0/20

VPN1 Gateway: 10.10.0.1

ovpns3: Site-to-Site (Shared Key) A <-> B

Tunnel network: 10.100.0.0/30

Site B LAN: 10.15.0.0/22

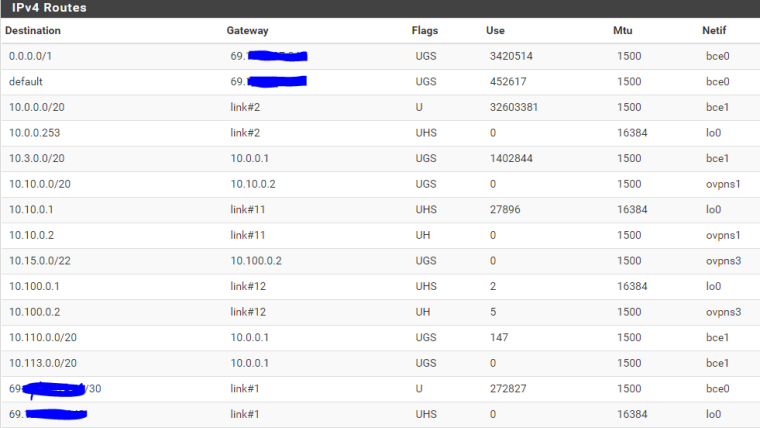
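Before chasing NAT, it is worth ruling out overlapping address space between the LANs and the two tunnel networks. The following is a minimal sketch using Python's standard `ipaddress` module with the networks listed above. The labels such as "HQ LAN 1" and the helper names `find_overlaps` and `owner` are made up for illustration and are not part of pfSense.

```python
import ipaddress
from itertools import combinations

# Networks as listed in the post; the labels are hypothetical.
NETWORKS = {
    "HQ LAN 1": "10.0.0.0/20",
    "HQ LAN 2": "10.3.0.0/20",
    "HQ LAN 3": "10.110.0.0/20",
    "HQ LAN 4": "10.113.0.0/20",
    "ovpns1 tunnel": "10.10.0.0/20",
    "ovpns3 tunnel": "10.100.0.0/30",
    "Site B LAN": "10.15.0.0/22",
}


def find_overlaps(networks):
    """Return every pair of labels whose networks share addresses."""
    parsed = {name: ipaddress.ip_network(cidr) for name, cidr in networks.items()}
    return [
        (a, b)
        for (a, net_a), (b, net_b) in combinations(parsed.items(), 2)
        if net_a.overlaps(net_b)
    ]


def owner(address, networks):
    """Return the label of the most specific network containing address, or None."""
    ip = ipaddress.ip_address(address)
    matches = [
        (ipaddress.ip_network(cidr), name)
        for name, cidr in networks.items()
        if ip in ipaddress.ip_network(cidr)
    ]
    if not matches:
        return None
    # Longest prefix wins, as in a routing table lookup.
    return max(matches, key=lambda m: m[0].prefixlen)[1]


if __name__ == "__main__":
    print("overlaps:", find_overlaps(NETWORKS))
    print("10.15.0.253 belongs to:", owner("10.15.0.253", NETWORKS))
```

With these networks nothing overlaps, so a plain routing lookup for 10.15.0.253 should only ever match the Site B LAN. That points away from addressing and towards something rewriting or redirecting the traffic.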

pfsense at site B is on 10.15.0.253, LAN gateway is a Cisco L3 switch at 10.15.0.1

Now the issue I've been facing and trying to resolve is that anything on B's subnet can ping and access everything at A 100% of the time, but pinging from A to B only succeeds 50% of the time. After a lot of troubleshooting I've discovered the reason for the failure is that it is routing traffic meant for ovpns3 (10.15/22 net) through ovpns1. But this only happens, as I said, half the time. It's predictable as well: ping -t from A to B, and when it is failing I see this in the state table:

ovpns3 icmp 10.10.0.1:62101 (10.0.5.207:1) -> 10.15.0.253:62101

OPT2 icmp 10.100.0.1:39578 (10.0.5.207:55253) -> 10.15.0.253:39578

(OPT2 is the gateway for ovpns1: 10.10.0.1)

As you can see, the interfaces and NAT addresses applied are backwards. If the ovpns3 instance is restarted, or a rule gets edited, or anything changes, including someone else on the network trying to ping from A to B, it will suddenly start working and the state table shows:

LAN icmp 10.0.5.207:1 -> 10.15.0.253:1

ovpns3 icmp 10.100.0.1:16682 (10.0.5.207:1) -> 10.15.0.253:16682

WAN icmp 69.129.x.x:55774 (10.100.0.1:9933) -> 10.100.0.2:55774

as expected. As long as the pinging continues, it will work. If it is stopped, and you wait a few seconds for the state to drop, then try again, the pings fail and the states reappear as they do when failing, meaning with the gateways/networks/interfaces swapped. And again, if someone else starts pinging while I'm failing, they will succeed and vice versa. I spoke to someone on the IRC channel and he said it was like there's a load balancer between the 2 VPNs, but there isn't.
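The mismatch in those state entries can be checked mechanically. The sketch below parses state lines in the format pasted above and tests whether the NAT source address belongs to the tunnel network of the interface the state sits on. The interface-to-network mapping is taken from this post (ovpns3 uses 10.100.0.0/30, OPT2 is ovpns1 with 10.10.0.0/20). The regular expression and the function name `nat_matches_interface` are my own assumptions, not a pfSense tool or API.

```python
import ipaddress
import re

# Tunnel network each VPN interface is expected to NAT from (per this post).
EXPECTED_NAT_NET = {
    "ovpns3": ipaddress.ip_network("10.100.0.0/30"),
    "OPT2": ipaddress.ip_network("10.10.0.0/20"),
}

# Matches: IFACE PROTO NAT_SRC:PORT (ORIG_SRC:PORT) -> DST:PORT
# The parenthesised original source is absent when no NAT was applied.
STATE_RE = re.compile(
    r"^(?P<iface>\S+)\s+(?P<proto>\S+)\s+(?P<src>[^\s:]+):\d+"
    r"(?:\s+\((?P<orig>[^\s:]+):\d+\))?"
    r"\s+->\s+(?P<dst>[^\s:]+):\d+$"
)


def nat_matches_interface(line):
    """Return True/False for NATed states on known VPN interfaces, else None."""
    m = STATE_RE.match(line.strip())
    if m is None:
        raise ValueError(f"unrecognised state line: {line!r}")
    expected = EXPECTED_NAT_NET.get(m["iface"])
    if expected is None or m["orig"] is None:
        return None  # not a VPN interface we know, or no NAT applied
    return ipaddress.ip_address(m["src"]) in expected


if __name__ == "__main__":
    for state in (
        "ovpns3 icmp 10.10.0.1:62101 (10.0.5.207:1) -> 10.15.0.253:62101",
        "OPT2 icmp 10.100.0.1:39578 (10.0.5.207:55253) -> 10.15.0.253:39578",
        "ovpns3 icmp 10.100.0.1:16682 (10.0.5.207:1) -> 10.15.0.253:16682",
    ):
        print(nat_matches_interface(state), state)
```

Both failing entries come back as mismatches, while the working ovpns3 entry matches, which is the "backwards" pattern described above.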

I have confirmed through packet captures that when failing, the packets are being sent wrongly into the 10.10 VPN network. One other thing of interest is that going to System > Advanced > Firewall & NAT, then checking the Disable Firewall option will immediately make things start working. Turning the firewall back on interrupts any connections that had been established. I have determined it's not a firewall rule doing this, leaving NAT as the possible culprit. Is it possible that conflicting outbound NAT rules could result in the wrong NAT being applied and thus the traffic being routed wrongly?

Long story short: ping and access from B to A works 100% of the time, pinging from A to B works every other time, and when it fails it is because it is being sent into the wrong VPN network. How do I prevent this?

-

Did you assign an interface to the site-to-site instance?

-

I did not, is that necessary on both sites?

-

It is if you're running multiple OpenVPN instances on one node.

-

Sorry viragomann but this is just not true.

@int0x1C8 : What happens if you disable your RAS ovpns1? The site to site is working stable in your testing then? Sounds more like a wrong configuration in your RAS somewhere.

A good starting point/checklist is https://docs.netgate.com/pfsense/en/latest/book/openvpn/troubleshooting-openvpn.html

-Rico

-

Well of course it works if I disable the RAS, since then it doesn't have another tunnel to get routed into.

Just tried it and it is still trying to route into the disabled server.

It wasn't actually disabled, now it is and the site-to-site is working 100% in both directions. Anything in the RAS config that I should look at in particular?

-

Show your complete config of both sides with screenshots.

-Rico

-

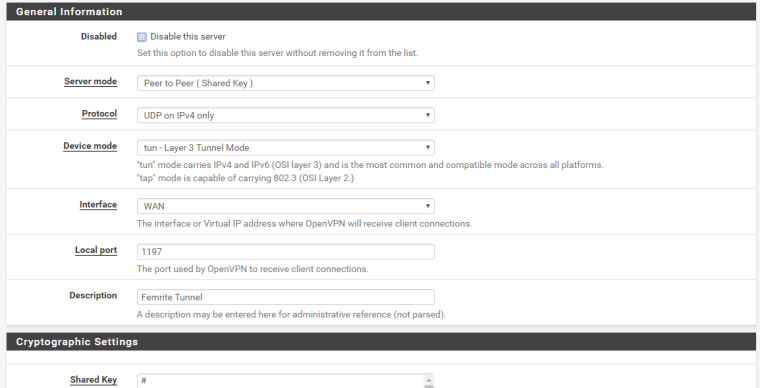

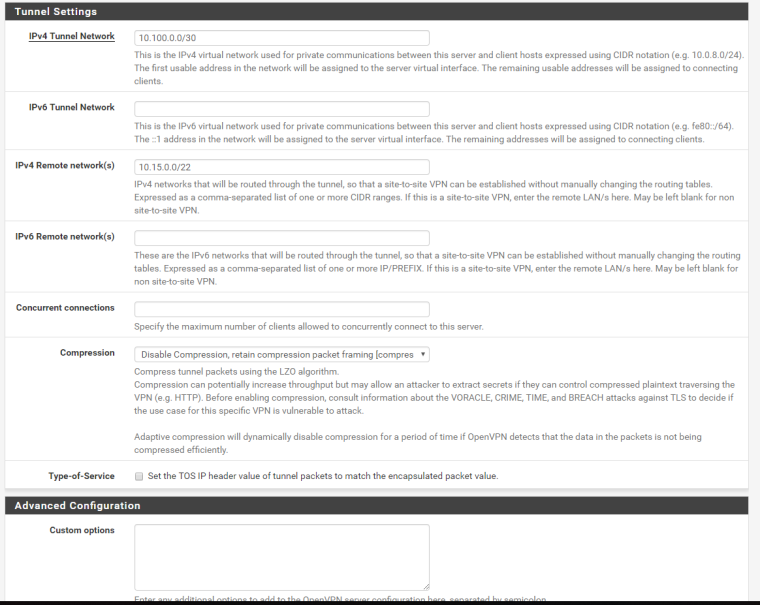

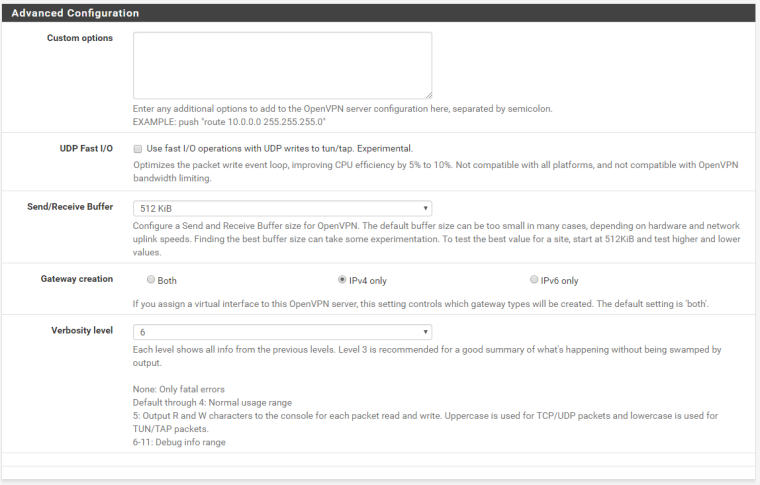

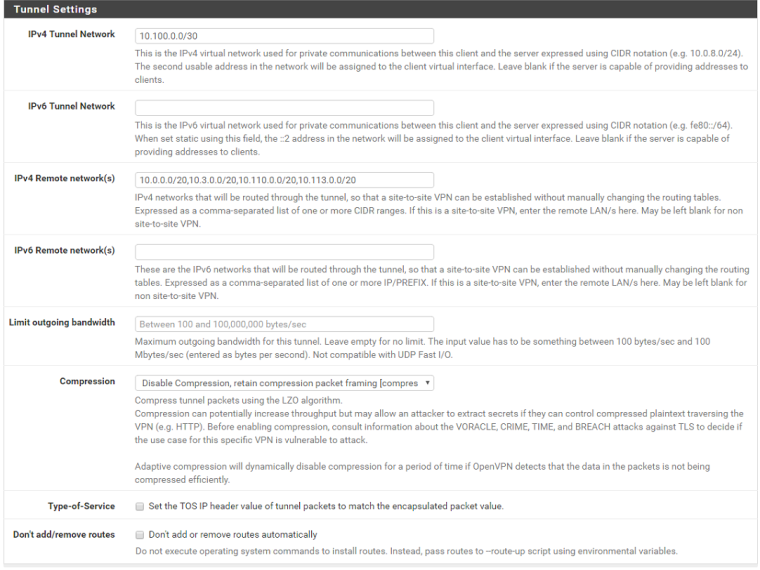

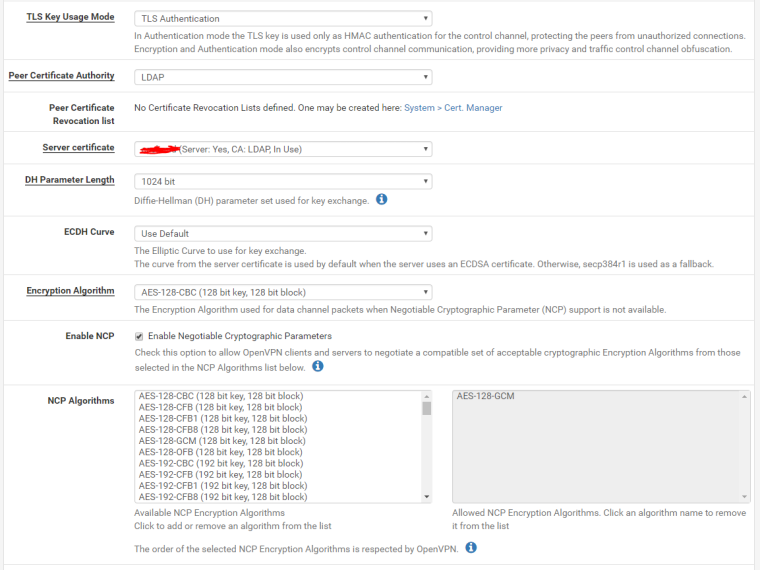

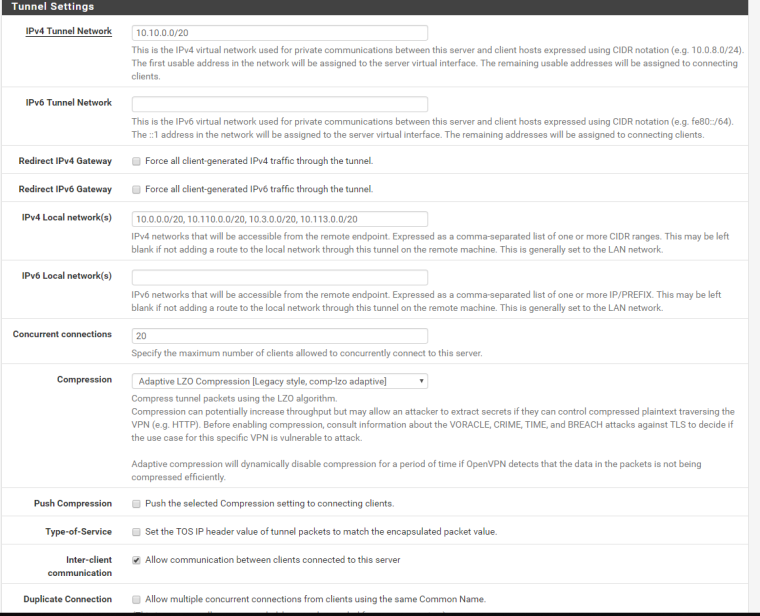

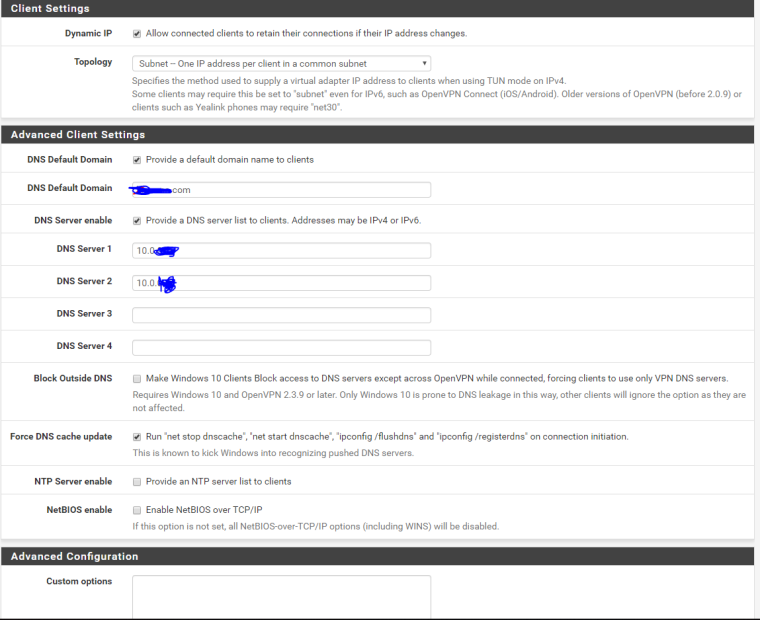

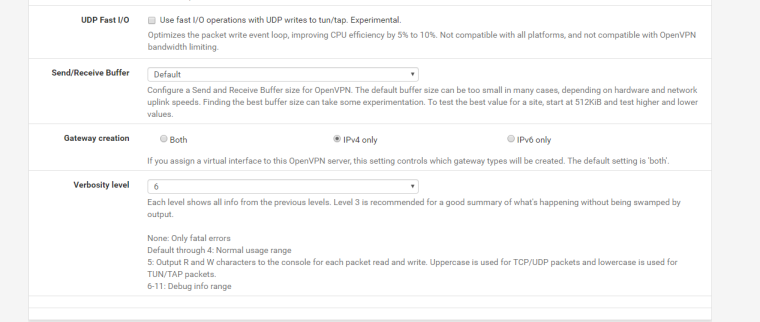

ovpns3 HQ site-to-site tunnel server config:

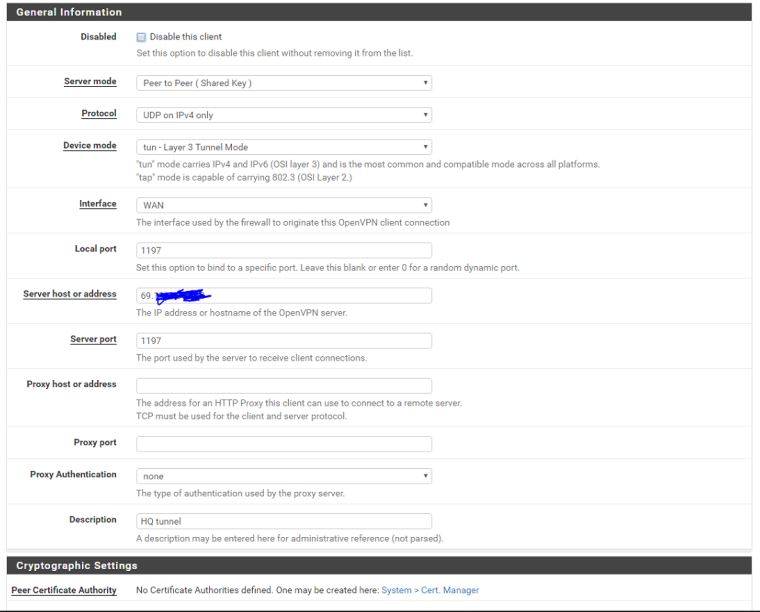

ovpnc1 site B site-to-site tunnel client config:

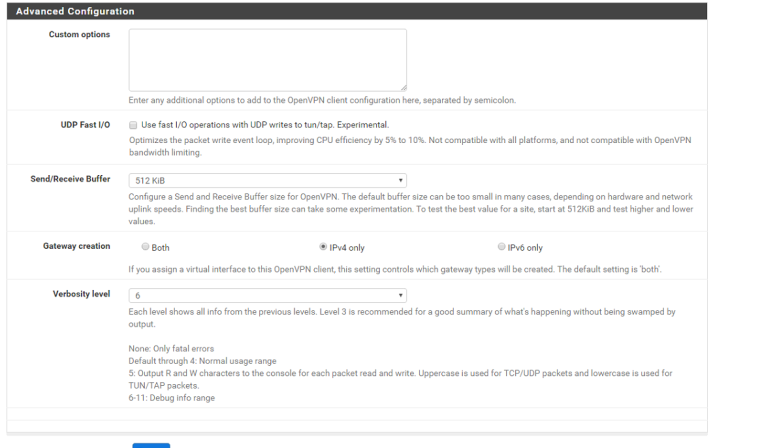

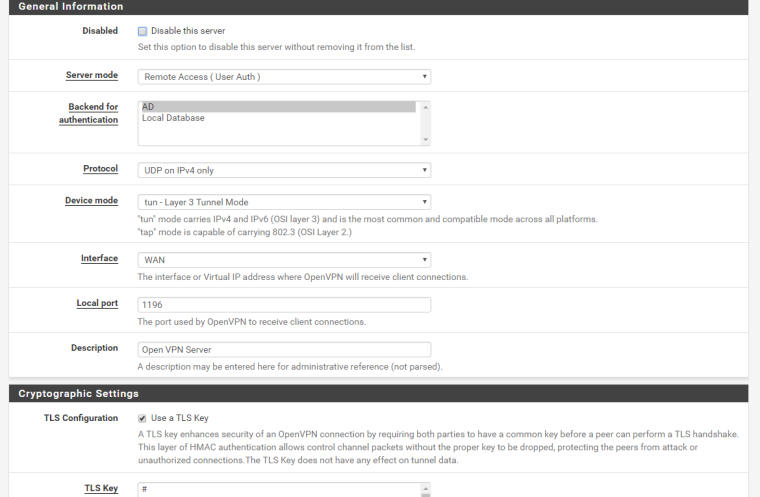

ovpns1 HQ remote access server config:

And here's the route table for pfsense at Site A:

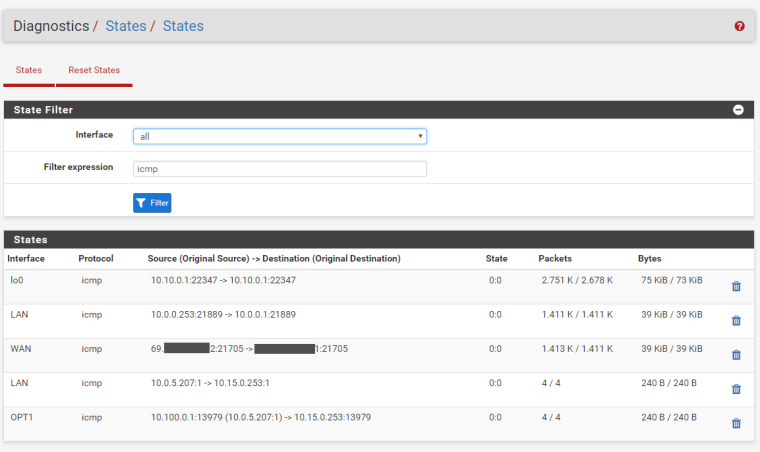

Here you can see states when routing correctly:

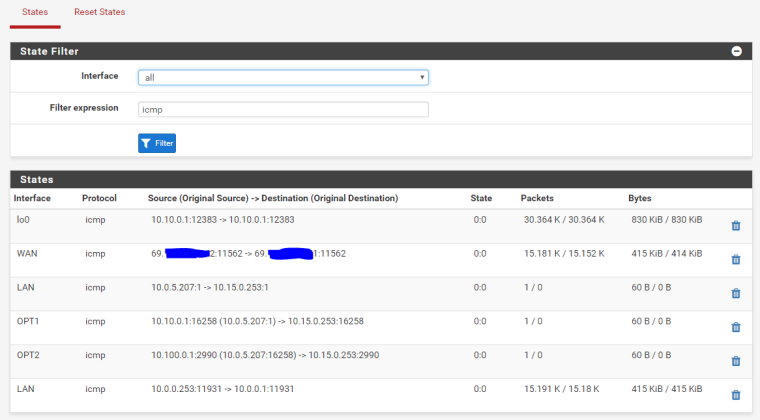

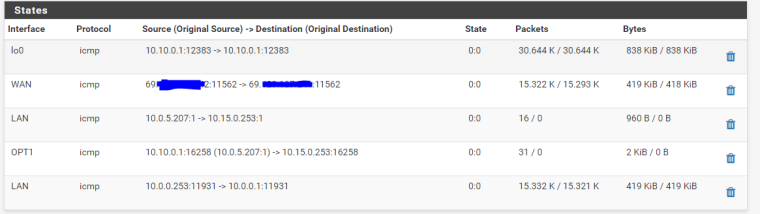

And failing: note in the first one, a state is opened up at first for both tunnels/interfaces, but they are switched around

then one of the states disappears after a second:

If you need anything else let me know.

-

Please disable Inter-client-communication and try again.

-Rico

-

I did and still seeing same behavior. Do I need to fully reboot pfsense? Also we have not yet re-exported the client for that VPN instance since upgrading to 2.4.x, would that have any effect?

-

Well trying a reboot with any strange behavior is always a good idea.

Exporting the client config file does not touch anything tho.

-Rico

-

Ok it seems to be working now. The winning combination was to assign but not enable the ovpns3 interface as OPT1, disable ALL autogenerated NAT outbound rules that applied to VPN interfaces or networks, and then disable inter-client communication on the RAS config as Rico said above. My theory on why this worked is that in pfsense any OpenVPN server is in the OpenVPN interface group, so NAT rules applied to OpenVPN instead of the OPTx interfaces specifically affect all VPN traffic. In addition, perhaps having inter-client communication configured on one of the VPN instances made pfsense think that all OpenVPN interfaces could inter-communicate. Thus it didn't matter which VPN instance it sent the traffic out, it was expecting it could get from one to the other (which is something we want to enable, but I'm just happy enough it's working for now). Can anyone confirm/deny?

In conclusion to any future seekers of information: if you're seeing the same kind of behavior in routing and state tables, look into NAT outbound rules.

-

@int0x1c8 said in Site to site tunnel routing through wrong VPN network half the time:

In addition, perhaps having inter-client communication configured on one of the VPN instances made pfsense think that all OpenVPN interfaces could inter-communicate

I don't think that's the case because inter client com is internal to (each separate instance of) OpenVPN. In other words, the host (pfS) does not see the packets travelling between clients. Enabling it should not affect normal routing.

Tried to enable it again? (If you need it)

P.S.

Better not use inter client com (no way to firewall) but instead use firewall rules to control (even selective) traffic between clients.

-

I enabled it again and it continues to work, which confuses me, since one of the first things I tried was to disable NAT rules, so I don't know why it didn't work then.