DNS Resolver not caching correct?

-

So there is no way to cache longer? With that, unbound is slower than forwarding. To Cloudflare or Google I get 8 ms; to the root servers it is up to 150 ms.

-

@mrsunfire said in DNS Resolver not caching correct?:

So there is no way to cache longer? With that, unbound is slower than forwarding. To Cloudflare or Google I get 8 ms; to the root servers it is up to 150 ms.

Millions are hitting the same DNS when you forward to a data collector like Cloudflare.

That's their big advantage.

But even when you forward to Cloudflare, it has to resolve for you: someone will take the xx msec hit, and then the IP will stay in their cache for the duration of the TTL.

Btw: @KOM, as shown above, twitter.com lasts for about 1800 sec == 30 minutes. At least for me, and for @mrsunfire.

180 msec isn't very fast. My query time is more like 45 msec when going the root server route.

-

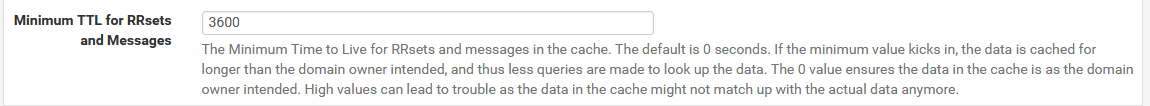

You can set the min TTL time in unbound.

Please be aware of this: it could cause you issues. I have never seen any, but it could keep you talking to an outdated IP for an hour longer than you should be, say if the site owner was switching to a new IP. But I have yet to see any issue. I wouldn't set it too high, but I am not a fan of very short TTLs like 5 minutes and the like.
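The effect of a minimum TTL is just a floor under whatever TTL the record owner published. Here is a minimal sketch of that arithmetic in shell. It is not unbound's actual code: the function name `effective_ttl` is made up for illustration and the 3600-second floor is an assumed value (in unbound.conf the corresponding option is `cache-min-ttl`).

```shell
#!/bin/sh
# effective_ttl RECORD_TTL MIN_TTL
# Illustration only: a cache with a minimum TTL keeps a record for
# max(RECORD_TTL, MIN_TTL) seconds. Hypothetical helper, not part of unbound.
effective_ttl() {
    record_ttl=$1
    min_ttl=$2
    if [ "$record_ttl" -lt "$min_ttl" ]; then
        echo "$min_ttl"
    else
        echo "$record_ttl"
    fi
}

effective_ttl 60 3600      # a 60 s record is held for the assumed 3600 s floor
effective_ttl 13999 3600   # a record already above the floor is unchanged
```

So with a one-hour floor, a record published with a 60-second TTL can keep being answered from cache long after the owner expected it to be refreshed, which is exactly the stale-IP risk described here.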

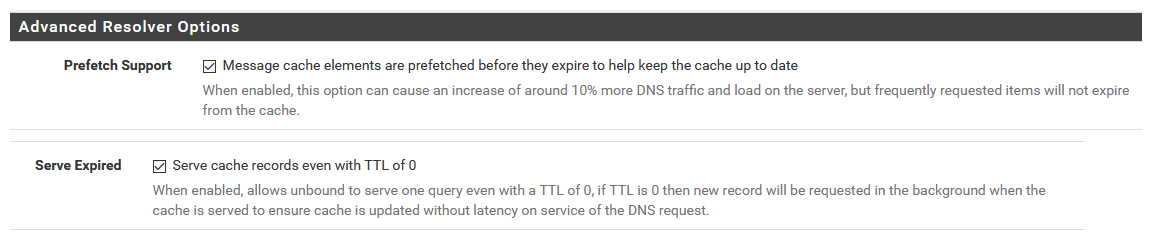

To help, you can also set up prefetch.

I would really suggest you understand what these options do before enabling them. They should not cause you any issues either. In a nutshell, prefetch renews a cache entry if someone asks unbound for it while its TTL is close to expiring.

And Serve Expired (the "serve 0" option) will answer a query for something that has actually expired, and then go update it. This could cause you problems if you're setting the min TTL to something other than what the owner has set on their records. But again, I have not seen any issues with doing it.

Again, I suggest you research what they actually do and understand that they could cause you issues, so that if you run into a problem at some point you remember you made these changes and can check whether they are the cause.
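To make the two behaviours concrete, here is a rough sketch of the decision a cache makes on each query. It is an illustration, not unbound's implementation: `cache_action` is a hypothetical helper, and the "last 10% of the original TTL" prefetch window is my understanding of unbound's behaviour, so treat it as an assumption.

```shell
#!/bin/sh
# cache_action ORIG_TTL REMAINING_TTL SERVE_EXPIRED
# Illustration only (hypothetical helper, not unbound code). Prints what a
# cache with prefetch and serve-expired style behaviour would do on a query
# for an entry it already holds.
# Assumption: prefetch triggers in the last 10% of the original TTL.
cache_action() {
    orig=$1
    remaining=$2
    serve_expired=$3   # "yes" or "no"
    if [ "$remaining" -le 0 ]; then
        if [ "$serve_expired" = "yes" ]; then
            echo "answer stale entry, then refresh"
        else
            echo "resolve first, then answer"
        fi
    elif [ $((remaining * 10)) -le "$orig" ]; then
        echo "answer from cache, then prefetch"
    else
        echo "answer from cache"
    fi
}

cache_action 1800 900 yes   # plenty of TTL left
cache_action 1800 120 yes   # within the last 10% of 1800 s
cache_action 1800 0 yes     # expired, serve-expired on
cache_action 1800 0 no      # expired, serve-expired off
```

Note that every branch assumes the entry is still in the cache. None of this helps once the cache itself has been emptied.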

edit: BTW, the TTL for twitter.com is 1800 seconds, or 30 minutes. You can find that by querying an authoritative NS for that domain directly, say the one from the SOA, for example:

    ;; QUESTION SECTION:
    ;twitter.com. IN A

    ;; ANSWER SECTION:
    twitter.com. 1800 IN A 104.244.42.129
    twitter.com. 1800 IN A 104.244.42.193

    ;; AUTHORITY SECTION:
    twitter.com. 13999 IN NS d.r06.twtrdns.net.
    twitter.com. 13999 IN NS d01-01.ns.twtrdns.net.
    twitter.com. 13999 IN NS ns3.p34.dynect.net.
    twitter.com. 13999 IN NS b.r06.twtrdns.net.
    twitter.com. 13999 IN NS d01-02.ns.twtrdns.net.
    twitter.com. 13999 IN NS ns1.p34.dynect.net.
    twitter.com. 13999 IN NS a.r06.twtrdns.net.
    twitter.com. 13999 IN NS ns2.p34.dynect.net.
    twitter.com. 13999 IN NS c.r06.twtrdns.net.
    twitter.com. 13999 IN NS ns4.p34.dynect.net.

    ;; Query time: 32 msec
    ;; SERVER: 208.78.70.26#53(208.78.70.26)
    ;; WHEN: Thu Aug 29 05:23:29 Central Daylight Time 2019
    ;; MSG SIZE rcvd: 279

-

@Gertjan said in DNS Resolver not caching correct?:

@mrsunfire said in DNS Resolver not caching correct?:

So there is no way to cache longer? With that, unbound is slower than forwarding. To Cloudflare or Google I get 8 ms; to the root servers it is up to 150 ms.

Millions are hitting the same DNS when you forward to a data collector like Cloudflare.

That's their big advantage.

But even when you forward to Cloudflare, it has to resolve for you: someone will take the xx msec hit, and then the IP will stay in their cache for the duration of the TTL.

Btw: @KOM, as shown above, twitter.com lasts for about 1800 sec == 30 minutes. At least for me, and for @mrsunfire.

180 msec isn't very fast. My query time is more like 45 msec when going the root server route.

But why is unbound not showing 0 ms again after 10-20 seconds? It seems the TTL is much shorter for me.

I have prefetch support enabled.

-

if you query something, back to back you can see the ttl count down..

;; ANSWER SECTION:

twitter.com. 3600 IN A 104.244.42.65

twitter.com. 3600 IN A 104.244.42.193;; ANSWER SECTION:

twitter.com. 3598 IN A 104.244.42.193

twitter.com. 3598 IN A 104.244.42.65;; ANSWER SECTION:

twitter.com. 3596 IN A 104.244.42.65

twitter.com. 3596 IN A 104.244.42.193then a a bit later

;; ANSWER SECTION:

twitter.com. 3472 IN A 104.244.42.193

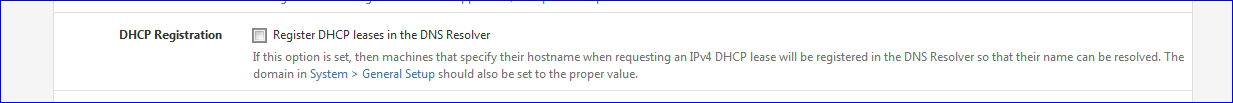

twitter.com. 3472 IN A 104.244.42.65edit: If your seeing it have to go query for it again, before the ttl has expired - maybe you restarted unbound!! Say if you have it registering dhcp, every time new dhcp lease it will restart unbound and you will lose your cache.

A restart of unbound will clear the cache.

You can look at the status to see how long it's been up:

    [2.4.4-RELEASE][admin@sg4860.local.lan]/: unbound-control -c /var/unbound/unbound.conf status
    version: 1.9.1
    verbosity: 1
    threads: 4
    modules: 2 [ validator iterator ]
    uptime: 41538 seconds
    options: control(ssl)
    unbound (pid 43528) is running...

-

What about the "Serve Expired" option? This should serve cached records even with a TTL of 0.

-

Not if the cache has been wiped because unbound has restarted.

When users have issues with unbound, it is normally related to it being restarted all the time: they have it registering DHCP leases and have lots of them happening all the time. Or, say, pfBlocker is restarting it.

Every time unbound restarts the cache is flushed.

-

And there you have it:

@johnpoz said in DNS Resolver not caching correct?:

maybe you restarted unbound! Say you have it registering DHCP: every time there is a new DHCP lease it will restart unbound, and you will lose your cache.

A restart of unbound will clear the cache.

Not a real issue, but very few people are aware of this.

Running pfSense with default settings, DHCP requests will increase with the number of clients on the LAN.

When registering a new lease, the DHCP daemon will kick (restart) the DNS cache, i.e. unbound. Which implies: cache lost.

Solution: do nothing with your devices.

Declare static DHCP entries as much as possible on your LAN(s), using their MAC addresses.

When done, remove this check:

edit: true, other processes can also restart unbound. pfBlocker might be one of them.

The DNS logs will tell you how often it restarts.

-

Run this command:

    unbound-control -c /var/unbound/unbound.conf stats_noreset | grep rrset.cache

What do you show for records in your cache? Then restart unbound and do it again.

Before the restart:

    rrset.cache.count=8549

Then I restarted and looked a few moments later:

    [2.4.4-RELEASE][admin@sg4860.local.lan]/: unbound-control -c /var/unbound/unbound.conf stats_noreset | grep rrset.cache
    rrset.cache.count=736

It has already started building up the cache again because things on the network are always doing queries, but on a restart of unbound the cache is flushed.

You can also look at the infra cache value:

    infra.cache.count=5194

That was before, and then after the restart:

    infra.cache.count=621

So yes, if unbound is restarting often, you lose the cache, and then stuff has to be resolved again.
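If you want to compare the before and after numbers without eyeballing them, something like the following could work. It is only a sketch: `stat_value` and `percent_lost` are made-up helper names, and the sample values are the ones from this post.

```shell
#!/bin/sh
# stat_value NAME: read unbound-control stats text on stdin and print the
# value of the line "NAME=value". Hypothetical helper.
stat_value() {
    awk -F= -v key="$1" '$1 == key { print $2 }'
}

# percent_lost BEFORE AFTER: whole-number percentage of entries that are gone.
percent_lost() {
    echo $(( ($1 - $2) * 100 / $1 ))
}

# Sample values copied from the output in this post.
before=$(printf 'rrset.cache.count=8549\n' | stat_value rrset.cache.count)
after=$(printf 'rrset.cache.count=736\n' | stat_value rrset.cache.count)
echo "rrset cache shrank by $(percent_lost "$before" "$after")%"
```

On the firewall itself you would feed it live data instead of the sample lines, e.g. `unbound-control -c /var/unbound/unbound.conf stats_noreset | stat_value rrset.cache.count`.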

-

@johnpoz Thanks, John. I didn't know that unbound could override the default TTL from an authoritative server.

-

I wouldn't suggest normal people do it ;) But if you're fully aware of how DNS actually works, and you're sick of seeing queries for stuff because it has a ridiculously low TTL... 60 freaking seconds, give me a gawd damn break! ;) And I wouldn't suggest you set it too high. An hour seems to me like a good min TTL for records that are under that: 60 seconds, 5 minutes, etc. Those are just too freaking low unless you're about to switch to a different IP.

I think the Amazon DNS defaults to that on purpose, to be honest, because they want more queries because they charge you per query ;) hehehe

I get it, CDN stuff can move around, but put the shit behind some load balancers for freak's sake instead of using such low TTLs.

Altering the min TTL can lead to issues, so you should be well aware of what you're doing before you change it, and know how to troubleshoot whether it is what's causing some issue you run into.

It's not going to help if your cache keeps getting cleared ;) which I would guess is the OP's problem.

edit: The other thing that ticks me off is the lack of a local cache on most of these IoT devices. Since they have no local cache, every time they want to look something up, which can be every freaking minute, they have to do a query for it, so for those local queries it doesn't matter how high the TTL is. They are normally only looking for a couple of things, so the local cache doesn't have to be large. Cache, say, 10 records or something; running a minimal local cache can be done with almost zero resources.

A Synology NAS is like this, so I installed the DNS package just so I could point it at itself for DNS (127.0.0.1), so it's not doing a query every time it needs to look something up. It now has its own local cache and only forwards to pfSense when something is not in its local cache.

Same thing goes for the USG from UniFi. It runs no local cache.. stupid ass shit!

-

@johnpoz said in DNS Resolver not caching correct?:

unbound-control -c /var/unbound/unbound.conf stats_noreset | grep rrset.cache

I don't have the DHCP registration option set because I use static DHCP entries. My unbound doesn't restart. Well, today it did, because I changed something in the settings.

    unbound-control -c /var/unbound/unbound.conf stats_noreset | grep rrset.cache

shows me

    rrset.cache.count=3875

If I use a client to connect to twitter.com and shortly afterwards do a dig twitter.com, it still shows me 15 ms or more. Shouldn't it be 0 ms, because the client already asked for that name? I cleared the DNS cache on that client beforehand.

I think I can enable the "Serve Expired" option, or is that a problem?

How can I see all entries that are cached?

-

And maybe it's 15 ms because that is how long it took to answer from cache. That seems like a really fast response for going all the way from the roots. Also, once unbound has looked up, say, host.domain.tld and then looks for otherhost.domain.tld, it will already have the authoritative NS cached, so it only has to ask them directly and does not walk down from the roots.

You need to check the uptime via the status command I ran above to see how long unbound has been running; there are other things that can reload it, pfBlocker for example.
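The uptime comes back in raw seconds, which is awkward to read. A small sketch that turns the `uptime:` line of the status output into hours, minutes and seconds follows; `uptime_hms` is a made-up helper name and the sample line is the one from the status output earlier in the thread.

```shell
#!/bin/sh
# uptime_hms: read `unbound-control status` text on stdin and print the
# uptime line as hours, minutes and seconds. Hypothetical helper name.
uptime_hms() {
    awk '$1 == "uptime:" {
        s = $2
        printf "%dh %dm %ds\n", int(s / 3600), int((s % 3600) / 60), s % 60
    }'
}

# Sample line copied from the status output earlier in the thread.
printf 'uptime: 41538 seconds\n' | uptime_hms
```

Against a running resolver that would be `unbound-control -c /var/unbound/unbound.conf status | uptime_hms`. If the result is much shorter than the time since you last touched the settings, something restarted unbound and emptied the cache.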

You can look up specific entries, or dump the whole cache if you like.

dump_cache: The contents of the cache is printed in a text format to stdout. You can redirect it to a file to store the cache in a file.

Use the lookup command and it will tell you what is cached for a name and what it would use to look it up.

Or you can just grep the full cache dump for some specific record.

    [2.4.4-RELEASE][admin@sg4860.local.lan]/: unbound-control -c /var/unbound/unbound.conf dump_cache | grep www.google.com
    www.google.com. 1275 IN A 172.217.1.36
    msg www.google.com. IN A 32896 1 1275 3 1 0 0
    www.google.com. IN A 0
    [2.4.4-RELEASE][admin@sg4860.local.lan]/:

So in there you can see what the TTL is in the cache, and that it has a 0 set, so it will respond even if the other cache entry has expired.

Unbound will return from cache unless that entry has been flushed or the whole cache has been flushed. 15 ms sure seems pretty quick for a full resolve from the roots. So either it only talked to the authoritative server it already had cached, or it served it up from cache and was a bit slow doing that.

So looking up specific entries as in the command example above will show you whether the record is in cache, what is left on the TTL, etc.
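To pull just the remaining TTL for one name out of a cache dump, a filter along these lines could be used. It is a sketch under the assumption that record lines look like `name. TTL IN TYPE data`, as in the grep output above; `cached_ttl` is a hypothetical helper name.

```shell
#!/bin/sh
# cached_ttl NAME: read dump_cache text on stdin and print "TYPE TTL" for
# each cached record of NAME. Hypothetical helper; it assumes record lines
# look like "name. TTL IN TYPE data" and skips the "msg" bookkeeping lines.
cached_ttl() {
    awk -v name="$1." '$1 == name && $3 == "IN" { print $4, $2 }'
}

# Sample lines copied from the dump above.
printf '%s\n' \
    'www.google.com. 1275 IN A 172.217.1.36' \
    'msg www.google.com. IN A 32896 1 1275 3 1 0 0' \
    'www.google.com. IN A 0' \
    | cached_ttl www.google.com
```

With live data: `unbound-control -c /var/unbound/unbound.conf dump_cache | cached_ttl www.google.com`. No output means the name is not in the cache at that moment.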

-

@johnpoz said in DNS Resolver not caching correct?:

unbound-control -c /var/unbound/unbound.conf dump_cache | grep www.google.com

If it's cached, it's always 0 ms. I think PCI-E SSD and Core i7 should be fast enough :)

I will test around a bit and see if it's better now with the Serve Expired setting.

    www.google.com. 243 IN A 172.217.21.196
    www.google.com. 243 IN AAAA 2a00:1450:4001:808::2004
    msg www.google.com. IN A 32896 1 243 3 1 0 0
    www.google.com. IN A 0
    msg www.google.com. IN AAAA 32896 1 243 3 1 0 0
    www.google.com. IN AAAA 0

-

I worded that a bit wrong. I meant that I have it set to reply with a 0 TTL, and the entry still shows in the cache with the TTL counting down. Bad wording on my part.

Example of the TTL counting down:

    www.cnn.com. 3595 IN CNAME turner-tls.map.fastly.net.
    msg www.cnn.com. IN A 32896 1 3595 3 2 1 0
    www.cnn.com. IN CNAME 0
    [2.4.4-RELEASE][admin@sg4860.local.lan]/: unbound-control -c /var/unbound/unbound.conf dump_cache | grep www.cnn.com
    www.cnn.com. 3496 IN CNAME turner-tls.map.fastly.net.
    msg www.cnn.com. IN A 32896 1 3496 3 2 1 0
    www.cnn.com. IN CNAME 0
    [2.4.4-RELEASE][admin@sg4860.local.lan]/:

What I would do is query for something with a short TTL, say 60 seconds. Now just keep doing that query every couple of seconds. Do you get a fast response? Do you see the TTL counting down? You should see responses in 1 or 2 ms.
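That repeated-query test can be scripted. The sketch below assumes `dig` is installed on the client and that the client's default resolver is the pfSense box; `query_time_ms` and `repeat_query` are made-up helper names.

```shell
#!/bin/sh
# query_time_ms: read dig output on stdin and print the N from the line
# ";; Query time: N msec".
query_time_ms() {
    awk '/^;; Query time:/ { print $4 }'
}

# repeat_query NAME [COUNT]: run dig COUNT times (default 5), two seconds
# apart, printing one query time per line. Needs dig and a reachable resolver.
repeat_query() {
    name=$1
    count=${2:-5}
    i=0
    while [ "$i" -lt "$count" ]; do
        dig "$name" | query_time_ms
        sleep 2
        i=$((i + 1))
    done
}

# Sample line copied from dig output earlier in the thread.
printf ';; Query time: 32 msec\n' | query_time_ms
```

Running `repeat_query twitter.com 10` should then print one slower first lookup followed by a run of very small numbers if the answers are coming from cache. A jump back up before the TTL has run out points at a flushed cache rather than an expired record.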

-

After some hours I came back home and tested some names again, and now they all show me 0 ms. I think the Serve Expired option solved my problem. Even twitter.com now resolves in 0 ms after 3 hours.

-

Maybe I missed something in the dozen+ posts on this topic, but why does it matter? 0ms vs 12ms is barely noticeable and it only applies to lookups.

-

0 vs 12 is not an issue. But serving something from cache in 0 ms or 12 ms can make a difference vs, say, 500 ms for having to resolve it.

Much of it is more of a tech thing than a "hey, I can notice it's slower" thing as well ;) Even if going to site xyz took 500 ms to resolve, it's unlikely someone could actually notice the page loading .5 seconds slower.

It can be "hey, I query this from the cmd line, why does it take 500 ms when it should be cached locally and take 1 ms?"

In the big picture I think resolving is the better solution. As long as your cache is working as it should, users are never going to notice anything. And you are now getting the info from the horse's mouth, so to speak. In the long run you can also end up doing fewer queries, since you're always going to get the full TTL from the authoritative NS vs something that was cached upstream, where you only get a partial TTL and have to do another query later, only to get a less than full TTL again. So while each forwarded query might be a few ms shorter, you're going to end up doing more queries in the long run.

To actually make a decision you would have to do some real analysis of your overall types and amount of queries and the TTLs you get back when you forward vs resolve. But normally resolving is going to be the better option, though there are always going to be one-offs. Most users don't understand how it all works, and it comes down to "I ask Google for host.domain.tld and get an answer in X ms, vs I resolve it and get it in Y ms", and where X<Y the gut reaction is that forwarding is better. When in the big picture it's prob not.

-

Got it.

-

I could talk about this stuff for hours and hours and hours ;) It's a bit of a hobby/passion with me. My dream job would be just dealing with DNS all day, vs now only now and then ;) I had a cool project a while back, trying to host over 3000 domains for a major player. Trying to explain to them how it's not worth it to try to do such a thing on your own, how it's not cost effective given the bandwidth and equipment required, and how you cannot do it from only 2 locations and provide actually good service; it needs to be global, etc.

It was a fun project even though it came to nothing in the long run and they hosted it elsewhere, and it prob cost my company money. Hosting DNS is not a business we wanted to get into when there are majors with global anycast networks and it is just better to host with them.

I will say this: I would never go back to forwarding my queries anywhere. I will run a resolver on my own, thank you very much. It gives me the control and the info to do what I want, how I want to do it, vs just sending all my queries to X and trusting their responses. But that could just be me; others are very happy just asking x.x.x.x for host.domain.tld and being happy with what they get back. That is not what I want, and I would think most people who have taken the step of moving to pfSense from an off-the-shelf SOHO router like that ability as well.

They can run a resolver, they can forward, they can run a full-blown BIND with a nice GUI if they want, etc. This is one of the best things about pfSense: it gives you options!!! And the ability to use those options without having to dive into the nitty gritty of conf files.

Sorry for the rant, but I love this topic, and I am like 6 beers in already. Stopped for a few after work with a buddy ;)