Carp failures after upgrade to 2.4.5

-

Hi

Each ~15 minutes, my secondary node flood his logs (and my mails :-)) that the master has timed out and he became master. One second later, he logs a new Carp backup event that the master has resumed CARP state.

I have this since I updated both firewalls to 2.4.5-RELEASE this morning.

When I do a packet capture on the master node, I get the info below. Here you can see that there is a gap from a few seconds between 13:45:06 and 13:45:11. In the logs of the secondary node, the carp events start at 13:45:11.Any idea where I can start looking?

I didn't had this problem on 2.4.4.13:45:00.627082 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:01.628076 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:02.629083 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:03.630082 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:04.631072 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:05.632078 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 **13:45:06.633081 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:11.688779 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56)** xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:12.891840 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:14.901001 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:16.451941 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:20.466661 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:22.022676 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:23.023025 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:24.024090 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:25.025091 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:26.026033 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:27.027097 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:28.028169 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:29.029092 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136 13:45:30.030120 00:00:5e:00:01:9b > 01:00:5e:00:00:12, ethertype IPv4 (0x0800), length 70: (tos 0xe0, ttl 255, id 0, offset 0, flags [DF], proto VRRP (112), length 56) xxx.xx.xx.xx > 224.0.0.18: carp xxx.xx.xx.xx > 224.0.0.18: CARPv2-advertise 36: vhid=155 advbase=1 advskew=0 authlen=7 counter=6708048307695276136Thanks

-

I already found that when I reload the filter manually, the problem occurs.

-

Is there high CPU usage at the time? There have been a few posts about CPU spikes and large tables, e.g. https://forum.netgate.com/topic/151819/2-4-5-high-latency-and-packet-loss-not-in-a-vm and https://forum.netgate.com/topic/151690/increased-memory-and-cpu-spikes-causing-latency-outage-with-2-4-5.

-

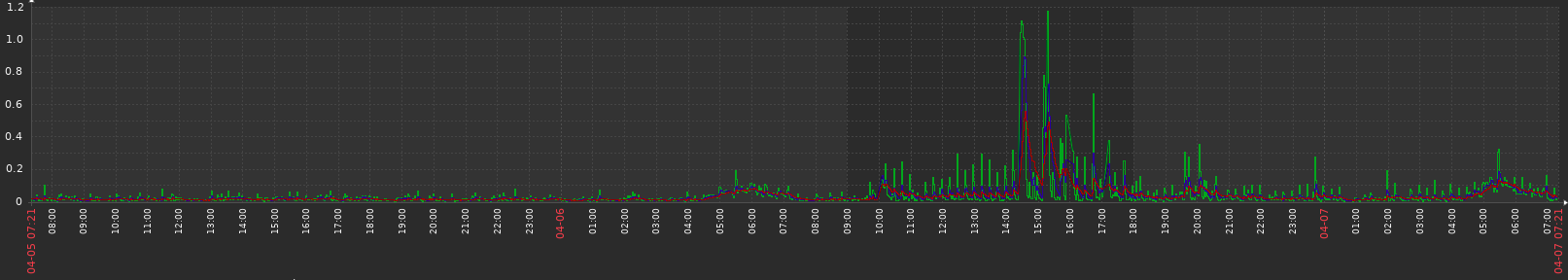

Yes indeed. The CPU spikes when reloading the filter. I did the upgrade to 2.4.5 around 10am on 6 april.

I've seen CPU loads of +10 for ~30 seconds at times when reloading the filter or forcing the config sync (16 cores, 32GB ram).

-

So, I've noticed something interesting in a large environment that I manage. It consists of about a dozen VMs and four hardware gateways.

The interesting bit? Systems with multiple CPUs exhibit this behavior. Those which do not have not.

I had a pair of VMs with 4x CPUs and another pair with 2x CPUs.

I'd reduced the 4x to 1x and will be watching it tomorrow to see if it begins to behave more appropriately. I've got a variety of theories in my head as to why this is and became a problem. But I'm not willing to indulge them just yet. -

Had similar issue with a test setup here. Random packet loss on both servers in HA when applying firewall rules. Also HA failover and reboots resulted in packet loss and flapping interfaces.

I tried to disable things mentioned in the locked thread https://forum.netgate.com/topic/151698/upgrade-ha-cluster-2-4-4-p3-to-2-4-5-persistent-carp-maintenance-mode-causes-gateway-instability but no big difference.

However when I disabled "Block bogon networks" on the wan interface, all problems seems to be gone. No packets lost when applying rules. Reboot of both master and backup results in worst case 1 ping lost.

Running 2x 25G Melanox cards in each server connected to cumulus switches in MLAG/LACP + VLANs. Separate 1G direct link for sync.

-

Hi Safe. See this longer thread: https://forum.netgate.com/topic/151819/2-4-5-high-latency-and-packet-loss-not-in-a-vm

Netgate is working on a solution. -

@Izaac A much delayed update: reducing the VM instances to single CPU/core did indeed resolve the problem. The hardware gateways, which are not so easily so handled -- heh -- still have issues.

So. If you can "get by" with a single core, do that until a fix can roll.