Playing with fq_codel in 2.4

-

@fabrizior "Very curious as to why the limiter isn't improving the avg. lag and the max lag gets worse."

QoS on your endpoint does nothing to change the underlying quality (or lack of it) of your connection. If you are sat on the end of a connection that's terminating in an exchange where they've oversold connectivity and the feed line out of the exchange to the internet is saturated then there's absolutely nothing you can do about that except choose a better ISP and hope their infrastructure is better.

If your max lag gets worse then it's likely your settings are awry. Please try the following:

- delete your limiters

- ensure you aren't running any other QoS within pfSense

- delete your limiter firewall rules

- Go to the limiters, and create the first one as follows:

- tick "enable limiter and its children"

- Give it a name of WANDown

- set your download bandwidth to ~90% of your lowest speedtest.net download score (which I assume is about 450Mbps)

- No mask

- Give it a description of "WAN Downloads"

- Choose CoDel as the queue management algorithm

- Choose FQ_CODEL as the scheduler

- Tick ECN

- Click Save

- Go back and change the CoDel target to 5 and interval to 100, and the FQ_CODEL target to 5, interval to 100, quantum to 300, limit to 20480 and flows to 4096

- Click Save again

- Click "Add New Queue" next to the save button

- Tick to enable this queue

- Give it a name of "WANDownQ"

- No Mask

- Description "WAN Download Queue"

- Queue Management Algorithm = CoDel

- Queue length left blank

- ECN ticked

- Click Save

- Ensure the Target and Interval are set to 5 and 100 respectively (should be the default)

Now go back and create another limiter and queue for the upload with the same settings, entering 90% of the slowest upload speed you get from speedtest.net. Keep all the other parameters the same, and name them to match: WANUp for the limiter and WANUpQ for the queue, since the floating rules below refer to WANUpQ.
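As a rough sketch of the "90% of your slowest speedtest" rule of thumb, something like the following would turn a handful of speedtest runs into starting values for the two limiters. The function name, the rounding to whole Mbit/s and the sample numbers are assumptions for illustration only; they are not anything pfSense itself provides.

```python
def limiter_bandwidth_mbps(speedtest_results_mbps, fraction=0.90):
    """Return a starting limiter bandwidth: a fraction of the slowest result.

    `fraction` defaults to the ~90% suggested in the post; rounding to a
    whole Mbit/s is an assumption made here for convenience.
    """
    if not speedtest_results_mbps:
        raise ValueError("need at least one speedtest result")
    return round(min(speedtest_results_mbps) * fraction)


# Hypothetical speedtest runs in Mbit/s, for illustration only.
download_runs = [452, 460, 472, 426]
upload_runs = [22, 24, 24, 25]

print("WANDown bandwidth:", limiter_bandwidth_mbps(download_runs), "Mbit/s")
print("WANUp bandwidth:", limiter_bandwidth_mbps(upload_runs), "Mbit/s")
```

Treat the result as a first guess; the bandwidth value is the knob you then adjust while testing.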

Then, go into the firewall rules and into floating rules, and you're looking to create 4 rules right at the top of the list, as follows:

Rule 1

- Action = Pass

- Quick = ticked

- Interface = WAN

- Direction = out

- Address family = IPv4

- Protocol = TCP/UDP

- Source = ANY

- Destination = ANY

- Give it a description :)

- Click "Advanced Options"

- Scroll down to "Gateway" and select the WAN gateway

- In the in/out pipe section, set the in pipe to WANUpQ and the out pipe to WANDownQ

- Click Save

Rule 2

- Action = Pass

- Quick = ticked

- Interface = WAN

- Direction = in

- Address family = IPv4

- Protocol = TCP/UDP

- Source = ANY

- Destination = ANY

- Give it a description :)

- Click "Advanced Options"

- Leave the Gateway as default this time

- In the in/out pipe section, this time set the in pipe to WANDownQ and the out pipe to WANUpQ

- Click Save

Rules 3 & 4 are mirrors of rules 1 and 2 except this time selecting IPv6 as the Address Family and using the relevant IPv6 gateway in rule 3 where you select the gateway.
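The in/out pipe assignment is the part that is easiest to get backwards, so here is a small sketch that just writes the four rules down as data. The `LimiterRule` class and `build_rules` function are made up for this illustration; they only mirror the fields described above (direction, address family, pipes, gateway) and do not talk to pfSense in any way.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LimiterRule:
    """Hypothetical record of the fields that differ between the four rules."""
    direction: str        # "out" or "in" on the WAN interface
    address_family: str   # "IPv4" or "IPv6"
    in_pipe: str
    out_pipe: str
    set_gateway: bool     # True when the WAN gateway is selected explicitly


def build_rules(down_queue="WANDownQ", up_queue="WANUpQ"):
    rules = []
    for family in ("IPv4", "IPv6"):
        # Direction "out": in pipe = upload queue, out pipe = download queue,
        # and the matching WAN gateway is selected.
        rules.append(LimiterRule("out", family, up_queue, down_queue, True))
        # Direction "in": the pipes are swapped and the gateway stays default.
        rules.append(LimiterRule("in", family, down_queue, up_queue, False))
    return rules


for number, rule in enumerate(build_rules(), start=1):
    print(f"Rule {number}: {rule}")
```

Printing the list gives rules 1 and 2 for IPv4 followed by their IPv6 mirrors as rules 3 and 4.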

This should give you a working set of limiters.

Now, once you have this, you need to test whether it's going to do you any favours at all (or whether your underlying ISP connection is sh1t). You do this by:

- Measuring your network with the dslreports speedtest with no other load on the network

- Measuring the same, but with a load on the network (e.g. get someone else to run a ton of Youtube/Netflix/etc at the same time)

Your bufferbloat shouldn't vary too much between the two runs. If it varies a lot (e.g. 30ms or more) then you may have an underlying ISP connection that is oversubscribed or of poor quality, or they're already doing some QoS on it. The first thing to check is whether reducing the throughput values in the limiters improves things. If not, and you've brought it down a fair whack, then it's likely the issue is with the underlying pipe.
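A minimal sketch of that comparison, assuming you have noted the latency figure from one idle run and one loaded run. The function names are invented here, and the 30 ms default is simply the rough figure mentioned above, not a hard rule.

```python
def latency_shift_ms(idle_ms, loaded_ms):
    """How far the measured latency moved once the network was loaded."""
    return loaded_ms - idle_ms


def needs_investigation(idle_ms, loaded_ms, threshold_ms=30):
    """True when idle and loaded results differ by the threshold or more."""
    return abs(latency_shift_ms(idle_ms, loaded_ms)) >= threshold_ms


# Hypothetical readings in milliseconds, for illustration only.
print(needs_investigation(idle_ms=25, loaded_ms=32))
print(needs_investigation(idle_ms=25, loaded_ms=70))
```

If it flags a large shift, the next step is the one described above: lower the limiter bandwidth and test again.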

Hope that helps. It's mainly just an amalgamation of all the info given in here over the years, but it's served me well and it might do you to blow away your existing QoS and restart just to get it all fresh.

-

@pentangle

This is a great guide except the firewall rules, where you should use Match action instead of Pass, because rule evaluation will stop with Quick checked and you will unintentionally allow any TCP/UDP traffic in and out of your WAN.

The pfSense docs also recommend the Match action for limiters:

"The match action is unique to floating rules. A rule with the match action will not pass or block a packet, but only match it for purposes of assigning traffic to queues or limiters for traffic shaping. Match rules do not work with Quick enabled."

https://docs.netgate.com/pfsense/en/latest/firewall/floating-rules.html#match-action

-

@mind12 said in Playing with fq_codel in 2.4:

https://docs.netgate.com/pfsense/en/latest/firewall/floating-rules.html#match-action

For reals?

Why has this never come up in the many threads about how to configure the fq_codel limiter?

Also, after setting all this up, traceroute on the router is working fine, but on LAN clients traceroute now unexpectedly shows only the final IP/FQDN for every hop.

What the hell did I break now?

-

@fabrizior

It has come up, I saw it in this thread as well. I was also shocked that even Tom's video from Lawrence Systems used a pass rule, not a match.

Of course, if there is no NAT rule that matches a connection then they can't connect, but the possibility is there.

Traceroute needs another floating rule to work if you use a limiter:

https://forum.netgate.com/topic/112527/playing-with-fq_codel-in-2-4/814

-

@Pentangle

Thanks for the specific recommendations.

I've done as you suggest and dropped/rebuilt my limiters and firewall rules.

I did make two changes to the firewall rules though (just had to...). I combined the WAN-in rules for IPv6 and IPv4 into one by setting Address Family to IPv4+IPv6; since no gateway definition is needed there, this seemed reasonable.

I also set Action = Match instead of Pass (with Quick not ticked) on all floating rules for this config.

None of these changes (yours or my deviations herein) has made any difference in reducing my bufferbloat latency results.

I do see where this config provides benefit, keeping latencies consistent (such as they are), when under high load.

I tested under load by adding a 10GB download using 100 sockets set to consume 240Mbit/s. This ran for about 5m39s from another LAN client to a remote internet service while I ran the dslreports speedtest twice from my primary desktop (24-core, 64GB RAM, 2x1gE bonded NICs). The two tests I ran under load (w/ & w/o limiters) were both completed during the 10GB download period.

WAN Limiter bandwidth was set at 415Mbit/s (down) and 22 Mbit/s (up) for these tests.

| Test # | Conditions | Bandwidth (Down/Up Mbit/s) | Latency (Down/Up ms) |
| --- | --- | --- | --- |
| 1 | lim: disabled, load: no | 452/22 | 89/65 |
| 2 | lim: disabled, load: no | 460/24 | 64/66 |
| 3 | lim: disabled, load: no | 472/24 | 68/66 |
| 4 | lim: disabled, load: no | 426/25 | 62/67 |
| 5 | lim: 415/22, load: no | 390/21 | 53/34 |
| 6 | lim: 415/22, load: yes | 116/16 | 42/33 |
| 7 | lim: disabled, load: yes | 191/21 | 96/62 |

Seems interesting that download latency was lower under load than without while the limiters were enabled. If this was at work... I would probably run multiple tests per each set of conditions and pull min/max/median/std.dev... but this data at least leads me to conclude that the limiters are functional and of benefit under load, even with the seemingly higher than desired latency floor.
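For what the "multiple tests per set of conditions" approach might look like, here is a small sketch using only the Python standard library. The input is the download latency from the four no-load runs with limiters disabled (tests 1 to 4); the `summarize` helper is a name invented for this example, and four samples is far too few to draw firm conclusions from.

```python
import statistics


def summarize(samples_ms):
    """Min, max, median and sample standard deviation of latency readings."""
    return {
        "min": min(samples_ms),
        "max": max(samples_ms),
        "median": statistics.median(samples_ms),
        "stdev": statistics.stdev(samples_ms),
    }


# Download latency (ms) from tests 1-4: limiters disabled, no load.
no_load_no_limiter_down_ms = [89, 64, 68, 62]

for name, value in summarize(no_load_no_limiter_down_ms).items():
    print(f"{name}: {value:.1f} ms")
```

Running the same summary for each set of conditions would show whether a single outlier such as the 89 ms reading is skewing the comparison.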

Is this really bufferbloat, with the limiters turned on, or simply the lag to whatever testing servers dslReports is picking? no-load latency dropped substantially with the limiters turned on; just not as low as desired.

With regards to the loss of maximum bandwidth (for the sake of stable latency) am I correct in my understanding that with this baseline, I can re-test with higher limiter bandwidth settings to figure out how high I can crank it up before my latency numbers become undesirably unstable?

-

@mind12 said in Playing with fq_codel in 2.4:

https://forum.netgate.com/topic/112527/playing-with-fq_codel-in-2-4/814

The floating rule (pass, quick) for IPv4 ICMP traceroute is set, as is the one for ICMP echoreq/echorep.

Traceroute from LAN clients is still not working properly.

What's next in how to triage this?

@mind12 @pentangle @TheNarc Thanks a LOT for your time. I appreciate it.

-

@fabrizior

I have just tested it, it also failed on one of my Windows clients.

I added the ICMP floating rule per the guide I sent you and it's working now:

Add quick pass floating rule to handle ICMP echo-request and echo-reply. This rule matches ping packets so that they are not matched by the limiter rules. See bug 9024 for more info.

Action: Pass

Quick: Tick Apply the action immediately on match.

Interface: WAN

Direction: any

Address Family: IPv4

Protocol: ICMP

ICMP subtypes: Echo reply, Echo Request

Source: any

Destination: any

Description: limiter drop echo-reply under load workaround

Click Save

-

@mind12 You are of course correct - typo from me, should be "match"

-

@mind12 "Traceroute needs another floating rule to work if you use a limiter:"

- This is why I chose tcp/udp rather than "any", since it was a problem with ICMP.

-

@fabrizior "None of these changes (yours or my deviations herein) has made any difference in reducing my bufferbloat latency results."

I did fear that that would be the case, since with a 450/20 connection you would have to be going some to continually saturate your internet connection. In which case, if your pfSense throughput isn't going anywhere near capacity whilst you're testing then the issue lies upstream of you, and there's not a lot you can do about that aside from shouting at your ISP.

-

@fabrizior "Is this really bufferbloat, with the limiters turned on, or simply the lag to whatever testing servers dslReports is picking? no-load latency dropped substantially with the limiters turned on; just not as low as desired."

It's bufferbloat, just not YOUR bufferbloat. Your internet connection between you and the next hop (which is likely to be the DSLAM or equivalent fibre concentrator in your ISP's phone exchange) is not now suffering bufferbloat to any great extent, but the pipe connecting the phone exchange to the wider internet is likely to have latency or oversubscription issues. If you can find a pingable next hop that doesn't incorporate all that additional latency then you could test to the nth degree, but for now it's probably pretty pointless.

"With regards to the loss of maximum bandwidth (for the sake of stable latency) am I correct in my understanding that with this baseline, I can re-test with higher limiter bandwidth settings to figure out how high I can crank it up before my latency numbers become undesirably unstable?"

You can test as much as you like. You will find that as you start specifying bandwidth limits that are close to the maximum the line can reliably tolerate, your latency will go down the toilet, so it's good to get to that point and then crank it back a notch so that you're never hitting that scenario.
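The "crank it up, then back it off a notch" procedure can be sketched as a simple loop. Everything here is an assumption for illustration: `measure_latency_ms` stands in for however you run a loaded test at a given limiter setting (in practice, by hand), and the 5 Mbit/s step and 30 ms jump are arbitrary example values rather than recommendations.

```python
def find_bandwidth_limit(measure_latency_ms, start_mbps, max_mbps,
                         step_mbps=5, spike_ms=30):
    """Raise the limit in steps until loaded latency jumps, then return
    the last setting that still behaved."""
    baseline = measure_latency_ms(start_mbps)
    last_good = start_mbps
    limit = start_mbps + step_mbps
    while limit <= max_mbps:
        if measure_latency_ms(limit) - baseline >= spike_ms:
            break  # latency went through the roof: stop and back off
        last_good = limit
        limit += step_mbps
    return last_good


def fake_line(limit_mbps):
    """Made-up line: latency is steady up to 440 Mbit/s, then jumps."""
    return 35 if limit_mbps <= 440 else 120


print(find_bandwidth_limit(fake_line, start_mbps=415, max_mbps=470))
```

Each "measurement" in real life is a full loaded speed test, so a coarse step first and a finer step near the breaking point saves a lot of time.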

-

@pentangle

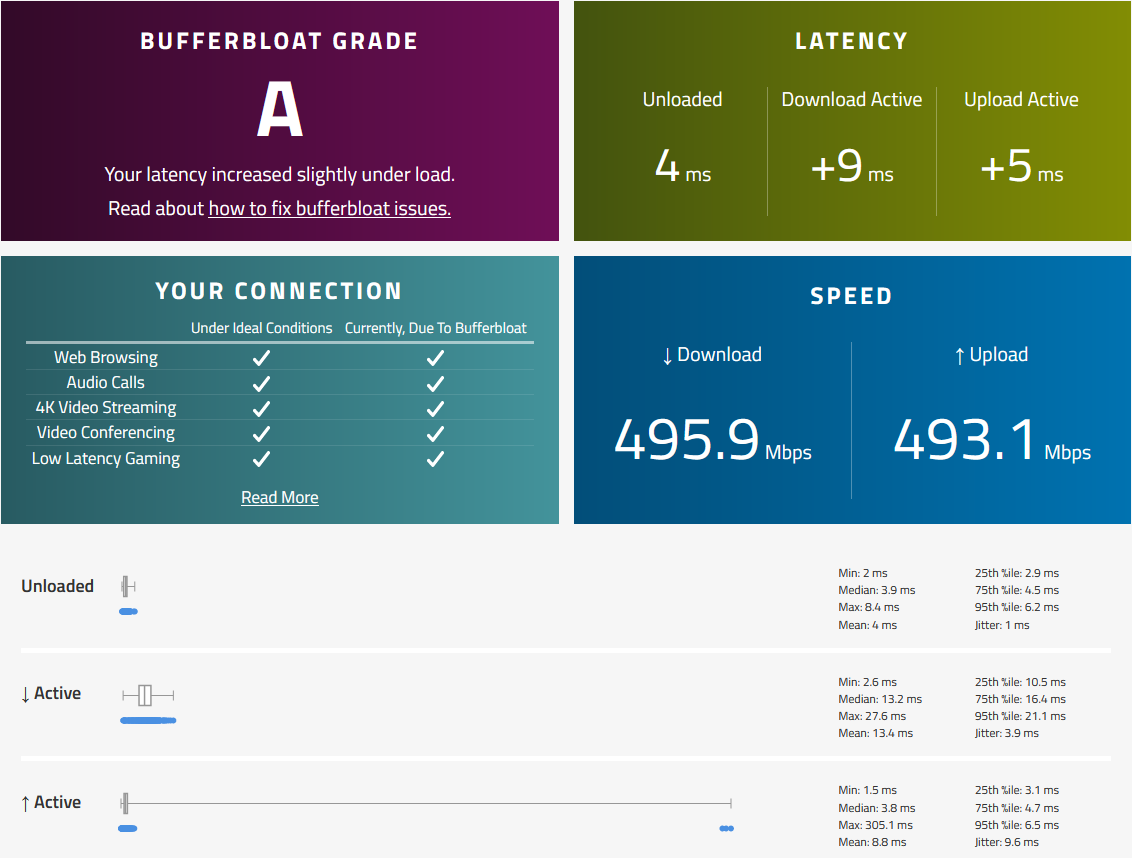

I have used your configuration and achieved an A grade. Thank you.

The DSLReports site gives me much lower speeds than speedtest.net with the same configuration, so I searched for another test site and found this one. It gave me speeds similar to speedtest.net and the same A grade.

https://www.waveform.com/tools/bufferbloat

Which configuration should I modify to achieve even better results?

My connection is a 150/10.

-

Can the developers comment when/if we will see support for BBR TCP Congestion Control in pfSense/OPNsense? I understand that it may be a feature of FreeBSD 13.x and if HardenedBSD is based on the same release level we should expect this to show up in HBSD 13.x as well when OPNsense integrates HBSD 13.x?

There is nothing official on this but I came across links like this

https://fasterdata.es.net/host-tuning/freebsd/

https://lists.freebsd.org/pipermail/freebsd-current/2020-April/075930.html

The FreeBSD list doesn't really refer to which version of FreeBSD with the non CC method.

Linux has had this integrated for quite some time, but there is no elegant front-end interface like pfSense or OPNsense for Linux; too bad neither was ported to Linux as well, with an nftables backend. If I build on Linux I have to do all the setup from scratch, like DNS, DHCP, firewalls, etc., and I'm not a big fan of the Debian model for network configs; I would much prefer the RHEL model.

I have a gigabit internet connection and they sync the GPON at 2.5Gbps which means that on upload I can actually exceed the provisioned upload rate causing packet loss, packet retransmissions, and performance degradation - thus not getting the provisioned upload rate.

I have a 1Gbps/750Mbps plan, but I should be able to get 1G/1G since they overprovision the line. Right now I usually get 930/660, but if I switch to a 1Gbps sync then I get 700/930, so it flips around. It seems realistic that I could get 1G/1G if I could prevent flooding using BBR on FreeBSD/HBSD under pfSense/OPNsense. I may consider the 1.5G/1G package one day, but the 1G plan is good for me, especially if I could get the full upload rate.

Some folks on a forum I hang out on show results using Linux with BBR when syncing at 1Gbps and 2.5Gbps on a 1.5Gbps/1Gbps plan.

This is what it looks like for me under various conditions. Performance loss is affected by latency.

| 1000 (No Throttle) | Latency | Down | Up |
| --- | --- | --- | --- |
| Bell Alliant - Halifax (3907) | 24 | 792 | 919 |
| Bell Canada - Montreal (17567) | 1 | 901 | 933 |
| Bell Canada - Toronto (17394) | 8 | 765 | 933 |
| Bell Mobility - Winnipeg (17395) | 30 | 874 | 913 |
| Bell Mobility - Calgary (17399) | 47 | 782 | 898 |

| 2500 (No Throttle) | Latency | Down | Up |
| --- | --- | --- | --- |
| Bell Alliant - Halifax (3907) | 24 | 1564 | 358 |
| Bell Canada - Montreal (17567) | 1 | 1638 | 933 |
| Bell Canada - Toronto (17394) | 8 | 1619 | 771 |
| Bell Mobility - Winnipeg (17395) | 30 | 1569 | 324 |
| Bell Mobility - Calgary (17399) | 47 | 1570 | 236 |

| 2500 (Throttled 1000 up) | Latency | Down | Up |
| --- | --- | --- | --- |
| Bell Alliant - Halifax (3907) | 24 | 1558 | 915 |
| Bell Canada - Montreal (17567) | 1 | 1650 | 931 |
| Bell Canada - Toronto (17394) | 8 | 1620 | 931 |
| Bell Mobility - Winnipeg (17395) | 30 | 1569 | 885 |
| Bell Mobility - Calgary (17399) | 47 | 1600 | 890 |

| 2500 (Throttled 1000 up +bbr) | Latency | Down | Up |
| --- | --- | --- | --- |
| Bell Alliant - Halifax (3907) | 24 | 1541 | 922 |
| Bell Canada - Montreal (17567) | 1 | 1639 | 940 |
| Bell Canada - Toronto (17394) | 8 | 1583 | 936 |
| Bell Mobility - Winnipeg (17395) | 30 | 1617 | 928 |
| Bell Mobility - Calgary (17399) | 47 | 1547 | 916 |

-

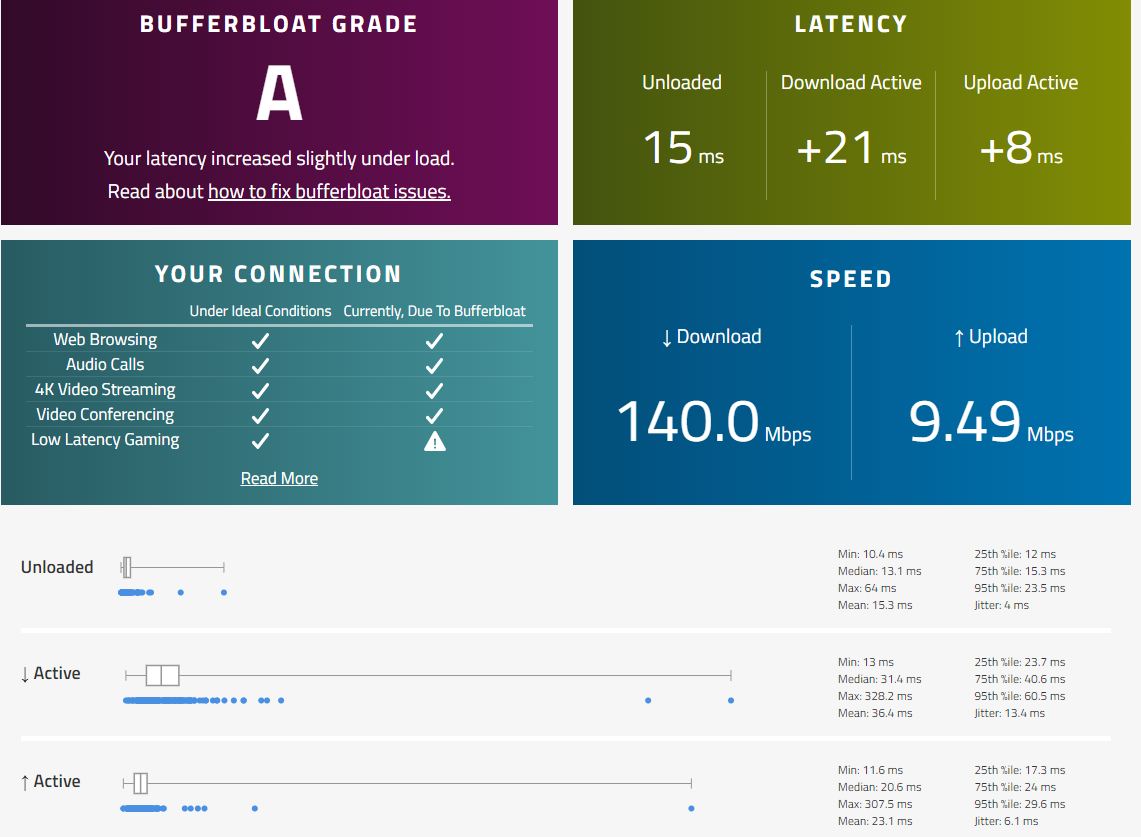

@mind12 I'd say you would now need to benchmark your ISP. By this I mean that your connection to the internet does not just consist of your connection to the hole in your wall, but also the structure of your ISP's network and its peering to the internet. As such, you may want to bring down your limiter bandwidth settings, just by maybe 5mbit/s each, and see again whether your latency increases by roughly +21ms and +8ms again. If it's constant then there's nothing much else you can do on your end of the link and that latency increase may be due to whatever's in your ISP's network or how they deal with your packets when they get them. Once you've done this you can slowly increase the bandwidth settings again until you get to a point whereby the latency goes through the roof. This would then be the speed at which you can send through your pfsense instance and you should then drop it back a bit to ensure your limiters can do their stuff as much as possible. Then once you've finished your testing you should be able to see whether your ISP is adding a reasonable amount of latency or not. Personally, from here I wouldn't be too unhappy with a 23ms upstream latency but a 36ms downstream latency looks a little suboptimal (although a lot depends upon a number of factors probably beyond your control).

-

@pentangle : your keyboard seems broken ...

-

@gertjan said in Playing with fq_codel in 2.4:

@pentangle : your keyboard seems broken ...

In what way?

-

@pentangle Thanks I will try this.

Those results are not that bad at all, I would just like to optimize my limiter as far as possible.

What if it doesn't change when I lower the bandwidth?

How will I know if my limiter is the bottleneck? The other advanced parameters could also affect the results, right? Queue length, limit, flows, etc.

-

@mind12 If the results don't change then you are within your bandwidth limits. The idea being that the limiters and FQ_CoDel need a certain amount of 'headroom' in order to operate (shuffling smaller packets to the front of the queue, etc), and so it'll operate well until it doesn't have enough 'headroom' to play with. You need to determine that headroom (bandwidth limit) and the easiest way to do it is to edge the bandwidth limits up until latency takes a nosedive at which point you know that your FQ_CoDel's efficiency is being impaired by the amount of headroom it has to play with, so you dial it back a notch and voila - the fastest throughput you could get whilst retaining low latency.

As regards other limits, I suggest you do a little reading on what those do for you. The settings I gave should be more than adequate for your connection - they work well with my 300/50 connection here.

-

@mind12 I drove myself nuts trying to tune the limiter & queue knobs at various limiter bandwidth settings on a 400/25 mbps service with and without load (100 sockets generating ~30+ MB/s download throughput).

What I’ve learned from folks here (thank you):

The only setting that induced definitive change in my test results was the bandwidth limit. The other settings recommended seem sufficiently high to allow proper functionality under load while varying the limiter bandwidth as suggested to identify the required headroom.

The general consensus seems to be that, once configured for appropriate headroom based on your provisioned rates, any variable and higher-than-desired latency results are likely induced somewhere upstream, with the ISP's equipment suffering from bufferbloat (or oversubscription).

If speed tests and bufferbloat latency numbers stay fairly stable with and without significant load running in parallel with the test, then your side of things is well-tuned.

-

I got this test result without playing with fq_codel.

I have a 500/500 connection.