IPsec over FiOS gigabit with AES-NI - Glory and flames, set me straight.

-

I was looking at some other websites and came across an iperf syntax that I tried. The result below is from a Windows PC at home to a Windows PC at work (across the VPN).

iperf command line was: iperf -c 172.16.1.117 -u -b 1000m

Results are pretty telling. I'm not sure what these switches do (-u says use UDP instead of TCP, and I don't really understand -b at all), but I'm getting full line speed. Hopefully this can tell us something that I can in turn use to tune my firewalls. If I lower -b to 900, 800, 700, the speed starts to decrease.

------------------------------------------------------------

Client connecting to 172.16.1.117, UDP port 5001

Sending 1470 byte datagrams, IPG target: 11.76 us (kalman adjust)

UDP buffer size: 208 KByte (default)

[ 3] local 192.168.0.55 port 58746 connected with 172.16.1.117 port 5001

[ ID] Interval Transfer Bandwidth

[ 3] 0.0-10.0 sec 1.11 GBytes 953 Mbits/sec

[ 3] Sent 810345 datagrams

I just did the same test, except with -b 3000m from LAN interface to LAN interface (on each router), and got 1.5 Gbps throughput. What's going on here? How do I unleash this beast? (no graph)

Bad command line, it never sent any data across the network.
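For anyone else puzzled by -b: with -u it sets the target UDP send rate, and the numbers in the -b 1000m output above are consistent with that. A minimal sketch that cross-checks them, assuming "1000m" means 1000 x 10^6 bits per second and that "GBytes" in the report is binary (2^30 bytes); all inputs are copied from the output, the function names are made up for illustration:

```python
# Cross-check of the iperf UDP client report (values copied from the output).
DATAGRAM_BYTES = 1470           # "Sending 1470 byte datagrams"
TARGET_BITS_PER_SEC = 1000e6    # -b 1000m, read here as 1000 * 10^6 bit/s (assumption)
DATAGRAMS_SENT = 810345         # "Sent 810345 datagrams"
INTERVAL_SEC = 10.0             # "0.0-10.0 sec"


def inter_packet_gap_us(datagram_bytes, target_bps):
    """Time between datagram starts needed to hit the target rate, in microseconds."""
    return datagram_bytes * 8 / target_bps * 1e6


def achieved_mbps(datagrams, datagram_bytes, seconds):
    """Send rate in Mbit/s implied by the datagram count."""
    return datagrams * datagram_bytes * 8 / seconds / 1e6


def transferred_gib(datagrams, datagram_bytes):
    """Total payload in GiB (2^30 bytes)."""
    return datagrams * datagram_bytes / 2**30


if __name__ == "__main__":
    print(f"IPG target : {inter_packet_gap_us(DATAGRAM_BYTES, TARGET_BITS_PER_SEC):.2f} us")
    print(f"Transfer   : {transferred_gib(DATAGRAMS_SENT, DATAGRAM_BYTES):.2f} GiB")
    print(f"Bandwidth  : {achieved_mbps(DATAGRAMS_SENT, DATAGRAM_BYTES, INTERVAL_SEC):.0f} Mbit/s")
```

Note that these are the client-side figures, i.e. what was sent. With UDP nothing is retransmitted, so the receiving side's report is what shows how much actually arrived.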

-

After reading a few more iperf threads I tried the -P option, which opens multiple streams to send data. From my computer at work I ran iperf -c 192.168.0.101 -P 3 (101 being my NAS on the other side of the VPN), and it fully saturated the line at 890 Mbps.

So what's that telling me? That my Windows file copies are single-stream, and 280+ Mbps is the most I'm going to get out of one stream (as one post suggests)? Are there copy programs that will do multiple streams? I've been searching and haven't come across anything.

My eventual need is to move data from the computer at work to the NAS on the other side of the VPN at line speed. iperf just showed I can do it from machine to NAS; now I just have to find a program that can make it happen.

Roveer

-

At this point I've been having a conversation with myself on this topic but I'm determined to provide some valuable information to someone who will inevitably come across the same dilemma that I have.

So the past few nights I've been doing a lot of reading: WAN accelerators, alternate protocols, etc. Tonight I came across an article about transferring data across IPsec tunnels. One of the items the author mentioned was the different speeds you get with different protocols, and one of those protocols was HTTP. Hmm. My NAS at home has an HTTP front end, and I remembered that it does some form of file transfer. I gave it a shot, uploading a 17.7 gig rar archive in 3 minutes and 11 seconds. Here's the tail end of the transfer. As you can see, it achieved full line rate, 100+ MBps.

I see there are a number of Windows programs out there that allow HTTP transfer. Hopefully I can find a command-line version, or better yet one that can actually map a drive or at least let me send files to my NAS. That would be super. This could be just what I'm looking for to finally saturate my IPsec VPN for file transfer. Sure beats a four-thousand-dollar WAN accelerator.
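As a sanity check on the units, here is a small sketch that turns the size and elapsed time quoted above into an average rate. It is only arithmetic on the two numbers from the post; whether "17.7 gig" means decimal gigabytes or binary gibibytes isn't stated, so both readings are shown, and the function name is just illustrative:

```python
def average_rate(size_bytes, seconds):
    """Return (megabytes per second, megabits per second) for a transfer."""
    bytes_per_sec = size_bytes / seconds
    return bytes_per_sec / 1e6, bytes_per_sec * 8 / 1e6


ELAPSED_SEC = 3 * 60 + 11  # 3 minutes 11 seconds

# Two readings of "17.7 gig" (assumption: the post does not say which).
decimal = average_rate(17.7e9, ELAPSED_SEC)        # 17.7 GB  = 17.7 * 10^9 bytes
binary = average_rate(17.7 * 2**30, ELAPSED_SEC)   # 17.7 GiB = 17.7 * 2^30 bytes

if __name__ == "__main__":
    print(f"decimal GB : {decimal[0]:.1f} MB/s = {decimal[1]:.0f} Mbit/s")
    print(f"binary GiB : {binary[0]:.1f} MB/s = {binary[1]:.0f} Mbit/s")
```

Either way the average comes out at roughly 93 to 100 megabytes per second, which is on the order of 740 to 800 megabits per second, so the figure is megabytes (MBps), not megabits.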

Roveer

-

I too have gigabit FiOS, with a site-to-site connection to another ISP that has gigabit only in the download direction; its upload is much lower, around 40 Mbps. Up until now I have been using OpenVPN because years ago it seemed to handle being behind NAT much better. Both my machines are i5s with AES-NI support. I can't seem to get over 160 Mbps throughput, so I am in the process of converting the link to IPsec to see if there is a speed increase. When I'm finished I will report my results back here. I don't expect to get the full line rate, but if I can get 50% of the link speed I will be happy.

-

The issue you have here is SMB in general and how Windows works with TCP comms. With TCP you have a window size, which in principle is how much data can be sent before waiting for an ACK.

So there is a formula, based on latency, for the maximum transmission speed you can achieve. iperf will tell you what the line and your kit can do, but this will not translate to SMB throughput.

HTTP, FTP, etc. do not exhibit these issues, so if you want to transfer files, use a method other than SMB.
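The formula being referred to is presumably the usual window-over-latency bound: a single TCP stream cannot exceed window size divided by round-trip time. A minimal sketch of it follows; the RTT values are hypothetical, since nobody in the thread has posted the actual latency across the tunnel, and the function name is made up for illustration:

```python
def max_tcp_mbps(window_bytes, rtt_ms):
    """Upper bound on single-stream TCP throughput: window / RTT, in Mbit/s."""
    return window_bytes * 8 / (rtt_ms / 1000) / 1e6


if __name__ == "__main__":
    # Hypothetical round-trip times in milliseconds (not measured in this thread).
    for rtt_ms in (1, 5, 10, 20, 50):
        unscaled = max_tcp_mbps(65535, rtt_ms)       # classic 16-bit window, no scaling
        two_mib = max_tcp_mbps(2 * 2**20, rtt_ms)    # a 2 MiB window
        print(f"RTT {rtt_ms:>2} ms: 64 KiB window <= {unscaled:7.1f} Mbit/s, "
              f"2 MiB window <= {two_mib:8.1f} Mbit/s")
```

The point of the table it prints is that the ceiling falls in direct proportion to latency, regardless of how fast the line is, which is why an iperf number with several parallel streams can look fine while one windowed stream does not.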

-

I tried to get SMB to work over IPsec, but I wasn't able to get anything higher than 500 Kb/s, so I switched back to OpenVPN.

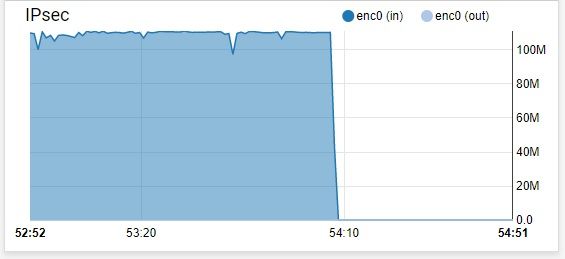

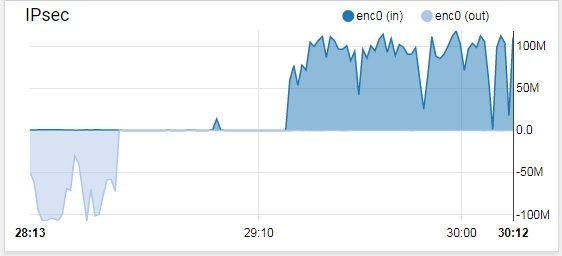

Using OpenVPN, and taking the information you gave me, I tried various other protocols like SFTP and FTP, and you were correct, sir: I was able to get over 400 Mbps. I can see TCP ramp up to over 400, almost 500 Mbps, but for whatever reason TCP backs off and then starts to ramp up again. I know that is how TCP works, but it seems to back off hard when it should probably keep ramping up until packets are dropped or arrive out of sequence, and then back off gradually rather than hard (see pic). I have my send and receive buffers set to 2 MiB on both sides of the VPN tunnel. I will report back if I can get more consistent speeds. I will post the results in the OpenVPN area and provide a link to it here if anyone is interested.

-

Let me preface this by saying it is more generic WAN advice than anything specific to pfSense. I have run across two factors to consider on a WAN IPsec link: the previously mentioned window size, and Path MTU. And window size hits you twice with SMB.

First, TCP window size. A TCP connection can't go any faster than the product of the TCP window size and 1/RTT, no matter how big the pipe is. RFC 1323 is a good read on this and provides the solution (TCP window scaling). Dig some more to find out how to make sure this is enabled on both endpoints at the OS level. This is an effect of the WAN RTT, not IPsec. The pfSense boxes and any other routers in between don't come into play on this issue.
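To put numbers on that, here is a rough sketch of how big a window a given link needs (the bandwidth-delay product) and what window-scale shift that implies. The 16-bit window field and the maximum shift of 14 come from RFC 1323; the 1000 Mbit/s and 20 ms figures are placeholders, not measurements from this thread, and the function names are made up:

```python
import math

MAX_UNSCALED_WINDOW = 65535  # largest value of the 16-bit TCP window field
MAX_SHIFT = 14               # largest window-scale shift count RFC 1323 allows


def bdp_bytes(link_mbps, rtt_ms):
    """Bandwidth-delay product: bytes that must be in flight to fill the link."""
    return math.ceil(link_mbps * 1e6 / 8 * rtt_ms / 1000)


def min_window_shift(window_bytes):
    """Smallest scale shift whose maximum window covers window_bytes."""
    for shift in range(MAX_SHIFT + 1):
        if (MAX_UNSCALED_WINDOW << shift) >= window_bytes:
            return shift
    raise ValueError("window larger than TCP window scaling can express")


if __name__ == "__main__":
    link_mbps, rtt_ms = 1000, 20  # placeholder values (assumption)
    needed = bdp_bytes(link_mbps, rtt_ms)
    print(f"{link_mbps} Mbit/s at {rtt_ms} ms RTT needs about {needed} bytes in flight")
    print(f"minimum window-scale shift: {min_window_shift(needed)}")
```

With no scaling at all (shift 0) the window tops out at 65535 bytes, which is why both endpoints need the option enabled before a single stream can fill a fast, long path.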

Also, SMB has its own application window size, which really kills you as RTT increases over the WAN, for the same reasons. I'm not certain whether this was ever addressed in later versions of SMB, as we threw in some Cisco WAAS devices to work around that protocol long ago, so I never looked at it again. Likewise, this has nothing to do with pfSense or IPsec.

Lastly, Path MTU. IPsec reduces the MTU over the WAN, more so if you are using tunnel mode, which you probably are. If the Path MTU discovery methods aren't working correctly, you can lose speed to fragmentation. You can also have trouble connecting at all if the application is requesting don't fragment (DF). I recently encountered this between an AWS EC2 instance and an on-premises server, going over the VPN. It couldn't even complete an SSL/TLS negotiation, as the cert exceeded the MTU and they were using DF. Another symptom of this shows up with FTP: if you can authenticate the FTP session, but ls, dir and transfers fail, that can be caused by MTU problems.
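For a feel of how much tunnel mode costs, here is a back-of-the-envelope sketch of the largest inner packet that fits in one outer packet. The defaults assume ESP tunnel mode over IPv4 with AES-CBC (16-byte IV and block size) and HMAC-SHA1-96 (12-byte ICV); the thread doesn't say which proposal is actually in use, so treat these as assumptions and plug in your own values:

```python
def esp_tunnel_inner_mtu(link_mtu=1500, outer_ip=20, esp_header=8,
                         iv=16, icv=12, block=16, nat_t=False):
    """Largest inner IP packet that fits in one ESP tunnel-mode packet.

    Defaults are an assumed AES-CBC + HMAC-SHA1-96 proposal over IPv4.
    """
    fixed = outer_ip + esp_header + iv + icv
    if nat_t:
        fixed += 8  # UDP header added by NAT traversal encapsulation
    room = link_mtu - fixed   # bytes left for the encrypted part
    room -= room % block      # encrypted part must be a whole number of blocks
    return room - 2           # ESP trailer: pad-length and next-header bytes


def tcp_mss(inner_mtu):
    """TCP payload per packet with 20-byte IPv4 and 20-byte TCP headers."""
    return inner_mtu - 40


if __name__ == "__main__":
    for label, kwargs in (
        ("AES-CBC/SHA1", {}),
        ("AES-CBC/SHA1 + NAT-T", {"nat_t": True}),
        # Assumed AES-GCM layout: 8-byte IV, 16-byte ICV, 4-byte alignment.
        ("AES-GCM", {"iv": 8, "icv": 16, "block": 4}),
    ):
        mtu = esp_tunnel_inner_mtu(**kwargs)
        print(f"{label:<22} inner MTU {mtu}, TCP MSS {tcp_mss(mtu)}")
```

Anything larger than the inner MTU either gets fragmented or, with DF set, dropped with an ICMP "fragmentation needed" message, which is where working Path MTU discovery comes in.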

Path MTU discovery is often broken by blocking the ICMP unreachable messages (in the name of "security"…) or by tunnel endpoints that don't properly set up the tunnel MTU to generate the ICMP unreachables. I don't know one way or the other about pfSense's handling of tunnel MTU, but hopefully someone who does will chime in. A quick test for this would be to lower the interface MTU on both your application endpoints to, say, 1300 and see if that impacts the throughput. You can also use ping with different sizes and the DF bit set to discover the current path MTU. The lowered endpoint MTU is a quick and dirty hack to work around this problem. A slightly less dirty hack is to add MTU information to your routing table manually. I've done this in Linux, but never looked at doing it in Windows or BSD. The best solution is to get Path MTU Discovery working properly.
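The ping-with-DF test can be automated with a binary search over payload sizes. The sketch below is one way to do it, not a tested tool: the ping flags are the usual ones for Windows (-f -l), Linux iputils (-M do -s) and BSD-style ping (-D -s), and treating the exit status as "the packet fit" is an assumption that may not hold on every platform, in which case the output would need parsing instead. All function names are made up for illustration.

```python
import platform
import subprocess

ICMP_OVERHEAD = 28  # 20-byte IPv4 header + 8-byte ICMP echo header


def df_ping_command(host, payload, system=None):
    """Build a one-shot ping command with the Don't Fragment bit set."""
    system = system or platform.system()
    if system == "Windows":
        return ["ping", "-f", "-l", str(payload), "-n", "1", host]
    if system == "Linux":
        return ["ping", "-M", "do", "-s", str(payload), "-c", "1", host]
    # BSD-style ping (FreeBSD, macOS)
    return ["ping", "-D", "-s", str(payload), "-c", "1", host]


def ping_fits(host, payload):
    """True if a DF ping of this payload size got a reply.

    Assumption: exit status 0 means success on this platform.
    """
    result = subprocess.run(df_ping_command(host, payload), capture_output=True)
    return result.returncode == 0


def find_path_mtu(fits, low=548, high=1472):
    """Binary-search the largest payload for which fits(payload) is true.

    Returns the path MTU (payload + headers), or None if even `low` fails.
    """
    if not fits(low):
        return None
    while low < high:
        mid = (low + high + 1) // 2
        if fits(mid):
            low = mid
        else:
            high = mid - 1
    return low + ICMP_OVERHEAD
```

Usage would be something like `find_path_mtu(lambda size: ping_fits("172.16.1.117", size))`, with the address replaced by a host on the far side of the tunnel. If the result is well below 1500 and lowering the endpoint MTU to match changes the throughput, Path MTU discovery is a likely suspect.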

-

@Jed:

I thought about a lot of what you're saying, but reducing the MTU between both tunnels doesn't seem to help. I'm wondering why this problem affects IPsec tunnels but not OpenVPN tunnels, to the extent that on OpenVPN I can at least get 100 Mbps while on IPsec I'm getting 50 Kbps. I've set up many IPsec tunnels, so I'm almost certain I have it configured correctly. I too am hoping something can be done to make SMB work better over these links. I'm not sure if this is something Microsoft needs to address, something that pfSense can fix, or maybe an all-of-the-above deal.

-

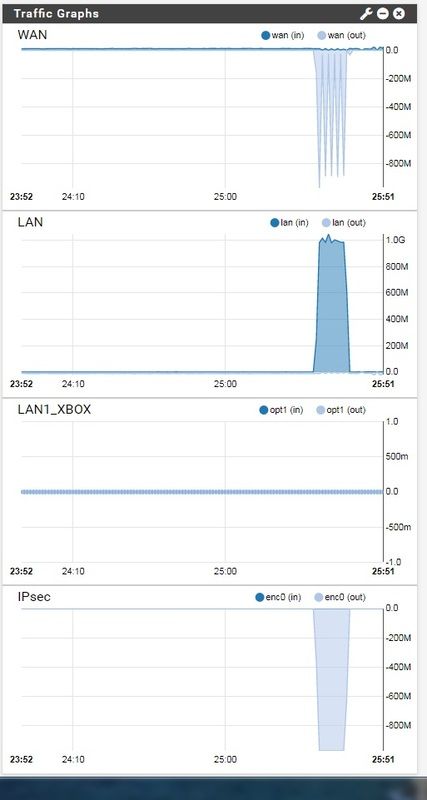

I think the answer to all of my questions on saturating my 1Gb IPsec link was in front of me all of the time: SMB3. I had been doing some 10GB testing, and not until I got to Win10 (Win8.1 does this too) did I get the advantages of SMB3, which among other things does multiple connections. The dips you see in the graph are most likely the crappy PC I had on one side of the VPN trying to do Win10 updates at the same time I was uploading. I can hear the HD grinding away right now.

-

Is that 100 MBps or 100 Mbps?