IPsec routed vti: phase2 not renewed

-

Dear all,

I'm facing a problem with a routed IPsec VTI interface.

I followed the routed IPsec hangout and tried a setup.

The VPN starts correctly, and phase1 and phase2 both establish.

The configuration looks fine, but once the phase2 lifetime expires, phase2 is not renewed and it is not possible to start phase2 again. Can someone give me advice about this problem?

Here are the details.

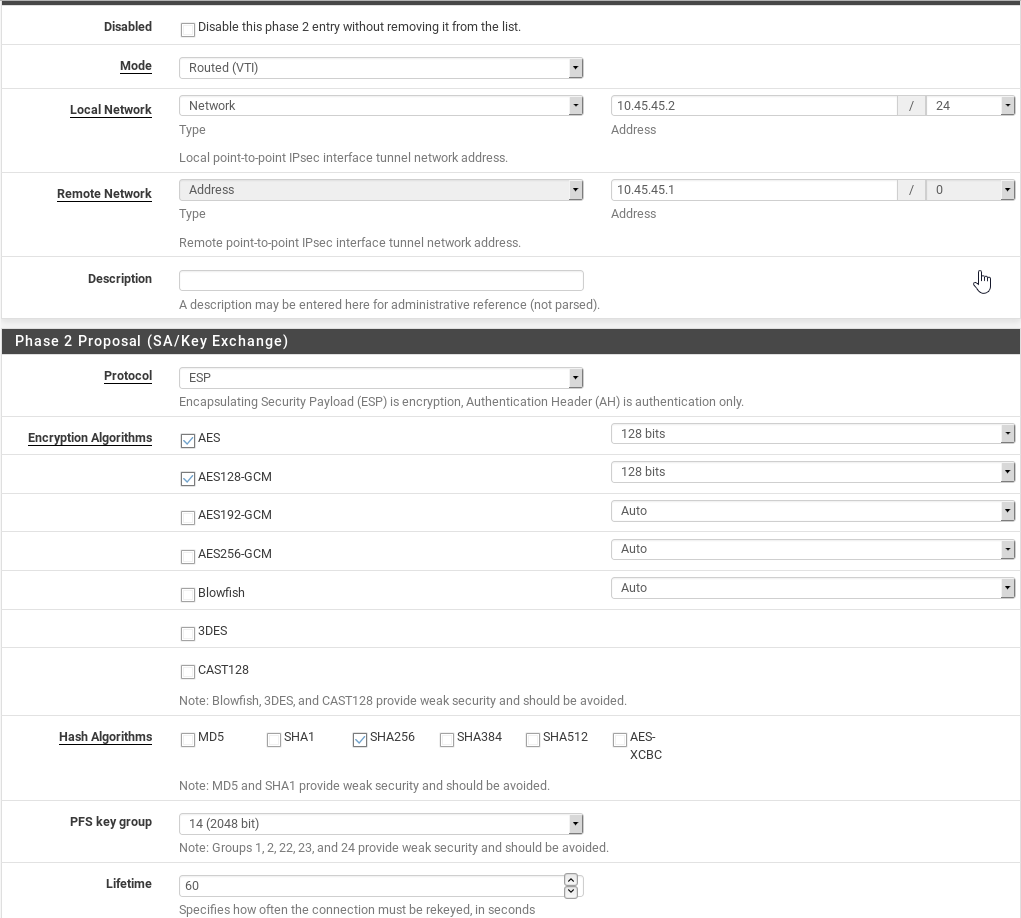

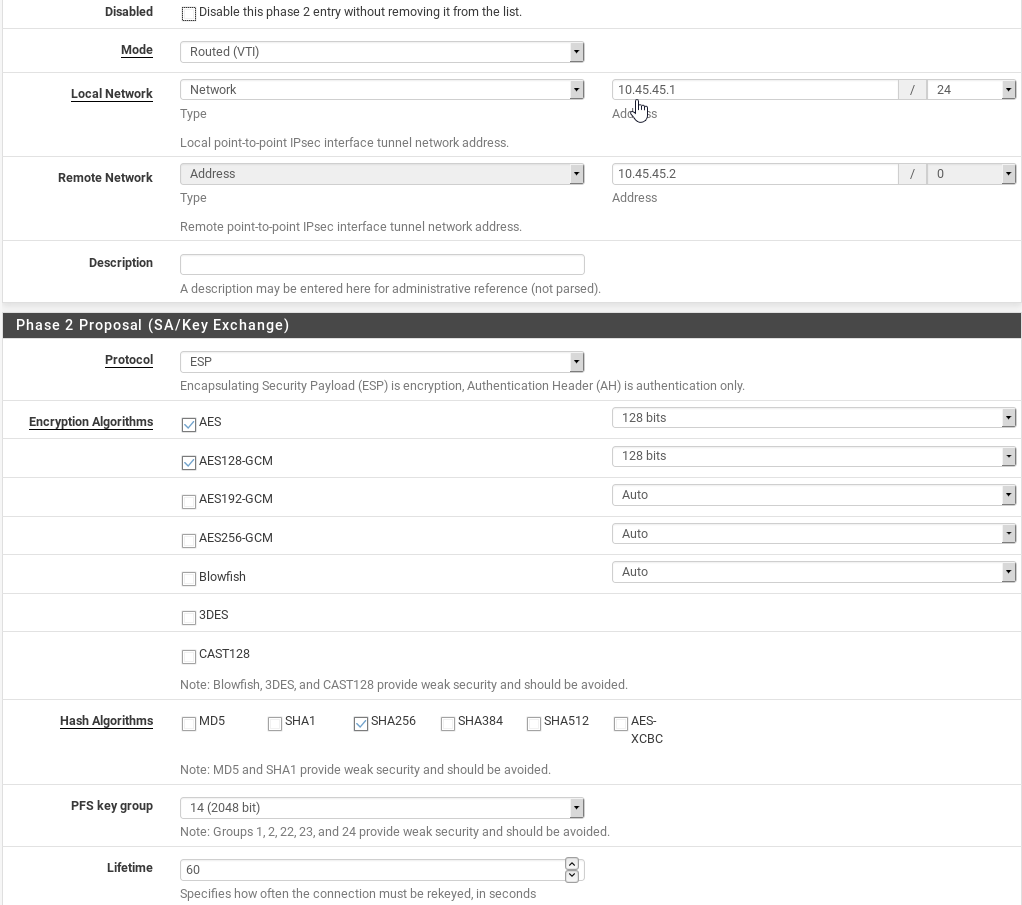

(in the screenshot here the lifetime period is set to 60 sec instead of 3600 to make testing easier)

All of the devices run pfSense 2.4.4-p3.

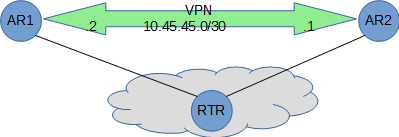

AR1

WAN 15.0.0.105/24

ipsec 10.45.45.2/30

RTR (only for internet simulation)

WAN1 15.0.0.100/24

WAN2 14.0.0.100/24

AR2

WAN 14.0.0.1/24

ipsec 10.45.45.1/30

RTR is a pfSense with permit any any (internet connection)

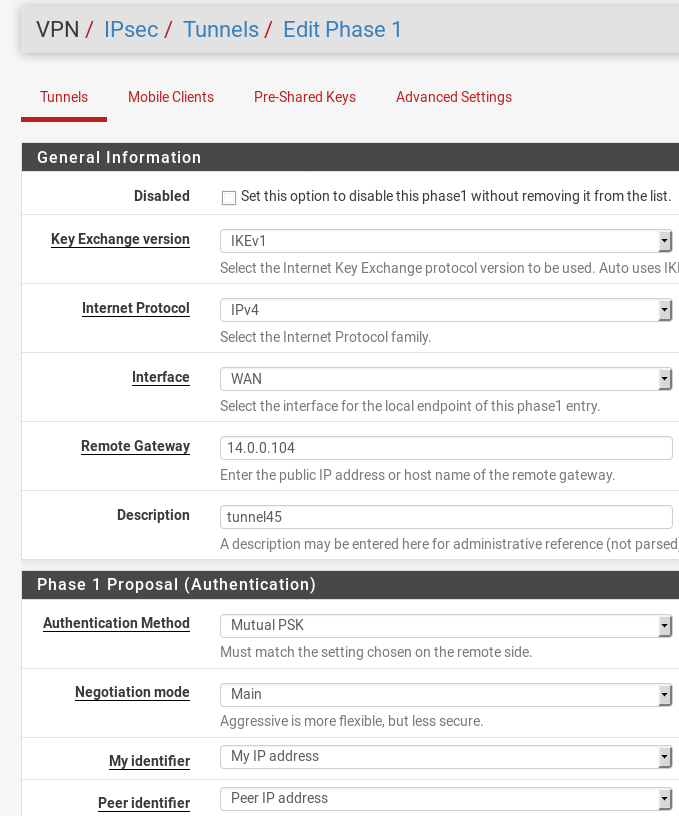

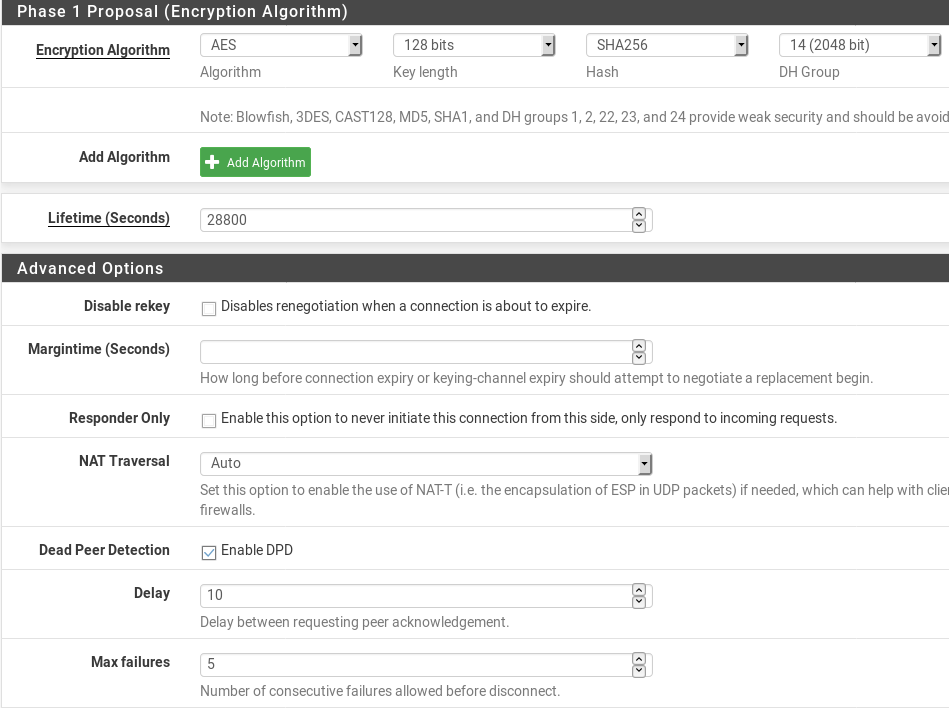

Here is phase1 from AR1

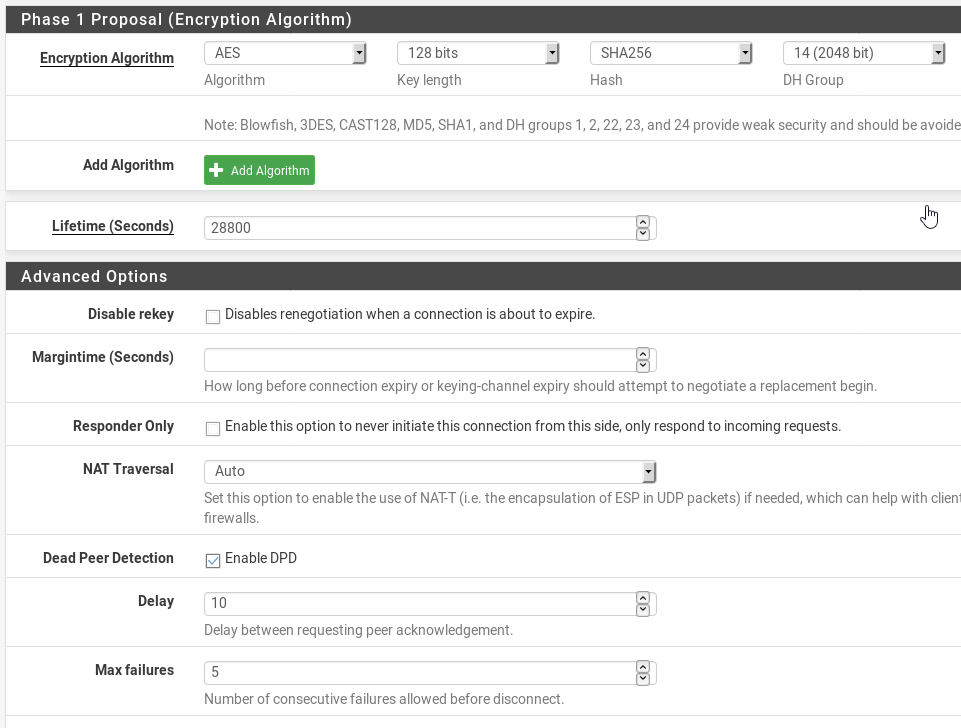

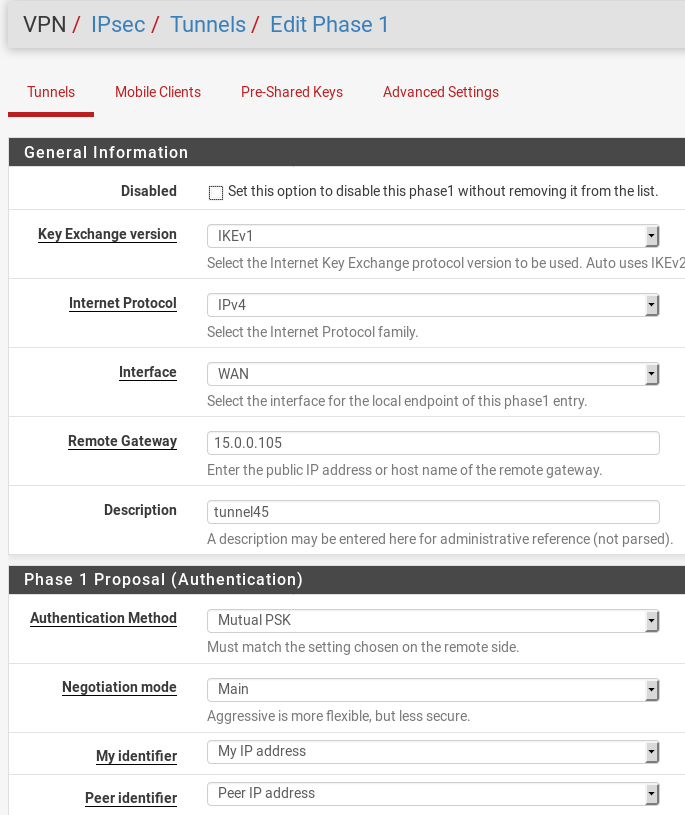

Here is phase1 from AR2

Here is Phase2 from AR1

Here is Phase2 from AR2

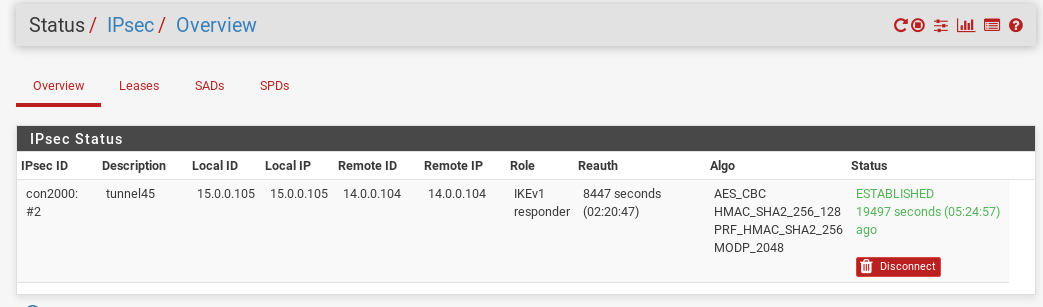

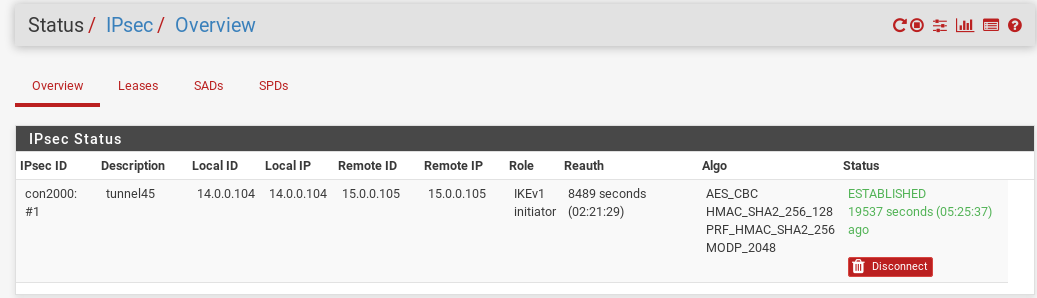

VPN Status on AR1

VPN status on AR2

-

Hi, you set the tunnel network to /24 instead of /30. You could also try to assign the interface and to disable gateway monitoring (pfSense in some sense treats a VTI always as assigned, as you will notice when you try to disable a phase, but for configuration purposes you have to assign it explicitly).
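A quick way to sanity-check the tunnel addressing is a minimal sketch like the one below, using Python's standard `ipaddress` module. The addresses are the ones from the first post; the helper name `same_tunnel_network` is only illustrative and not part of pfSense.

```python
import ipaddress

# Tunnel endpoint addresses as listed in the first post.
ar1 = ipaddress.ip_interface("10.45.45.2/30")
ar2 = ipaddress.ip_interface("10.45.45.1/30")

def same_tunnel_network(local, remote):
    """Return True when both endpoints share the same network and prefix length."""
    return local.network == remote.network

# A /30 leaves exactly two usable host addresses, one per tunnel end.
print(ar1.network, [str(h) for h in ar1.network.hosts()])

# Both ends configured as /30: the networks match.
print(same_tunnel_network(ar1, ar2))

# One end left at /24 (the mismatch pointed out above): the networks differ.
wrong = ipaddress.ip_interface("10.45.45.2/24")
print(same_tunnel_network(wrong, ar2))
```

This only checks that the two ends agree on the transit network; it says nothing about whether the mismatch is what breaks the renewal.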

-

Thanks Abbys for the suggestion: changing the tunnel network from /24 to /30 had no effect.

I'm currently trying with gateway monitoring disabled.

I will keep you updated.

-

It seems to work correctly after disabling gateway monitoring: why does this happen?

-

I don't know, but the VTI feature seems a bit unstable, apparently due to how pfSense treats assigned interfaces. I experienced this in a BGP setup, where it is not only required to disable gateway monitoring but also to use a loopback IP in order to prevent the BGP session from dying.

You might be lucky having pfSenses on both ends, because I also see some occasional packet drops to Azure and Cisco ASA VPNs. Various MTU/MSS settings did not help so far.

-

Hi Abbys,

thanks for the info.

I'm sorry to report that the setup is not that stable: the renewal of phase2 is still a problem (it now fails after a larger number of renewals).

In fact, I'm trying to configure OSPF dynamic routing in this setup (3 sites with 3 pfSense boxes), but until I get a stable VPN connection I cannot proceed.

Can you explain the part about the loopback IP for the BGP session?

Regards.

-

That is strange, because I also had 3 pfSenses in IPsec VTI configuration and OSPF was quite solid. Only due to Cisco and Azure (they don't support OSPF via VTI) I switched to BGP. The hybrid OSPF/BGP setup is cumbersome, if you have to filter some routes.

Anyway, to set up your pfSenses with BGP, you should create loopback IPs. That's the Firewall menu > Virtual IPs. Pick private IPs that you don't use anywhere else and assign them to the loopback interface.

If you only have one connection between each pair of pfSenses, then one virtual IP on each appliance is enough.

You then configure the remote VIPs as BGP neighbors and in the neighbor settings you choose the local VIP as outgoing interface.

Finally, you have to create a static route to each remote VIP, using the gateway of the respective (assigned) VTI.

So, the BGP traffic finds its way between the VIPs over static routes and should only be affected by "real" connection loss. The other traffic is routed via the BGP routes.

-

Side note: I use IKEv2 for the entire installation, but it should not make any difference.

-

I can confirm that the problem was solved after removing gateway monitoring.

I also switched to IKEv2 and set the MTU on the VTI interface to a lower value (because of the OSPF advertisements going into the IPsec tunnel).

Many thanks to Abbys.

-

I see a similar problem with tunnels to AWS, but in this case I've already disabled gateway monitoring so I'm not sure what else I could do.

I have a pair of IPSEC tunnels configured (as per AWS recommendations), and OpenBGPD for failover which is working fine.

After a couple of days, one of the tunnels goes down - and I get an E-mail from AWS saying I'm running non-redundantly. At this point, I find that one of the tunnels does not respond to ping:

    [2.4.4-RELEASE][admin@fw1.int.example.net]/root: ping -c3 169.254.23.9
    PING 169.254.23.9 (169.254.23.9): 56 data bytes
    64 bytes from 169.254.23.9: icmp_seq=0 ttl=254 time=10.560 ms
    64 bytes from 169.254.23.9: icmp_seq=1 ttl=254 time=10.756 ms
    64 bytes from 169.254.23.9: icmp_seq=2 ttl=254 time=10.587 ms
    --- 169.254.23.9 ping statistics ---
    3 packets transmitted, 3 packets received, 0.0% packet loss
    round-trip min/avg/max/stddev = 10.560/10.634/10.756/0.087 ms
    [2.4.4-RELEASE][admin@fw1.int.example.net]/root: ping -c3 169.254.22.149
    PING 169.254.22.149 (169.254.22.149): 56 data bytes
    --- 169.254.22.149 ping statistics ---
    3 packets transmitted, 0 packets received, 100.0% packet loss
    [2.4.4-RELEASE][admin@fw1.int.example.net]/root:

Doing these test pings by hand does not bring up the failed tunnel.

In the GUI, looking under Status > IPSec, it showed AWS tunnel 1 as connected and AWS tunnel 2 as disconnected - with a green "Connect" button next to it. Clicking this button makes the tunnel come up immediately. So there's something preventing the tunnel from auto-establishing.
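To catch the dead tunnel before the AWS e-mail arrives, the manual ping check could be scripted. The following is only a sketch: it parses the summary line in the format shown in the ping output above, and the names `SUMMARY` and `tunnel_is_down` are made up for illustration. How the output is collected (cron, a monitoring agent, etc.) and what to do once a tunnel is flagged are left open.

```python
import re

# Matches the summary line printed by ping, in the format shown above.
SUMMARY = re.compile(
    r"(\d+) packets transmitted, (\d+) packets received, ([\d.]+)% packet loss"
)

def tunnel_is_down(ping_output: str) -> bool:
    """Return True when the ping summary reports that no replies came back."""
    match = SUMMARY.search(ping_output)
    if match is None:
        raise ValueError("no ping summary line found")
    received = int(match.group(2))
    return received == 0

# Summary lines copied from the two pings above.
tunnel1 = "3 packets transmitted, 3 packets received, 0.0% packet loss"
tunnel2 = "3 packets transmitted, 0 packets received, 100.0% packet loss"

print(tunnel_is_down(tunnel1))  # False
print(tunnel_is_down(tunnel2))  # True
```

Treating only total loss as "down" is a deliberate simplification; occasional drops on a working tunnel would otherwise raise false alarms.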

I created all the tunnel parameters based on what AWS put in their settings file (downloaded the "generic" version):

- Phase 1
  - IKEv1
  - IPv4
  - Main mode
  - AES, 128 bits, SHA1, DH group 2
  - DPD enabled (10 secs, 3 failures)
  - Lifetime 28800
- Phase 2
  - ESP
  - AES, 128 bits, SHA1, PFS DH group 2
  - Lifetime 3600

Any ideas?


-

What is the kernel route to 169.254.22.149?

-

@Abbys: luckily I still have the netstat output from the time the link was down:

    [2.4.4-RELEASE][admin@fw1.int.example.net]/root: netstat -rn | grep 169.254.22
    169.254.22.149     link#27            UH       ipsec500
    169.254.22.150     link#27            UHS           lo0

It's exactly the same now that the tunnel is up, except BGP has also installed a route to our AWS address space (10.30/16):

    [2.4.4-RELEASE][admin@fw1.int.example.net]/root: netstat -rn | grep 169.254.22
    10.30.0.0/16       169.254.22.149     UG1      ipsec500
    169.254.22.149     link#27            UH       ipsec500
    169.254.22.150     link#27            UHS           lo0

(The interface is actually ipsec5000, it's just been truncated in netstat output)
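For comparing the two routing-table snapshots, a small sketch like this lists which destinations are routed via the tunnel peer. It assumes the four-column lines (destination, gateway, flags, interface) seen in the grep output above; `destinations_via` is a hypothetical helper name.

```python
def destinations_via(netstat_output: str, gateway: str):
    """Return the destinations whose gateway column equals the given address."""
    dests = []
    for line in netstat_output.splitlines():
        fields = line.split()
        # Expect: destination, gateway, flags, interface.
        if len(fields) >= 4 and fields[1] == gateway:
            dests.append(fields[0])
    return dests

# Route lines copied from the two netstat outputs above.
TUNNEL_DOWN = """\
169.254.22.149 link#27 UH ipsec500
169.254.22.150 link#27 UHS lo0
"""
TUNNEL_UP = """\
10.30.0.0/16 169.254.22.149 UG1 ipsec500
169.254.22.149 link#27 UH ipsec500
169.254.22.150 link#27 UHS lo0
"""

print(destinations_via(TUNNEL_DOWN, "169.254.22.149"))  # []
print(destinations_via(TUNNEL_UP, "169.254.22.149"))    # ['10.30.0.0/16']
```

The only difference between the two states is the BGP-learned route, which matches the observation that the connected routes for the VTI stay in place while the tunnel is down.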