Packet loss and high response time from LAGG to LAGG

-

Below is quite the read. Please ask me to clarify if anything is not explained properly. I am a little confused and desperate for help.

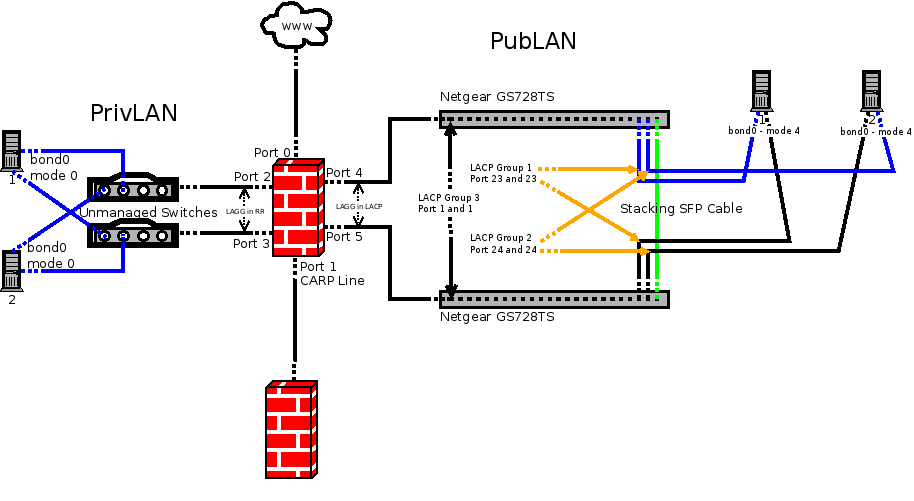

I have two stacked Netgear GS728TS switches. They stack using the included 1/2.5G SFP stacking cable. I have two LAGG groups set up on different networks coming off the pfSense box: one is PrivLAN and the other is PubLAN. Each has its own pair of switches for redundancy. PrivLAN uses two Cisco SMB switches that are NOT managed. PubLAN uses the two Netgear GS728TS switches in a stacked, managed configuration. The servers connected to the switches run CentOS 6, and the OS handles the bonding of their interfaces.

Here are the scenarios I tested:

- A server with bonded interfaces in the PrivLAN pings a server with bonded NICs in the PubLAN: high response time and packet loss.
- A server with bonded interfaces in the PubLAN pings a server with bonded NICs in the PrivLAN: high response time and packet loss.
- A server without bonded interfaces in the PubLAN pings a server with bonded NICs in the PrivLAN: no packet loss and low response time.
- A server with bonded interfaces in the PrivLAN pings a server without bonded NICs in the PubLAN: no packet loss and low response time.

In all scenarios, pinging between servers with bonded interfaces within the same LAN was fine: no packet loss and low response time.
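To compare these scenarios side by side, it can help to reduce each ping run to a single line. The sketch below is a hypothetical helper (`summarize_ping` is not from the original post) and assumes the summary format printed by Linux iputils `ping -q`; the BSD-style `round-trip` line is also accepted.

```shell
#!/bin/sh
# Hypothetical helper: condense a ping summary read on stdin into one line.
# Assumes the Linux iputils ping summary format, e.g.
#   50 packets transmitted, 47 received, 6% packet loss, time 49064ms
#   rtt min/avg/max/mdev = 0.210/0.305/0.512/0.061 ms
summarize_ping() {
    awk '
        # Loss line: keep the field that ends in a percent sign
        /packet loss/ { for (i = 1; i <= NF; i++) if ($i ~ /%$/) loss = $i }
        # RTT line: the fourth field is min/avg/max/mdev, keep the average
        /^rtt|^round-trip/ { split($4, r, "/"); avg = r[2] }
        # With 100% loss there is no RTT line, so report n/a instead
        END {
            if (avg == "") avg = "n/a"; else avg = avg "ms"
            printf "loss=%s avg=%s\n", loss, avg
        }
    '
}
```

Usage, with a placeholder address: `ping -c 50 -q <target-ip> | summarize_ping` prints something like `loss=6% avg=0.305ms`.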

Believe it or not, I tried CentOS bonds in every mode (0-6) and pfSense LAGG groups in all applicable modes. The number of combinations is crazy and brain-frying.

See the image at the top of this post.

-

I'm starting to think that the round-robin LAGG (on the PrivLAN side) is causing the issue. I will switch it to active/backup mode and re-test. Any thoughts on this, or any other ideas?

-

Have you found a solution?

I'm seeing the same problems when bonding interfaces for an OpenVPN bridge. I have a topic about this problem running here:

https://forum.pfsense.org/index.php?topic=72018

-

Sorry that I have not replied sooner. I didn't get an email about your response. My issue was with the way my CentOS bonds were configured.

In CentOS bonding, if you configure a second, third, etc. bond the same way as the first, its bonding options are silently ignored. You need to give the OS the bond options in a different way.

A single bond (and maybe multiple bonds of the same mode) can be configured entirely with the bonding options in bonding.conf.
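Because the extra bonds' options are dropped without any warning, it is worth checking which mode the kernel actually applied to each bond. The sketch below assumes the standard Linux bonding driver, which writes one status file per bond under `/proc/net/bonding/` containing a `Bonding Mode:` line; the function name `show_bond_modes` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print the bonding mode the kernel applied to each bond.
# Assumes the Linux bonding driver's status files in /proc/net/bonding/.
show_bond_modes() {
    dir="${1:-/proc/net/bonding}"   # optional argument: alternate directory to read
    for f in "$dir"/*; do
        [ -f "$f" ] || continue      # skip the unexpanded glob when no bonds exist
        # Keep only the text after "Bonding Mode: "
        mode=$(sed -n 's/^Bonding Mode: //p' "$f")
        echo "$(basename "$f"): $mode"
    done
}
```

For example, a bond set to `mode=4` should report `IEEE 802.3ad Dynamic link aggregation` and one set to `mode=2` should report `load balancing (xor)`. If every bond reports the same mode as the first, the later bonds' options were probably not applied.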

With multiple bonds, the options go in each bond's own config file under /etc/sysconfig/network-scripts, as shown below.

Step 1: Tell the OS about the bonds (do NOT put bonding options here).

/etc/modprobe.d/bonding.conf

```
alias bond0 bonding
alias bond1 bonding
```

Step 2: Configure each bond interface with its own bonding options.

/etc/sysconfig/network-scripts/ifcfg-bond0

```
DEVICE=bond0
ONBOOT=yes
BOOTPROTO=none
IPADDR=192.168.35.13
NETMASK=255.255.255.0
GATEWAY=192.168.35.1
USERCTL=no
BONDING_OPTS="miimon=100 mode=4 lacp_rate=1"
```

/etc/sysconfig/network-scripts/ifcfg-bond1

```
DEVICE=bond1
ONBOOT=yes
ENABLED=yes
BOOTPROTO=none
IPADDR=172.16.52.13
NETMASK=255.255.255.0
NETWORK=172.16.52.0
USERCTL=no
BONDING_OPTS="miimon=150 mode=2"
```

-

Hmm, not sure it's the same issue then, as I was using two pfSense boxes.

So not CentOS as the OS.