Iperf testing, same subnet, inconsistent speeds.

-

Hmm. Are you still seeing it in one direction only?

And only on the link to ix1?

The Marvell switch should not be any sort of restriction; it can easily pass the combined 5Gbps of the internal ports.

Can you try reassigning the port to ix0?

Steve

-

I have been doing some SCP testing using a 1GB file to other devices off the Marvell switch, and it appears inconsistent. I get full speed transfers (111MB/s-123MB/s) to two Intel NUCs and a custom ITX build (Ports 2, 5, and 8).

Testing scp to my Synology and an x86 SBC (Up board) both result in 20MB/s-50MB/s.
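For reference, the MB/s figures reported by scp can be put on the same scale as iperf's Mbits/sec. The sketch below is only a unit conversion; it assumes decimal megabytes (1 MB = 10^6 bytes) and a nominal 1000 Mbit/s link, and the function name `mbytes_to_mbits` is made up for illustration.

```python
LINK_MBITS = 1000  # nominal 1000baseT line rate (assumption: gigabit ports)


def mbytes_to_mbits(mb_per_s: float) -> float:
    """Convert MB/s to Mbit/s, assuming 1 MB = 10**6 bytes."""
    return mb_per_s * 8


# Transfer rates quoted in the post above.
rates = [
    ("NUC/ITX, low end", 111),
    ("NUC/ITX, high end", 123),
    ("Synology/SBC, low end", 20),
    ("Synology/SBC, high end", 50),
]

for label, rate in rates:
    mbits = mbytes_to_mbits(rate)
    share = mbits / LINK_MBITS
    print(f"{label}: {rate} MB/s = {mbits:.0f} Mbit/s ({share:.0%} of 1 Gbit/s)")
```

If scp is actually reporting binary megabytes, the Mbit/s values would be roughly 5% higher, which does not change the picture: the slow transfers sit well below half of line rate.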

I'll admit the x86 SBC probably isn't the best indicator of file transfer speed (Atom x5-Z8350, 32GB eMMC, Realtek 8111G - PCIe Gen2 x1 link to CPU), and the eMMC storage appears to be hitting its write speed limit for sustained transfer. (It maintains about 60MB/s for 2 seconds, then drops to 20MB/s.)

There is a local network upstream of this pfSense device, connected to the ix0 interface.

Testing scp to any of those devices also nets me around 111MB/s.

This leads me to believe there is a problem with the actual port to both the Synology and my x86 SBC. Or potentially those two devices have something in common at the OS or network adapter level that compromises file transfer speeds, but not iperf testing?

At this point I have to say, ix0/ix1 and their transceivers are not the issue.

Here is some information about the ports on the Marvell switch.

Ports 1, 3, and 4 are the problem. Synology is connected (now in active-passive mode) to port 3 and 4. The x86 SBC is connected to port 1.

    etherswitch0: VLAN mode: DOT1Q
    port1:  pvid: 101  state=8<FORWARDING> flags=0<> media: Ethernet autoselect (1000baseT <full-duplex>) status: active
    port2:  pvid: 101  state=8<FORWARDING> flags=0<> media: Ethernet autoselect (1000baseT <full-duplex,master>) status: active
    port3:  pvid: 101  state=8<FORWARDING> flags=0<> media: Ethernet autoselect (1000baseT <full-duplex>) status: active
    port4:  pvid: 101  state=8<FORWARDING> flags=0<> media: Ethernet autoselect (1000baseT <full-duplex>) status: active
    port5:  pvid: 1018 state=8<FORWARDING> flags=0<> media: Ethernet autoselect (1000baseT <full-duplex,master>) status: active
    port6:  pvid: 900  state=8<FORWARDING> flags=0<> media: Ethernet autoselect (none) status: no carrier
    port7:  pvid: 103  state=8<FORWARDING> flags=0<> media: Ethernet autoselect (100baseTX <full-duplex>) status: active
    port8:  pvid: 103  state=8<FORWARDING> flags=0<> media: Ethernet autoselect (1000baseT <full-duplex,master>) status: active
    port9:  pvid: 1    state=8<FORWARDING> flags=1<CPUPORT> media: Ethernet 2500Base-KX <full-duplex> status: active
    port10: pvid: 1    state=8<FORWARDING> flags=1<CPUPORT> media: Ethernet 2500Base-KX <full-duplex> status: active

Despite the scp showing low speeds, iperf3 to and from the x86 SBC is practically full speed.

From x86 SBC to PC

    iperf 3.7
    Linux host 5.10.0-9-amd64 #1 SMP Debian 5.10.70-1 (2021-09-30) x86_64
    Control connection MSS 1448
    Time: Sat, 18 Dec 2021 14:38:38 GMT
    Connecting to host 10.10.0.2, port 4444
    Cookie: s55tkrqkae6ayrmxixoyiig3lboy43xsume4
    TCP MSS: 1448 (default)
    [  5] local 10.10.1.4 port 52440 connected to 10.10.0.2 port 4444
    Starting Test: protocol: TCP, 1 streams, 131072 byte blocks, omitting 0 seconds, 10 second test, tos 0
    [ ID] Interval           Transfer     Bitrate         Retr  Cwnd
    [  5]   0.00-1.00   sec  68.8 MBytes   577 Mbits/sec    0    392 KBytes
    [  5]   1.00-2.00   sec  97.1 MBytes   814 Mbits/sec    0    602 KBytes
    [  5]   2.00-3.00   sec   111 MBytes   934 Mbits/sec    0    602 KBytes
    [  5]   3.00-4.00   sec   112 MBytes   942 Mbits/sec    0    602 KBytes
    [  5]   4.00-5.00   sec   111 MBytes   935 Mbits/sec    0    602 KBytes
    [  5]   5.00-6.00   sec   106 MBytes   891 Mbits/sec    0    602 KBytes
    [  5]   6.00-7.00   sec   111 MBytes   933 Mbits/sec    0    602 KBytes
    [  5]   7.00-8.00   sec   112 MBytes   944 Mbits/sec    0    602 KBytes
    [  5]   8.00-9.00   sec   112 MBytes   944 Mbits/sec    0    602 KBytes
    [  5]   9.00-10.00  sec   109 MBytes   911 Mbits/sec    0    636 KBytes
    - - - - - - - - - - - - - - - - - - - - - - - - -
    Test Complete. Summary Results:
    [ ID] Interval           Transfer     Bitrate         Retr
    [  5]   0.00-10.00  sec  1.03 GBytes   882 Mbits/sec    0             sender
    [  5]   0.00-10.01  sec  1.02 GBytes   879 Mbits/sec                  receiver
    CPU Utilization: local/sender 21.8% (0.6%u/21.2%s), remote/receiver 18.3% (2.5%u/15.8%s)
    snd_tcp_congestion cubic
    rcv_tcp_congestion cubic
    iperf Done.

From PC to x86 SBC

    iperf 3.7
    Linux host 5.11.0-43-generic #47~20.04.2-Ubuntu SMP Mon Dec 13 11:06:56 UTC 2021 x86_64
    Control connection MSS 1448
    Time: Sat, 18 Dec 2021 14:39:23 GMT
    Connecting to host 10.10.1.4, port 4444
    Cookie: hky2jxyxjobncjqjsqkkutvqxpadhkkhxm2g
    TCP MSS: 1448 (default)
    [  5] local 10.10.0.2 port 35838 connected to 10.10.1.4 port 4444
    Starting Test: protocol: TCP, 1 streams, 131072 byte blocks, omitting 0 seconds, 10 second test, tos 0
    [ ID] Interval           Transfer     Bitrate         Retr  Cwnd
    [  5]   0.00-1.00   sec   106 MBytes   891 Mbits/sec    0    960 KBytes
    [  5]   1.00-2.00   sec   100 MBytes   839 Mbits/sec    0    960 KBytes
    [  5]   2.00-3.00   sec   101 MBytes   849 Mbits/sec    0    960 KBytes
    [  5]   3.00-4.00   sec   100 MBytes   839 Mbits/sec    0    960 KBytes
    [  5]   4.00-5.00   sec   108 MBytes   902 Mbits/sec    0   1007 KBytes
    [  5]   5.00-6.00   sec   106 MBytes   891 Mbits/sec    0   1.25 MBytes
    [  5]   6.00-7.00   sec   101 MBytes   849 Mbits/sec    0   1.25 MBytes
    [  5]   7.00-8.00   sec   100 MBytes   839 Mbits/sec    0   1.25 MBytes
    [  5]   8.00-9.00   sec   100 MBytes   839 Mbits/sec    0   1.25 MBytes
    [  5]   9.00-10.00  sec   100 MBytes   839 Mbits/sec    0   1.25 MBytes
    - - - - - - - - - - - - - - - - - - - - - - - - -
    Test Complete. Summary Results:
    [ ID] Interval           Transfer     Bitrate         Retr
    [  5]   0.00-10.00  sec  1022 MBytes   858 Mbits/sec    0             sender
    [  5]   0.00-10.00  sec  1016 MBytes   852 Mbits/sec                  receiver
    CPU Utilization: local/sender 1.3% (0.0%u/1.3%s), remote/receiver 49.5% (6.7%u/42.8%s)
    snd_tcp_congestion cubic
    rcv_tcp_congestion cubic
    iperf Done.

I think this is a problem with these two devices (Synology and x86 SBC). I am pretty sure the Synology uses a Realtek nic, maybe that could be the issue?

-

Realtek NIC under Linux is probably fine.

The fact that iperf gets full speed and SCP transfers do not implies the limitation is not the network. It's the storage speed or the CPU's ability to keep up with the SCP encryption.

I'd be very surprised if the switch ports behaved differently, but try swapping them; it should be easy enough.
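One way to check the storage side independently of the network is to time sustained writes locally on the slow device. The following is a rough sketch, not a proper benchmark: `write_throughput` and its parameters are hypothetical names, it syncs each chunk to disk so the page cache does not hide the real write speed, and the path must point at the storage under test (a default temp directory may be RAM-backed on some systems).

```python
import os
import tempfile
import time


def write_throughput(path, total_mib=256, chunk_mib=16):
    """Write total_mib of random data to path in chunk_mib pieces.

    Each chunk is flushed and fsync'd before timing stops, and the
    per-chunk rate in MiB/s is returned as a list, so a burst followed
    by a drop shows up as a falling sequence.
    """
    chunk = os.urandom(chunk_mib * 1024 * 1024)
    rates = []
    with open(path, "wb") as f:
        for _ in range(total_mib // chunk_mib):
            start = time.perf_counter()
            f.write(chunk)
            f.flush()
            os.fsync(f.fileno())
            elapsed = time.perf_counter() - start
            rates.append(chunk_mib / elapsed)
    return rates


if __name__ == "__main__":
    # Assumption: replace the temp directory with a directory on the
    # eMMC or NAS volume being investigated.
    with tempfile.TemporaryDirectory() as d:
        for i, rate in enumerate(write_throughput(os.path.join(d, "probe.bin"))):
            print(f"chunk {i:2d}: {rate:7.1f} MiB/s")
```

If the per-chunk rate settles near the speeds seen with scp, storage is the likely limit; if it stays much higher, CPU load during the transfer is the next thing to look at.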

I do note that ports 2, 5 and 8 have some flow control active and the others do not.
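The difference between the ports can be pulled out of the switch listing mechanically. The sketch below groups the front-panel ports by the media options reported in the output quoted earlier in the thread; `parse_ports` and `group_by_options` are illustrative names, the regular expression is written only against the lines shown here, and the script does not try to interpret what each option means.

```python
import re

# Port lines for the eight front-panel ports, copied from the output
# quoted earlier in the thread.
OUTPUT = """
port1: pvid: 101 state=8<FORWARDING> flags=0<> media: Ethernet autoselect (1000baseT <full-duplex>) status: active
port2: pvid: 101 state=8<FORWARDING> flags=0<> media: Ethernet autoselect (1000baseT <full-duplex,master>) status: active
port3: pvid: 101 state=8<FORWARDING> flags=0<> media: Ethernet autoselect (1000baseT <full-duplex>) status: active
port4: pvid: 101 state=8<FORWARDING> flags=0<> media: Ethernet autoselect (1000baseT <full-duplex>) status: active
port5: pvid: 1018 state=8<FORWARDING> flags=0<> media: Ethernet autoselect (1000baseT <full-duplex,master>) status: active
port6: pvid: 900 state=8<FORWARDING> flags=0<> media: Ethernet autoselect (none) status: no carrier
port7: pvid: 103 state=8<FORWARDING> flags=0<> media: Ethernet autoselect (100baseTX <full-duplex>) status: active
port8: pvid: 103 state=8<FORWARDING> flags=0<> media: Ethernet autoselect (1000baseT <full-duplex,master>) status: active
"""

PORT_RE = re.compile(
    r"port(?P<port>\d+):.*?media: Ethernet autoselect "
    r"\((?P<media>[^ )]+)(?: <(?P<opts>[^>]*)>)?\) "
    r"status: (?P<status>active|no carrier)",
    re.S,
)


def parse_ports(text):
    """Return {port number: {"media", "options", "status"}}."""
    ports = {}
    # Split before every "portN:" so each chunk holds exactly one port,
    # whether the listing is one port per line or one long line.
    for chunk in re.split(r"(?=port\d+:)", text):
        m = PORT_RE.search(chunk)
        if m:
            opts = m.group("opts").split(",") if m.group("opts") else []
            ports[int(m.group("port"))] = {
                "media": m.group("media"),
                "options": opts,
                "status": m.group("status"),
            }
    return ports


def group_by_options(ports):
    """Group active ports by (media, options) so outliers stand out."""
    groups = {}
    for num, info in sorted(ports.items()):
        if info["status"] != "active":
            continue
        key = (info["media"], tuple(info["options"]))
        groups.setdefault(key, []).append(num)
    return groups


if __name__ == "__main__":
    for (media, opts), nums in group_by_options(parse_ports(OUTPUT)).items():
        print(f"{media} <{','.join(opts)}>: ports {nums}")
```

On this data the ports split into the same two sets discussed in the thread: 2, 5 and 8 on one side, 1, 3 and 4 on the other, with port 7 linked at 100baseTX.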

Steve

-

The speed limitation also applies to native rsync and SMB3.

Is there a more verbose switch command for the Marvell switch that I can run?

I have not personally configured any flow control.

-

@erasedhammer Why don't you just take pfSense out of the equation if you suspect it to be causing your 50MBps limit in file transfers?

I don't see how that would be the case when you're showing network speeds pretty close to wire speed, and for sure higher than 50MBps.

Connect your PC and NAS to the dumb switch - do you see full speed file transfers then?

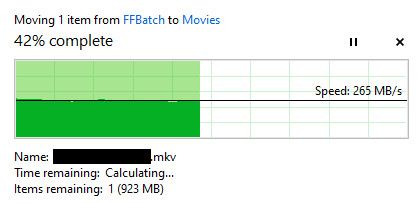

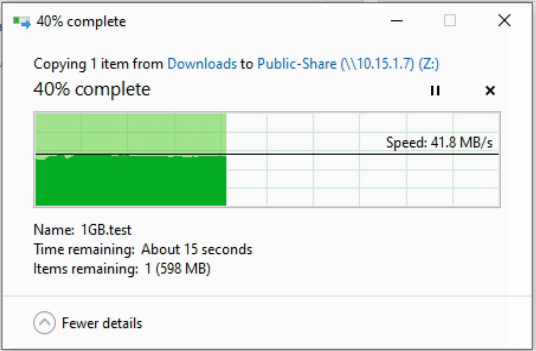

edit: I am in the middle of moving some files around from my PC to NAS, and while I do not have your specific NAS (I have a Synology DS918+), I do not have any issues with disks or CPU causing slowdowns. I far exceed 50MBps, even while streaming movies off the NAS to currently 4 different viewers.

This was like a 1.8GB file, via a 2.5GbE connection.

-

This equipment is separated by two floors and some of these devices are essential to the network. Taking things out of service for more than a couple minutes is not possible right now.

I'm just trying to troubleshoot in the least invasive way. Next month I am doing a major migration and will have the required downtime to properly test this.

-

@erasedhammer said in Iperf testing, same subnet, inconsistent speeds.:

Is there a more verbose switch command for the Marvell switch that I can run?

You can run:

    etherswitchcfg -v

but that's the same info the GUI displays.

I would be very surprised if this was an issue with the switch. There's always a first time, but as far as I know we have never seen an issue like that.

The flow control is negotiated when the link is established, so some of those devices are capable of it or configured to use it.

Steve

-

@erasedhammer said in Iperf testing, same subnet, inconsistent speeds.:

Taking things out of service for more than a couple minutes is not possible right now.

That puts a damper on testing ;)

Any way you could take a laptop to where the NAS is?

I'm just at a complete loss to come up with some scenario where pfSense would be limiting file transfers if you're seeing those speeds through it via iperf.

-

@johnpoz

I was thinking of using the secondary nic. I have to research if dismantling bond0 to get two separate nics on Synology would break connectivity permanently. That would be more disastrous than moving the whole NAS.

-

@erasedhammer said in Iperf testing, same subnet, inconsistent speeds.:

I have to research if dismantling bond0 to get two separate nics on Synology would break connectivity permanently

Yeah, probably don't do that!

-

Deleting the bond0 has left one interface intact with the correct IP address. So I was able to use the secondary interface to test speeds directly.

It seems pretty clear that pfSense is not the problem at all.

I get the same speeds with a laptop connected directly to the NAS.

Iperf3 test shows network is not the problem.

From Laptop to NAS

    iperf 3.6
    Linux nas 4.4.180+ #42218 SMP Mon Oct 18 19:16:01 CST 2021 aarch64
    -----------------------------------------------------------
    Server listening on 4444
    -----------------------------------------------------------
    Time: Sat, 18 Dec 2021 19:33:48 GMT
    Accepted connection from 10.15.1.8, port 13951
    Cookie: DESKTOP-VPJHHOI.1639856027.395816.51
    TCP MSS: 0 (default)
    [  5] local 10.15.1.7 port 4444 connected to 10.15.1.8 port 13952
    Starting Test: protocol: TCP, 1 streams, 131072 byte blocks, omitting 0 seconds, 10 second test, tos 0
    [ ID] Interval           Transfer     Bitrate
    [  5]   0.00-1.00   sec   106 MBytes   889 Mbits/sec
    [  5]   1.00-2.00   sec   111 MBytes   929 Mbits/sec
    [  5]   2.00-3.00   sec   110 MBytes   922 Mbits/sec
    [  5]   3.00-4.00   sec   111 MBytes   930 Mbits/sec
    [  5]   4.00-5.00   sec   111 MBytes   933 Mbits/sec
    [  5]   5.00-6.00   sec   110 MBytes   924 Mbits/sec
    [  5]   6.00-7.00   sec   109 MBytes   913 Mbits/sec
    [  5]   7.00-8.00   sec   110 MBytes   924 Mbits/sec
    [  5]   8.00-9.00   sec   109 MBytes   917 Mbits/sec
    [  5]   9.00-10.00  sec   112 MBytes   936 Mbits/sec
    [  5]  10.00-10.03  sec  3.90 MBytes   944 Mbits/sec
    - - - - - - - - - - - - - - - - - - - - - - - - -
    Test Complete. Summary Results:
    [ ID] Interval           Transfer     Bitrate
    [  5] (sender statistics not available)
    [  5]   0.00-10.03  sec  1.08 GBytes   922 Mbits/sec                  receiver
    CPU Utilization: local/receiver 14.8% (0.6%u/14.1%s), remote/sender 0.0% (0.0%u/0.0%s)
    rcv_tcp_congestion cubic
    iperf 3.6

From NAS to Laptop

    iperf 3.6
    Linux nas 4.4.180+ #42218 SMP Mon Oct 18 19:16:01 CST 2021 aarch64
    Control connection MSS 1460
    Time: Sat, 18 Dec 2021 19:35:02 GMT
    Connecting to host 10.15.1.8, port 4444
    Cookie: yg6a4kxaczkaolqgasfxmaynim35ot3rbjro
    TCP MSS: 1460 (default)
    [  5] local 10.15.1.7 port 32954 connected to 10.15.1.8 port 4444
    Starting Test: protocol: TCP, 1 streams, 131072 byte blocks, omitting 0 seconds, 10 second test, tos 0
    [ ID] Interval           Transfer     Bitrate         Retr  Cwnd
    [  5]   0.00-1.00   sec   111 MBytes   934 Mbits/sec    0    211 KBytes
    [  5]   1.00-2.00   sec   108 MBytes   903 Mbits/sec    0    211 KBytes
    [  5]   2.00-3.00   sec   106 MBytes   887 Mbits/sec    0    211 KBytes
    [  5]   3.00-4.00   sec   107 MBytes   900 Mbits/sec    0    211 KBytes
    [  5]   4.00-5.00   sec   106 MBytes   891 Mbits/sec    0    211 KBytes
    [  5]   5.00-6.00   sec   104 MBytes   870 Mbits/sec    0    211 KBytes
    [  5]   6.00-7.00   sec   109 MBytes   911 Mbits/sec    0    211 KBytes
    [  5]   7.00-8.00   sec   107 MBytes   895 Mbits/sec    0    211 KBytes
    [  5]   8.00-9.00   sec   107 MBytes   901 Mbits/sec    0    211 KBytes
    [  5]   9.00-10.00  sec   107 MBytes   900 Mbits/sec    0    211 KBytes
    - - - - - - - - - - - - - - - - - - - - - - - - -
    Test Complete. Summary Results:
    [ ID] Interval           Transfer     Bitrate         Retr
    [  5]   0.00-10.00  sec  1.05 GBytes   899 Mbits/sec    0             sender
    [  5]   0.00-10.00  sec  1.05 GBytes   899 Mbits/sec                  receiver
    CPU Utilization: local/sender 5.7% (0.4%u/5.3%s), remote/receiver 13.4% (6.5%u/6.9%s)
    snd_tcp_congestion cubic
    iperf Done.
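For anyone repeating these runs, iperf3 can emit JSON with the `-J` flag, which makes the summary easier to compare against file-transfer speeds than reading the text output. The sketch below extracts the end-of-test totals; `summarize` is a hypothetical helper, and `SAMPLE` is a hand-written, heavily trimmed stand-in for a real result that reuses the 899 Mbits/sec figure from the NAS-to-laptop run.

```python
import json

# Hand-written stand-in for `iperf3 -J` output, trimmed to the fields
# used below; a real result contains many more keys.
SAMPLE = json.loads("""
{
  "end": {
    "sum_sent":     {"bits_per_second": 899000000.0, "retransmits": 0},
    "sum_received": {"bits_per_second": 899000000.0}
  }
}
""")


def summarize(result: dict) -> dict:
    """Return sender/receiver totals in Mbit/s plus receiver MB/s."""
    end = result["end"]
    sent = end["sum_sent"]["bits_per_second"]
    received = end["sum_received"]["bits_per_second"]
    return {
        "sent_mbits": sent / 1e6,
        "recv_mbits": received / 1e6,
        # Decimal megabytes per second, comparable to scp/rsync figures.
        "recv_mbytes": received / 8e6,
    }


if __name__ == "__main__":
    s = summarize(SAMPLE)
    print(f"sent {s['sent_mbits']:.0f} Mbit/s, "
          f"received {s['recv_mbits']:.0f} Mbit/s "
          f"(~{s['recv_mbytes']:.0f} MB/s)")
```

A real result file could be produced with something like `iperf3 -c 10.15.1.8 -p 4444 -J > result.json` and loaded with `json.load` in place of `SAMPLE`.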