New PPPoE backend, some feedback

-

Ah, yes, PPP connections are not supported in HA setups indeed. But as you say, it can be made to work (ish). What is the parent for the PPPoE there then? The CARP VIP? I don't think that's possible.

-

@stephenw10 said in New PPPoE backend, some feedback:

What is the parent for the PPPoE there then? The CARP VIP? I don't think that's possible.

Yes, it's a CARP VIP. I think I'll just get rid of it.

-

I can't remember or find that thread, but I think someone already asked about this...

Where exactly does the new PPPoE backend write the connection log? Status/System Logs/PPP contains only old mpd records.

-

Hmm, well it looks like you can (or could) actually set a CARP VIP as a PPPoE parent. Which seems illogical but....

And I assume you can't with if_pppoe because that's not a physical interface....

There isn't anything like the same logging that mpd gives. Yet. I would run a pcap on the parent NIC and see what's actually happening. I would think it has to send from the CARP MAC since it clearly doesn't use the actual VIP IP.
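A capture along these lines would show which source MAC the PPPoE frames actually carry. This is only a sketch: the function name `pppoe_capture` is made up for illustration, and the interface name passed to it has to be the real parent NIC on the box in question.

```shell
#!/bin/sh
# Capture PPPoE discovery (0x8863) and session (0x8864) frames on a parent NIC.
# Usage: pppoe_capture <interface> [packet_count]
pppoe_capture() {
    iface="$1"
    count="${2:-50}"    # stop after this many packets (default 50)
    # -e prints the link-level header (source/destination MAC), -n skips name lookups
    tcpdump -e -n -i "$iface" -c "$count" 'ether proto 0x8863 or ether proto 0x8864'
}
```

If no PADI frames show up at all, the session is probably never being attempted on that interface.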

-

@stephenw10 said in New PPPoE backend, some feedback:

There isn't anything like the same logging that mpd gives. Yet. I would run a pcap on the parent NIC and see what's actually happening. I would think it has to send from the CARP MAC since it clearly doesn't use the actual VIP IP.

Are you talking about the mpd backend or the new one? On the new one, when selecting the CARP VIP, the pcap on the parent interface naturally shows nothing — the new backend simply can't configure itself properly and doesn't start at all.

Interesting. I switched back to mpd, leaving the settings with the VIP that were configured for the new backend — and now PPPoE doesn't want to work even with mpd. Something is definitely wrong with the configuration conversion between the two backends.

In the log, it also looks like it's connecting through the wrong interface:

2025-04-08 18:55:45.407053+03:00 ppp 56619 [wan] Bundle: Interface ng0 created

2025-04-08 18:55:45.406382+03:00 ppp 56619 web: web is not running

2025-04-08 18:55:44.495307+03:00 ppp 36089 process 36089 terminated

2025-04-08 18:55:44.446476+03:00 ppp 36089 [wan] Bundle: Shutdown

2025-04-08 18:55:44.403502+03:00 ppp 56619 waiting for process 36089 to die...

2025-04-08 18:55:43.401537+03:00 ppp 56619 waiting for process 36089 to die...

2025-04-08 18:55:42.400289+03:00 ppp 36089 [wan] IPV6CP: Close event

2025-04-08 18:55:42.400259+03:00 ppp 36089 [wan] IPCP: Close event

2025-04-08 18:55:42.400219+03:00 ppp 36089 [wan] IFACE: Close event

2025-04-08 18:55:42.400117+03:00 ppp 36089 caught fatal signal TERM

2025-04-08 18:55:42.399979+03:00 ppp 56619 waiting for process 36089 to die...

2025-04-08 18:55:42.399687+03:00 ppp 56619 process 56619 started, version 5.9

2025-04-08 18:55:42.399135+03:00 ppp 56619 Multi-link PPP daemon for FreeBSD

2025-04-08 18:54:43.826132+03:00 ppp 36089 [wan] Bundle: Interface ng0 created

but nothing on LAGG.

Ok next step...

I booted into the previous snapshot from February, launched PPPoE and pcap there —

Here’s an example of one of the packets:

b4:96:91:c9:77:84 is just the MAC of ixl0, the active Ethernet card in the LAGG (FAILOVER) that I used for the CARP VIP.

-

Hmm, well I can certainly see why that might fail. Setting it on a VIP really makes no sense for an L2 protocol. It seems like it worked 'by accident'. I'm not sure that will ever work with if_pppoe. I'll see if Kristof has any other opinion...

-

@stephenw10 I don't see how setting a carp IP on a PPPoE interface would make sense, no.

It doesn't make sense on the underlying Ethernet device (because it's not expected to have an address assigned at all), and also doesn't make sense on the PPPoE device itself, because there's no way to do the ARP dance that makes carp work.

-

@kprovost said in New PPPoE backend, some feedback:

It doesn't make sense on the underlying Ethernet device (because it's not expected to have an address assigned at all), and also doesn't make sense on the PPPoE device itself, because there's no way to do the ARP dance that makes carp work.

The whole point is to use the status of the parent interface to bring up the PPPoE interface. To determine the status of the parent (underlying) interface, the CARP VIP on the parent interface is exactly what's needed — to identify which node is the master and where to bring up PPPoE. Honestly, I have no idea why it even worked before. But if it's not supposed to work and never will, then of course I won't insist on this approach :)

Ideally, there would simply be a feature to bring up the PPPoE WAN session only if the firewall is the MASTER.

I doubt I'm the only one whose ISP doesn't appreciate users trying to initiate more than one PPPoE session.

-

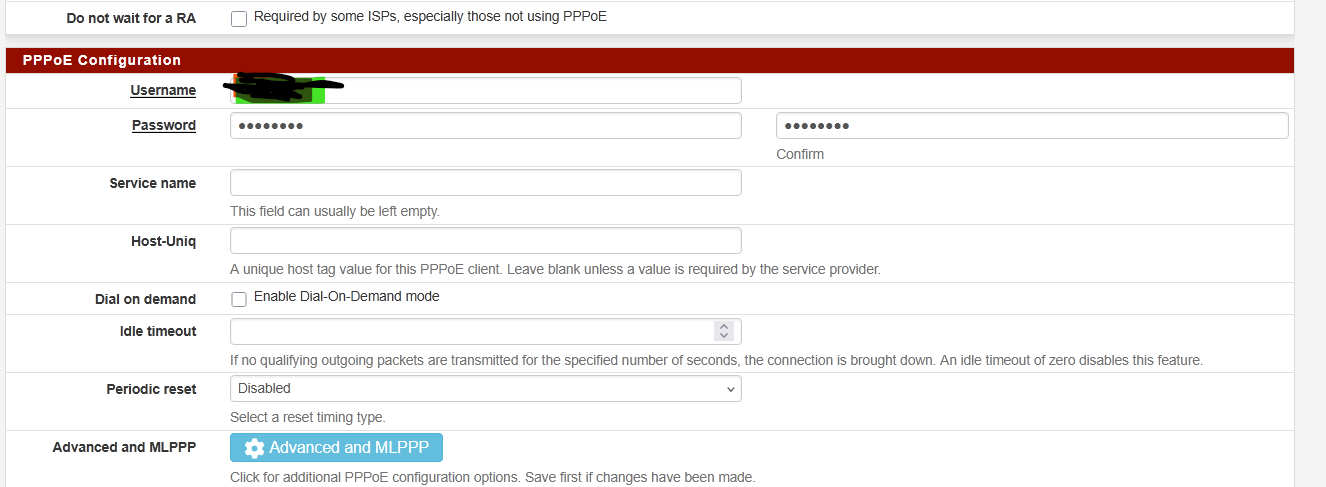

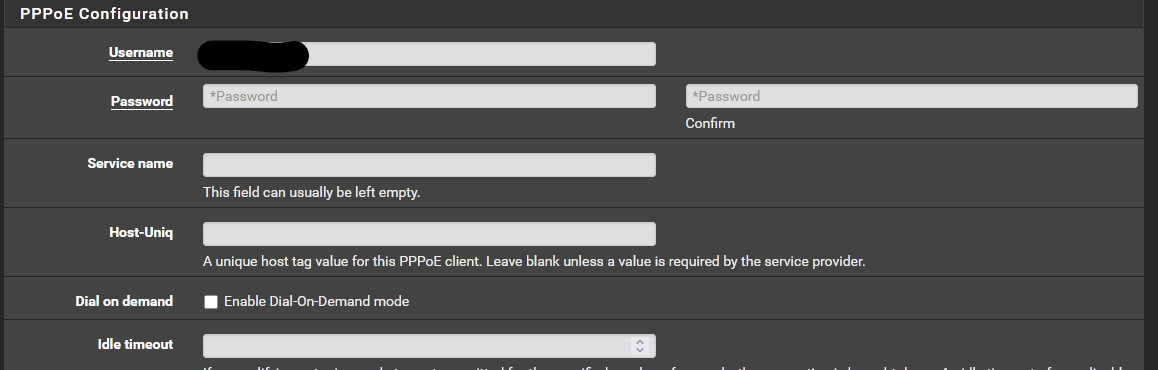

I've recently upgraded to the latest 2.8 beta and switched to the new PPPoE backend.

I really didn't have any issues with the previous one other than performance. I recently upgraded to 3gig fiber and have been struggling to get full speed when using PPPoE on the pfSense box.

I have found no difference from the old to the new backend. Performance still seems to be the same. The odd thing is that I get full speed on the upload, but only about half to 2/3rds on the down. I.e. I get 3000-3200Mbps upload, but download is usually around 1700-1900Mbps.

I've tried it with an intel X520 card, and an X710 card. No difference.

What I have noticed, and I'm not sure if this is the reason for the performance hit, is that on the upload (tx) side it seems to use all the queues available to it, but on the rx side it only uses the first queue. I tried tweaking the queues on the X710 and it didn't make any difference.

Here's an example

[2.8.0-BETA][root@router]/root: sysctl -a | grep '.ixl..*xq0' | grep packets

dev.ixl.0.pf.txq07.packets: 2550054

dev.ixl.0.pf.txq06.packets: 2444906

dev.ixl.0.pf.txq05.packets: 542271

dev.ixl.0.pf.txq04.packets: 781264

dev.ixl.0.pf.txq03.packets: 2216896

dev.ixl.0.pf.txq02.packets: 2738515

dev.ixl.0.pf.txq01.packets: 5394

dev.ixl.0.pf.txq00.packets: 8645

dev.ixl.0.pf.rxq07.packets: 0

dev.ixl.0.pf.rxq06.packets: 0

dev.ixl.0.pf.rxq05.packets: 0

dev.ixl.0.pf.rxq04.packets: 0

dev.ixl.0.pf.rxq03.packets: 0

dev.ixl.0.pf.rxq02.packets: 0

dev.ixl.0.pf.rxq01.packets: 0

dev.ixl.0.pf.rxq00.packets: 6262688

At the moment I have this in my loader.conf.local file:

net.tcp.tso="0"

net.inet.tcp.lro="0"

hw.ixl.flow_control="0"

hw.ix1.num_queues="8"

dev.ixl.0.iflib.override_qs_enable=1

dev.ixl.0.iflib.override_nrxqs=8

dev.ixl.0.iflib.override_ntxqs=8

dev.ixl.1.iflib.override_qs_enable=1

dev.ixl.1.iflib.override_nrxqs=8

dev.ixl.1.iflib.override_ntxqs=8

dev.ixl.0.iflib.override_nrxds=4096

dev.ixl.0.iflib.override_ntxds=4096

dev.ixl.1.iflib.override_nrxds=4096

dev.ixl.1.iflib.override_ntxds=4096

If I could fix this issue, the rest seems to be rock solid.
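For anyone wanting to compare numbers, the per-queue counters above can be boiled down to one line per direction. This is a rough sketch only: the function name `queue_summary` is invented here, and it assumes the counter lines look exactly like the `dev.ixl.0.pf.rxqNN.packets: N` output shown earlier.

```shell
#!/bin/sh
# Summarize per-queue packet counters read from stdin.
# Expects lines such as "dev.ixl.0.pf.rxq00.packets: 6262688".
queue_summary() {
    awk -F': ' '
        /\.(rx|tx)q[0-9]+\.packets:/ {
            dir = ($1 ~ /\.rxq/) ? "rx" : "tx"
            total[dir] += $2                    # packets across all queues
            if ($2 > 0) active[dir]++           # queues that saw any traffic
            if ($2 > max[dir]) max[dir] = $2    # busiest single queue
        }
        END {
            for (d in total)
                printf "%s total=%d active_queues=%d busiest_share=%.0f%%\n",
                    d, total[d], active[d],
                    (total[d] ? 100 * max[d] / total[d] : 0)
        }' | sort
}

# On the firewall (assumed usage): sysctl dev.ixl.0 | queue_summary
```

A busiest_share near 100% on the rx line means receive traffic is effectively landing on a single queue.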

-

How are you testing? What hardware are you running?

The upload speed is also unchanged from mpd5?

-

I'm running a Supermicro E300-8D with a Xeon D-1518 CPU and 16gig of RAM.

The onboard 10gig NICs are LAGG'd into my LAN, and the add-on slot currently holds an X710, though initially I had tried an X520.

The switch from mpd5 made very little if any difference. Maybe 100Mbps, if that.

I'm testing from my pc which has 10gig fiber into my core switch and then the pfsense box is fed by the mentioned 10gig LAGG group.

When I run the speed test right from the modem itself using the provider interface, it comes back with 3.2gig up and down every time. When running it from my PC I'm using the provider's speed test site. Upload is always 3-3.2gig, but the download always falls short.

The only difference I saw going from mpd5 to the new one is that the CPU usage dropped. Previously on a test I'd see upwards of 60% CPU; now it's 38-43%: 39% on the download and upwards of 43% on the upload tests.

The only thing I haven't tried that I can think of is to remove the pppoe from pfsense and just set it up as a dhcp to the provider modem and see if I get the full speed all the way through.

The part I find odd is that if it was the PPPoE, I wouldn't think I'd get full speed on the upload? That's why I started looking at other things, like the queues, to see if I could find something off.

-

Does that speedtest use multiple connections?

The reason you see a difference between up and down is that when you're downloading, Receive Side Scaling applies to the PPPoE directly, and that is what limits it.

However if_pppoe is RSS enabled so should be able to spread the load across the queues/cores much better. But only if there are multiple streams to spread.

And just to be clear the WAN here was either the X520 or X710 NIC?
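To illustrate why the number of streams matters: RSS picks a receive queue from a hash of each flow's addresses and ports, so a single flow always lands on the same queue however many queues exist. The toy model below is only a sketch. It uses `cksum` as a stand-in hash (real NICs typically use a Toeplitz hash), and the function name and flow strings are invented for the example.

```shell
#!/bin/sh
# Toy model of RSS queue selection: hash the flow tuple, take it modulo the queue count.
# cksum (a CRC) stands in for the NIC's real hash; illustration only.
queue_for_flow() {
    flow="$1"      # e.g. "203.0.113.10:443>198.51.100.7:51000/tcp"
    queues="$2"    # number of receive queues
    hash=$(printf '%s' "$flow" | cksum | cut -d' ' -f1)
    echo $(( hash % queues ))
}

# The same flow always maps to the same queue; different flows may spread out.
for port in 51000 51001 51002 51003; do
    echo "port $port -> queue $(queue_for_flow "203.0.113.10:443>198.51.100.7:$port/tcp" 8)"
done
```

With one stream there is only one hash value, so only one queue (and one core) does the receive work.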

-

@dsl-ottawa

Did you remove the net.isr.dispatch=deferred? See PPPoE with multi-queue NICs

-

@w0w said in New PPPoE backend, some feedback:

@dsl-ottawa

Did you remove the net.isr.dispatch=deferred? See PPPoE with multi-queue NICs

@w0w I did remove this value; shouldn't I have? I didn't see any change without it.

-

@mr_nets said in New PPPoE backend, some feedback:

@w0w I did remove this value; shouldn't I have? I didn't see any change without it.

AFAIK it should be removed on the new backend.

Do you have any other tunes enabled, flow control, tcp segmentation offload, LRO, no?

Just guessing... I have never seen anything over 1Gig running PPPoE...

-

@w0w said in New PPPoE backend, some feedback:

@mr_nets said in New PPPoE backend, some feedback:

@w0w I did remove this value; shouldn't I have? I didn't see any change without it.

AFAIK it should be removed on the new backend.

Do you have any other tunes enabled, flow control, tcp segmentation offload, LRO, no?

Just guessing... I have never seen anything over 1Gig running PPPoE...

Fine, every offload setting is disabled as well. The only thing I didn't remove is Jumbo Frame on PPPoE (MTU 1500), since my ISP supports that.

-

What CPU usage are you seeing when you test? What about per-core usage? Is one core still pegged at 100%?

-

@stephenw10

The multiple connection question is one I can't really answer. All I can say is that it's my provider's tester and they sell up to 8gig on residential, so I have to assume that it would take multiple streams into account. There is a comment on their page that mentions Ookla and Speedtest, so there may be a linkage to them for the testing. It's an interesting thought though. Is there a known good tester to test against? I'm betting it's going to be hard to get a full pipe test across the internet.

Yup, I've tried both cards for the WAN side of the connection.

I've even wondered whether the problem isn't on the pfSense side at all but elsewhere. What if it's my desktop that is having the issue? (A long shot, but it is a device in the chain of the test.)

-

@w0w

I double checked and yup, currently it is not there.

So in my tunables, here are the ones I think I have changed (some have been there for a while to help the 10gig on the LAN side and general performance, and some were attempts to fix this issue). I'm certainly not an expert when it comes to tuning, so it's very possible that something is messed up.

kern.ipc.somaxconn 4096

hw.ix.tx_process_limit -1

hw.ix.rx_process_limit -1

hw.ix.rxd 4096

hw.ix.txd 4096

hw.ix.flow_control 0

hw.intr_storm_threshold 10000

net.isr.bindthreads 1

net.isr.maxthreads 8

net.inet.rss.enabled 1

net.inet.rss.bits 2

net.inet.ip.intr_queue_maxlen 4000

net.isr.numthreads 8

hw.ixl.tx_process_limit -1

hw.ixl.rx_process_limit -1

hw.ixl.rxd 4096

hw.ixl.rxd 4096

dev.ixl.0.fc 0

dev.ixl.1.fc 0

And in my boot local file:

net.inet.tcp.lro="0"

hw.ixl.flow_control="0"

hw.ix1.num_queues="8"

dev.ixl.0.iflib.override_qs_enable=1

dev.ixl.0.iflib.override_nrxqs=8

dev.ixl.0.iflib.override_ntxqs=8

dev.ixl.1.iflib.override_qs_enable=1

dev.ixl.1.iflib.override_nrxqs=8

dev.ixl.1.iflib.override_ntxqs=8

dev.ixl.0.iflib.override_nrxds=4096

dev.ixl.0.iflib.override_ntxds=4096

dev.ixl.1.iflib.override_nrxds=4096

dev.ixl.1.iflib.override_ntxds=4096

And on the network interface side:

TCP Segmentation Offloading, Large Receive and, of course, use if_pppoe are checked.

As for more than 1gig PPPoE, I'm not sure where else in the world uses it, but in Canada they seem to love their PPPoE; we can get it up to 8gig from one of the providers. Honestly, I still miss the old PVC-based ATM connections: no special configuration from the user's perspective, it's just a pipe and it worked lol. I know the reason they use PPPoE; it's just that in cases like this it's extra overhead to deal with.

-

Yeah, if it's Ookla it's almost certainly multistream. Also at those speeds it would probably need to be.

I'm not sure I can recommend anything other than the ISP's test at that speed either. Anything that was routed further may be restricted there.

But check the per-core CPU usage. Is it just hitting a single core limit?
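One rough way to spot a pegged core is to filter per-CPU lines for low idle time. The helper below is a sketch: `busy_cores` is an invented name, and it assumes input lines in the form that FreeBSD's `top -P` prints, e.g. `CPU 0:  2.0% user, ... 96.8% idle`.

```shell
#!/bin/sh
# Print every CPU whose idle percentage is below a threshold (default 10).
# Reads per-CPU lines such as:
#   CPU 1:  0.5% user,  0.0% nice, 60.0% system, 38.5% interrupt,  1.0% idle
busy_cores() {
    awk -v limit="${1:-10}" '
        /^CPU [0-9]+:/ {
            core = $2; sub(":", "", core)
            for (i = 3; i <= NF; i++)
                if ($i ~ /^idle/) {
                    idle = $(i - 1); sub("%", "", idle)   # value just before "idle"
                    if (idle + 0 < limit) print "CPU " core " idle " idle "%"
                }
        }'
}

# Assumed usage while a speed test is running: top -b -P -d 2 | busy_cores 10
```

If exactly one core shows up during the download test while the rest stay idle, that points at a single-core limit rather than at the link.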