Running pfSense 2.4.4 over a KVM VM in PROXMOX 6.1.5.

-

Hi everyone.

I am playing with pfSense 2.4.4 installed in a VM on PROXMOX 6.1.5, and it takes about five minutes for pfSense to start.

Do you know if there is any special configuration that I need to apply to the VM or to pfSense to make pfSense start faster?

Regards,

Ramsés

-

https://docs.netgate.com/pfsense/en/latest/virtualization/virtualizing-pfsense-with-proxmox.html

Since the VM is already up and running and the Proxmox network configuration is done properly, go down to the very last point and do the recommended advanced settings.

-

@viragomann, thank you very much for your answer.

I have configured the VM as described in the link you sent me.

There is only one difference:

The guide sets the disk to VirtIO, and I have the disk set to VirtIO SCSI.

Do you think this could be the problem?

Regards,

Ramsés

-

No, that won't matter here.

But did you disable "Hardware Checksum Offloading" as recommended?

-

@viragomann, yes, I have disabled "Hardware Checksum Offloading" too.

I followed the guide in the link when I installed pfSense on PROXMOX.

Regards,

Ramsés

-

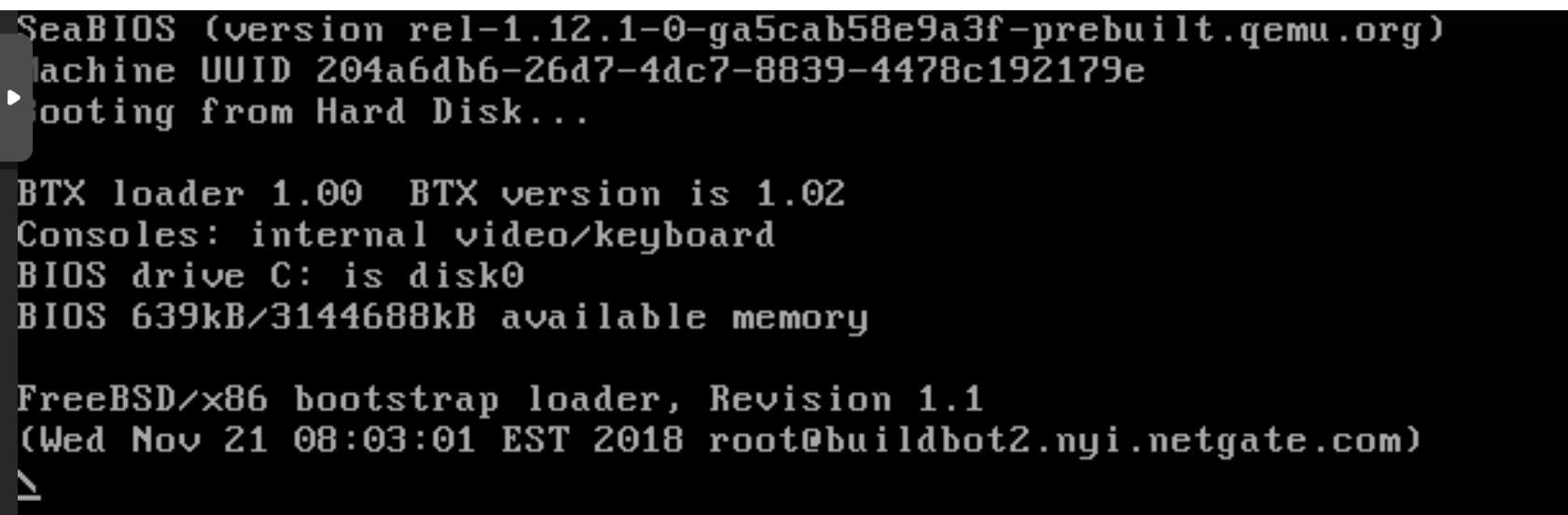

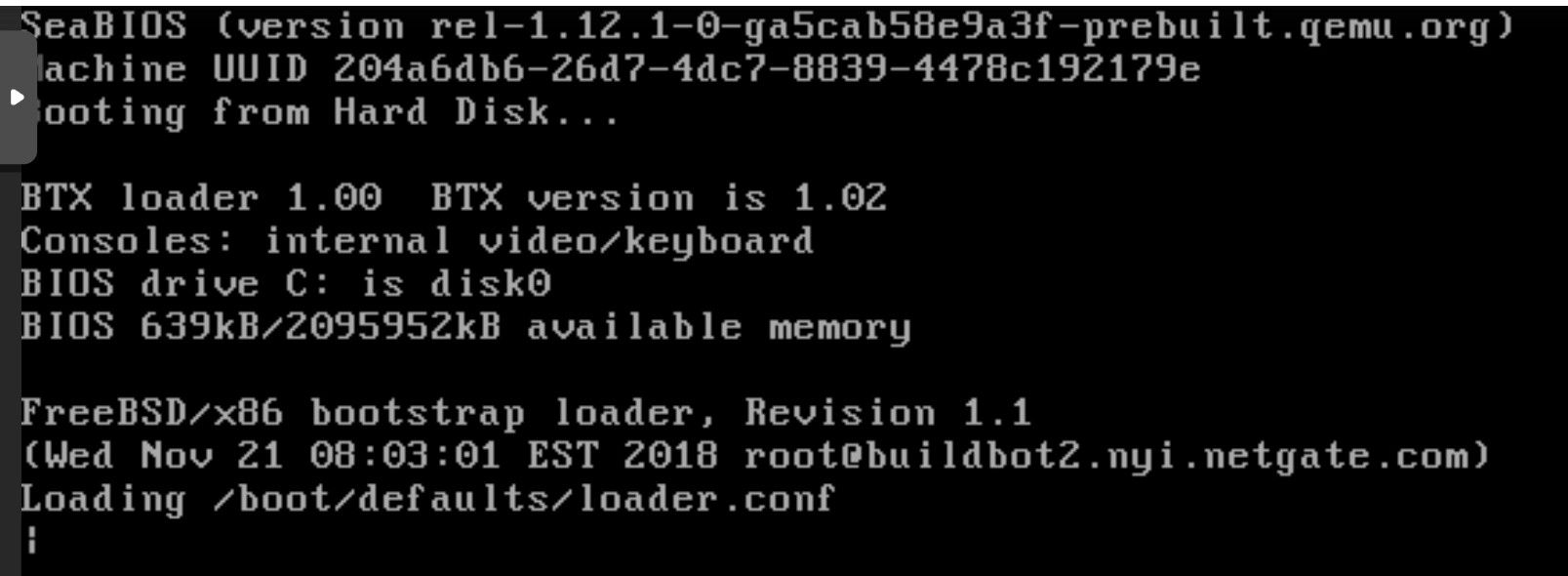

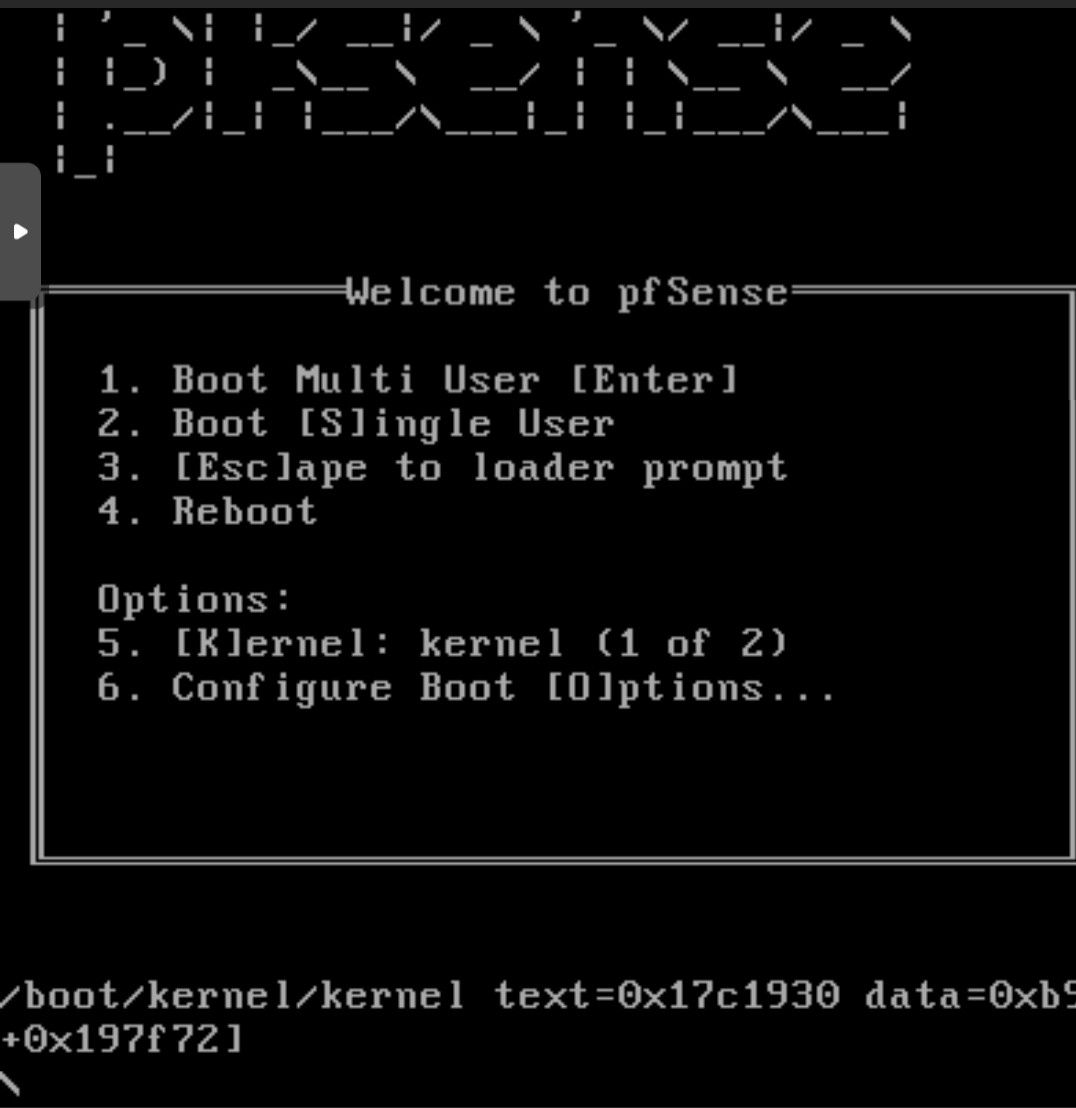

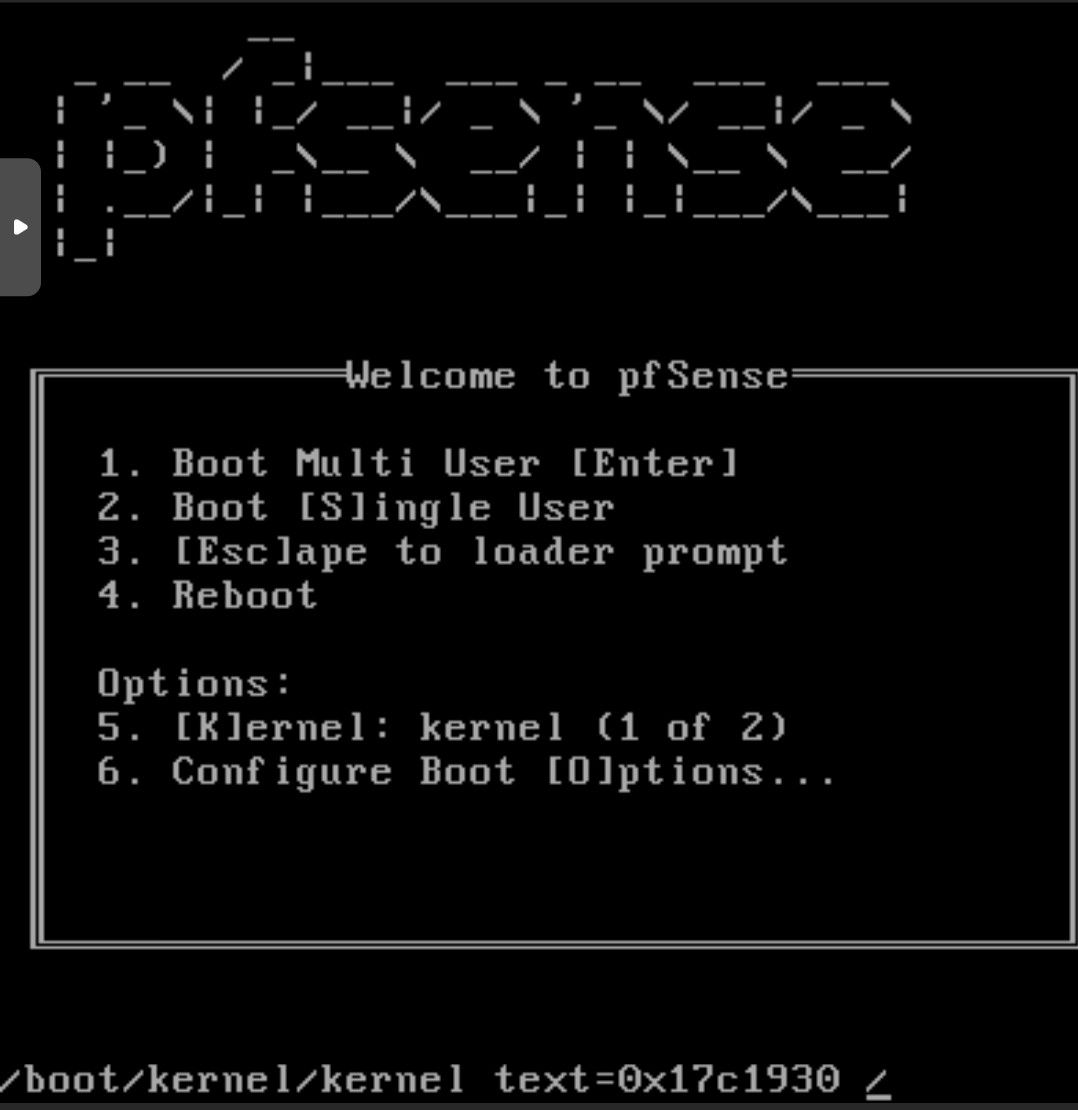

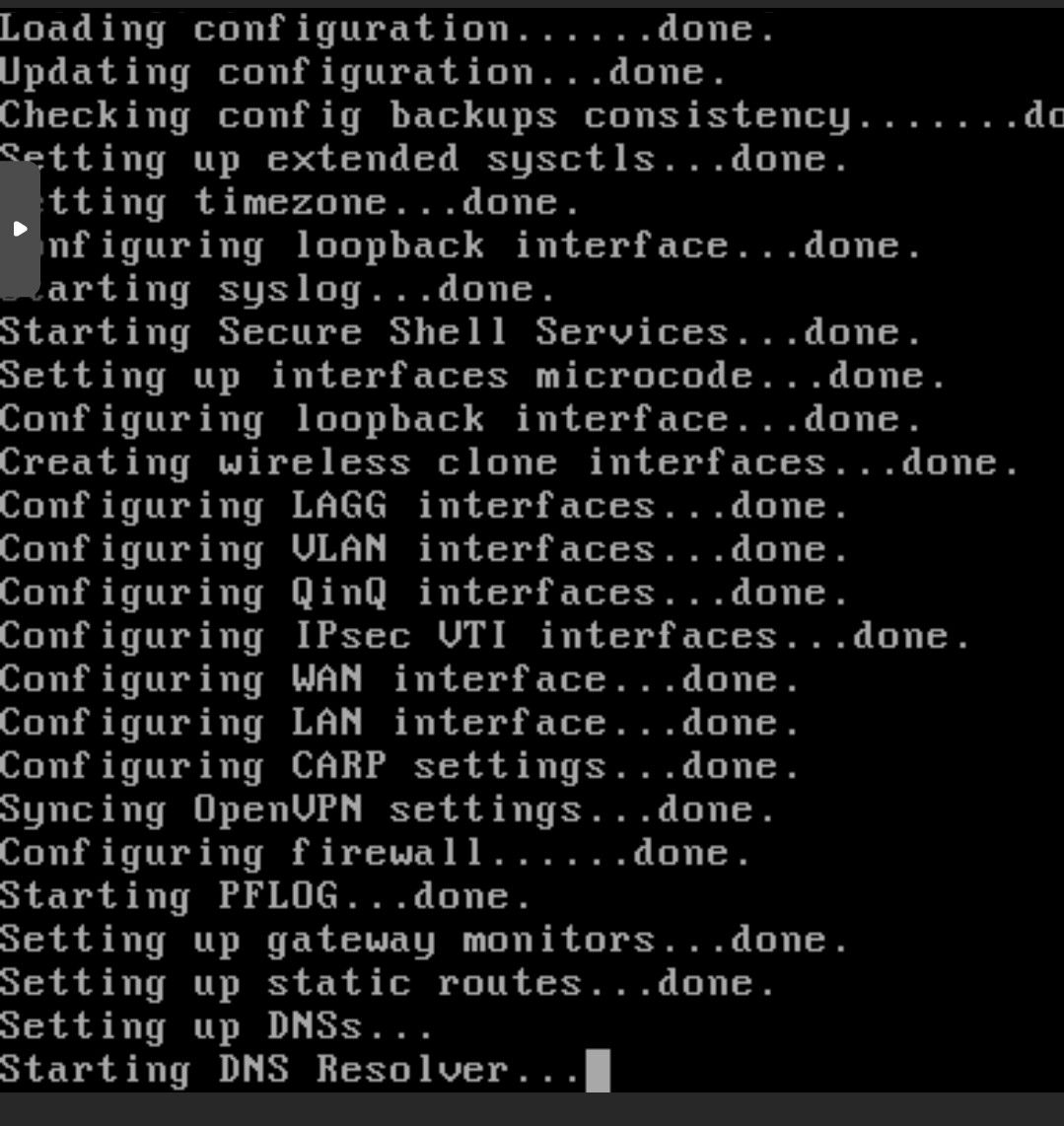

@viragomann, the installation finishes quickly, but when it restarts it gets stuck here.

Here.

Here.

And here.

I have installed the latest version of PROXMOX and it is up to date.

This is the KVM VM configuration:

    root@proxmox:~# qm config 750
    balloon: 0
    bootdisk: scsi0
    cores: 2
    cpu: kvm64
    ide2: local:iso/pfSense-CE-2.4.4-RELEASE-p3-amd64.iso,media=cdrom,size=680214K
    memory: 4096
    net0: virtio=E6:9F:61:80:C9:6A,bridge=vmbr0
    numa: 0
    onboot: 1
    ostype: l26
    scsi0: local-lvm:vm-750-disk-0,size=15G
    scsihw: virtio-scsi-pci
    smbios1: uuid=204a6db6-26d7-4dc7-8839-4478c192179e
    sockets: 1
    vmgenid: 832d7332-4977-47ae-9b02-0cf5826ba5f3
    root@proxmox:~#

I don't know what is happening...

Regards,

Ramsés

-

You've selected the wrong OS type; "Other" should be used for pfSense.

Don't know if it matters, but I recommend using the host CPU type.

Also, you might be able to change the disk type to VirtIO by editing the config file in nano.
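A minimal sketch of how the first two changes might be applied from the Proxmox host shell, assuming VM ID 750 from the `qm config` output above. The `run` helper and the `DRY_RUN` switch are made up for illustration: with `DRY_RUN=1` the script only prints the commands so they can be reviewed before anything is changed.

```shell
#!/bin/sh
# Sketch only: VMID is taken from the `qm config 750` output earlier in the thread.
VMID=750
# Hypothetical safety switch: 1 = only print the commands, 0 = actually run them.
DRY_RUN=1

# Hypothetical helper: print the command in dry-run mode, otherwise execute it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "would run: $*"
  else
    "$@"
  fi
}

# Change the guest OS type from l26 (Linux) to "other", as suggested for pfSense.
run qm set "$VMID" --ostype other
# Use the host CPU type instead of kvm64.
run qm set "$VMID" --cpu host
```

As far as I know, the VM has to be fully stopped and started again (not just rebooted from inside the guest) before a new CPU type takes effect.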

-

I have several pfSense VMs running in Proxmox with various OS types set (now that I check!). All are running VirtIO SCSI without issue.

My PVE version is a little out of date though, 6.0-5.

Steve

-

@viragomann, I changed the VM's OS Type to the other Linux OS types, and the VM took just as long to boot.

@viragomann / @stephenw10, I installed this on one VM:

pfSense-CE-2.4.4-RELEASE-p3-amd64

And it took a long time to boot.

I have installed this on another VM:

pfSense-CE-2.4.5-RELEASE-amd64

And it takes much less time to boot.

I have also upgraded pfSense 2.4.4-p3 to 2.4.5 on the first VM, and it now takes much less time to boot.

The VM config:

    root@proxmox:/# qm config 750
    balloon: 0
    bootdisk: scsi0
    cores: 2
    cpu: kvm64
    ide2: local:iso/pfSense-CE-2.4.5-RELEASE-amd64.iso,media=cdrom,size=720684K
    memory: 4096
    net0: virtio=E6:9F:61:80:C9:6A,bridge=vmbr0
    numa: 0
    onboot: 1
    ostype: l26
    scsi0: local-lvm:vm-750-disk-1,iothread=1,size=15G
    scsihw: virtio-scsi-pci
    smbios1: uuid=204a6db6-26d7-4dc7-8839-4478c192179e
    sockets: 1
    tablet: 0
    vmgenid: 832d7332-4977-47ae-9b02-0cf5826ba5f3
    root@proxmox:/#

I think the problem is in pfSense version 2.4.4-p3, isn't it?

Another question:

UFS or ZFS for installing pfSense in the VM?

I have tried both, but what do you think?

Regards,

Ramsés

-

On a VM the usual benefits of ZFS are often not there. Snapshots and the fact that the host will probably stay up remove them.

Mine are all running UFS unless I'm testing ZFS specifically.

Steve

-

Hi again,

First of all, thank you both so much for your answers.

Now, I have some questions:

@stephenw10, would there be any performance problem if I set up the VM's disk with ZFS instead of UFS?

About how long should it take to boot a KVM VM with pfSense on PROXMOX?

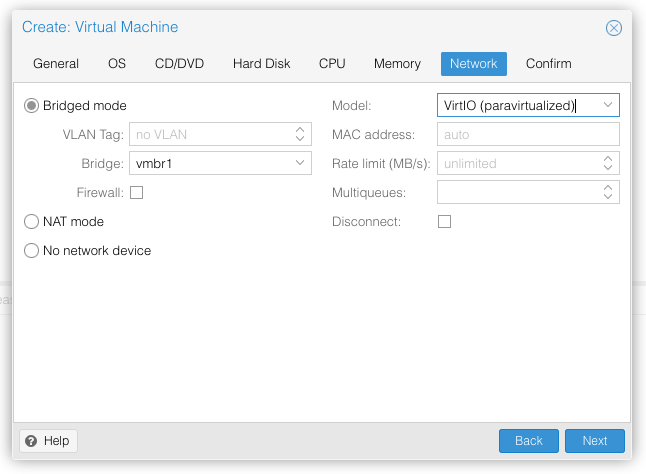

And I have found an inconsistency in the documentation:

- In this link (Virtualizing pfSense with Proxmox):

https://docs.netgate.com/pfsense/en/latest/virtualization/virtualizing-pfsense-with-proxmox.html

It says to configure the network interface model as VirtIO.

But in this other link (Configuring High Availability):

https://docs.netgate.com/pfsense/en/latest/highavailability/configuring-high-availability.html?highlight=high%20availability

It says to configure the network interface model as E1000.

Which network interface model should actually be configured?

Regards,

Ramsés

-

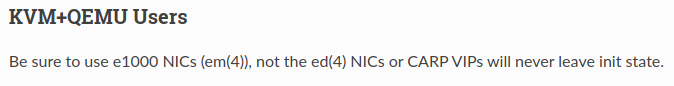

I'm using VirtIO NICs (vtnet) for everything and have never seen an issue.

The ed(4) driver is for ancient hardware. I don't think Proxmox supports that any longer. I imagine when that guide was written those may have been the only choices.

Steve

-

@viragomann, sorry, I had not seen the CPU recommendation:

Does the Host CPU type give better performance than the KVM64 CPU type in the VM?

Regards,

Ramsés

-

You will get the most benefit from the processor's features when using the host type. This passes all the features of the processor through to the VM, while KVM64 provides only a small set of common features. For instance, KVM64 doesn't make use of AES-NI, even if your host CPU supports it.

-

@viragomann said in Running pfSense 2.4.4 over a KVM VM in PROXMOX 6.1.5.:

You will get the most benefit from the processor's features when using the host type. This passes all the features of the processor through to the VM, while KVM64 provides only a small set of common features. For instance, KVM64 doesn't make use of AES-NI, even if your host CPU supports it.

With kvm64 you can set extra CPU flags though, including AES, all via the Proxmox GUI.
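The same setting can probably also be made from the host shell. A minimal sketch, assuming VM ID 750 from the configs earlier in the thread and that your PVE version accepts `aes` in the `flags=` list of the `--cpu` option. The `set_cpu` function and the `DRY_RUN` switch are made up for illustration, so the command is only printed until you choose to run it.

```shell
#!/bin/sh
# Sketch only: VMID is taken from the `qm config 750` output earlier in the thread.
VMID=750
# Hypothetical safety switch: 1 = only print the command, 0 = actually run it.
DRY_RUN=1
# kvm64 plus the AES flag (assumed to be supported by the installed PVE version).
CPU_OPTS="kvm64,flags=+aes"

# Hypothetical helper: print the command in dry-run mode, otherwise execute it.
set_cpu() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "would run: qm set $VMID --cpu $CPU_OPTS"
  else
    qm set "$VMID" --cpu "$CPU_OPTS"
  fi
}

set_cpu
```

If it works, `qm config 750` should then show a `cpu:` line carrying the extra flag, and the VM would need a full stop and start to pick it up.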