@michmoor said in clarification needed on direction of rules and usefulness:

@bmeeks Understood. In my mind, because the firewall is stateful, I was thinking that although the rules are evaluated from EXTERNAL to INTERNAL, there was some value in having the rule applied on the internal LAN, as the firewall would see the return traffic.

So are you saying it operates in a purely stateless way, with the rule evaluated from source to destination (external to internal) and that's it? Does there need to be a rule matching from HOME to EXTERNAL?

Lastly, from your standpoint, pfBlockerNG and some of the ET rule sets have IP blocking. I assume there is overlap. Does it matter if the Suricata rules are enabled for those IPs as well, assuming there is no resource constraint on the firewall?

I don't understand why you are bringing stateful connections and firewall rules into this. It seems to me you are confusing the IDS/IPS with the firewall. They are not the same at all! In fact, most commercial enterprises run the IDS/IPS on a completely separate box and not on the firewall.

The IDS/IPS (Suricata in your case) is totally ignorant of firewall states and even firewall rules. It simply evaluates packets as they flow across the physical interface Suricata is monitoring. It first compares the IP header info in the packet (protocol, source, destination) to decide whether a given Suricata rule is worth evaluating any further against that packet. Those are the Suricata rules, not the firewall rules; the firewall rules are totally irrelevant to Suricata.
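To make the direction point concrete, here is a rough Python sketch of how a rule header of the form `alert ip $EXTERNAL_NET any -> $HOME_NET any` lines up against a packet. This is only a toy model and not Suricata's actual code: the `HOME_NET` value, the `Packet` class and the `header_matches` function are all made up for illustration, and it deliberately ignores ports, protocol and any flow keywords in the rule options.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network

# Assumed LAN range for the example; on a real box this comes from your HOME_NET setting.
HOME_NET = ip_network("192.168.1.0/24")


@dataclass
class Packet:
    src: str
    dst: str


def in_home(addr: str) -> bool:
    return ip_address(addr) in HOME_NET


def header_matches(rule_src: str, rule_dst: str, pkt: Packet) -> bool:
    """Toy check of a rule header '<rule_src> any -> <rule_dst> any'.

    rule_src and rule_dst are the strings 'HOME' or 'EXTERNAL'
    (EXTERNAL is treated as "anything not in HOME_NET").
    """
    def side_ok(name: str, addr: str) -> bool:
        return in_home(addr) if name == "HOME" else not in_home(addr)

    # '->' is one-way: the packet source must match the left side
    # and the packet destination must match the right side.
    return side_ok(rule_src, pkt.src) and side_ok(rule_dst, pkt.dst)


inbound = Packet(src="203.0.113.7", dst="192.168.1.10")
reply = Packet(src="192.168.1.10", dst="203.0.113.7")

print(header_matches("EXTERNAL", "HOME", inbound))  # header lines up
print(header_matches("EXTERNAL", "HOME", reply))    # header does not line up
print(header_matches("HOME", "EXTERNAL", reply))    # needs a HOME -> EXTERNAL rule
```

Notice that nothing in the sketch consults a firewall state table. In this simplified view, the return packet is only matched if some rule header is written in that direction.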

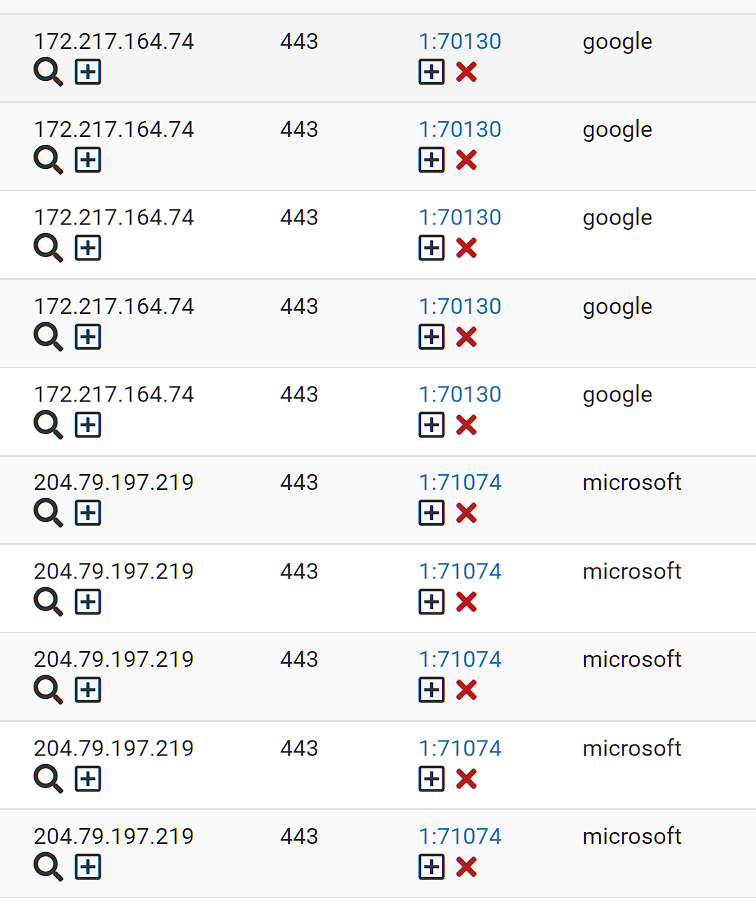

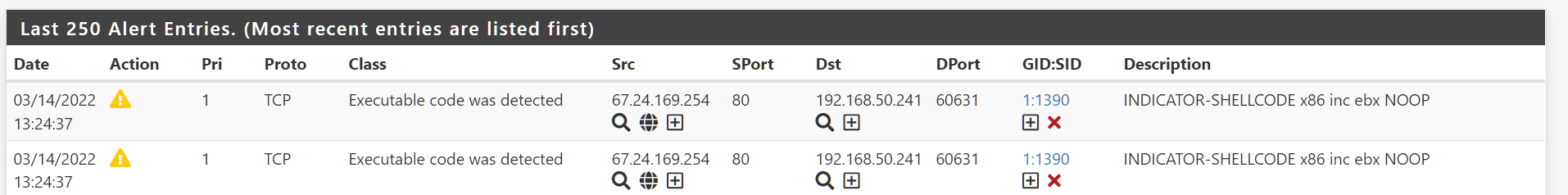

Suricata, especially when running in IPS mode, does not interact with the firewall engine at all. Not one bit. In fact, Suricata sits out in front of the firewall. Here are two diagrams showing that Suricata lives "outside", on the NIC side of the firewall, and thus sees traffic before the firewall rules have been applied.

[image: 1647271178377-ids-ips-network-flow-ips-mode.png]

[image: 1647271187125-ids-ips-network-flow-legacy-mode.png]

In terms of your pfBlocker question, if you use only simple IP lists in Suricata, then there could be close to 100% overlap between what it does and what pfBlocker does. But most folks would not do that. If you use pfBlocker, then you would more likely enable Suricata rules that do more than match simple IP lists. But as we discussed much earlier in this thread, encryption severely hinders the ability of Suricata or any IDS/IPS package to peer into the packet payload.
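If you want a feel for how much two IP lists overlap, something like the Python sketch below would do it. The two lists here are invented examples using documentation address ranges, not real pfBlocker feeds or ET rule contents, and `covered_by` is just a helper name I picked. It is IPv4-only as written, since `subnet_of` raises an error if you mix IPv4 and IPv6 entries.

```python
from ipaddress import ip_network


def covered_by(entries, blocklist):
    """Return the entries that fall entirely inside some network in blocklist."""
    nets = [ip_network(n) for n in blocklist]
    return [e for e in entries
            if any(ip_network(e).subnet_of(n) for n in nets)]


# Hypothetical data: addresses an IP-only Suricata rule set might list ...
suricata_ips = ["198.51.100.23", "203.0.113.0/25", "192.0.2.55"]
# ... and networks a pfBlocker IP feed might already block.
pfblocker_nets = ["198.51.100.0/24", "203.0.113.0/24"]

overlap = covered_by(suricata_ips, pfblocker_nets)
print(f"{len(overlap)} of {len(suricata_ips)} entries already covered: {overlap}")
```

Anything that shows up in `overlap` is an address pfBlocker would already be dropping, so an IP-only Suricata rule for it adds nothing beyond a second alert.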