@jimp On my installation, the default route is set to the cable modem's static gateway, so gateway groups are not a factor for me.

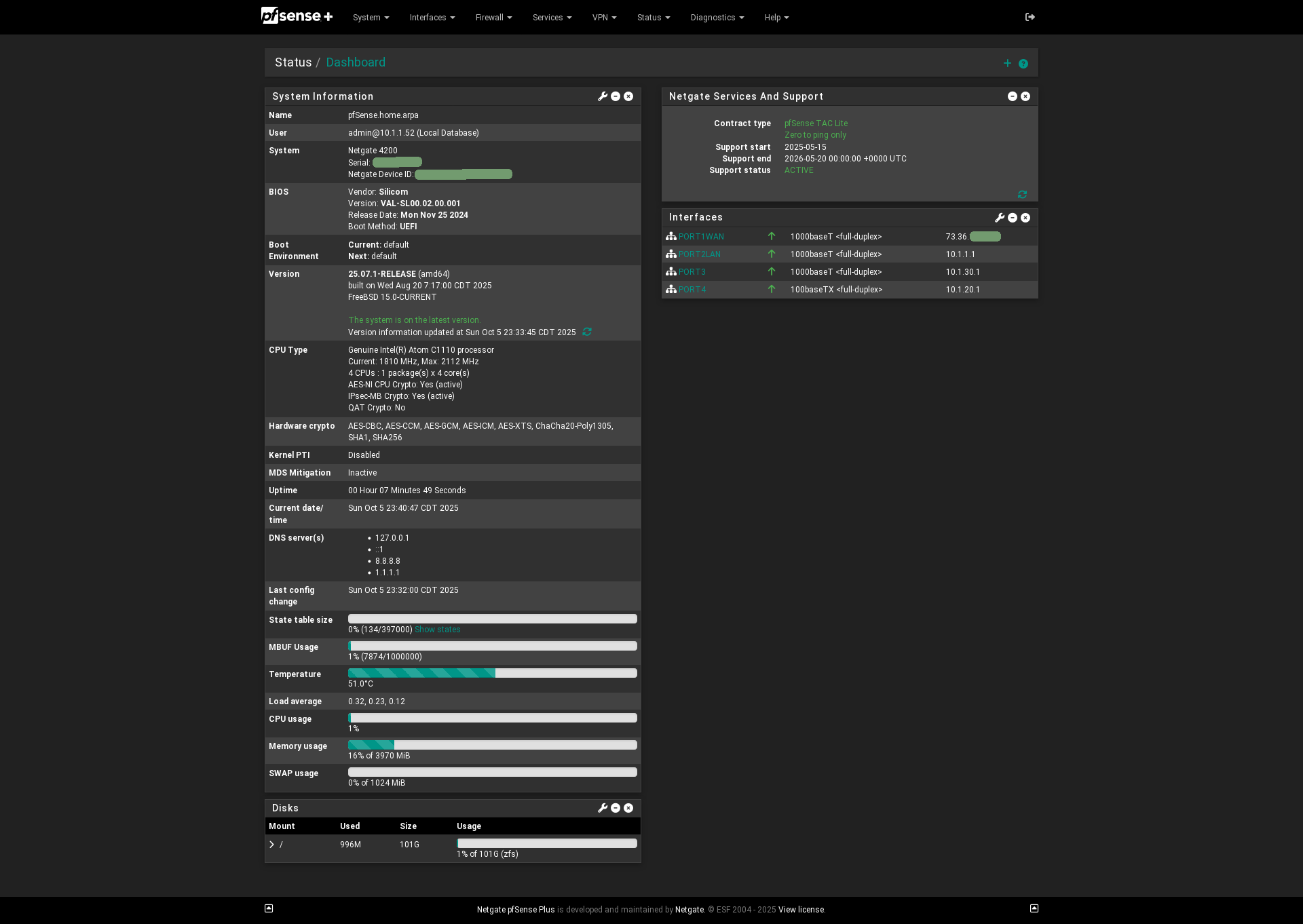

"link#6" is igb5, my OPT4 interface going to the DSL modem (it's from Windstream). The modem has an IP of 192.168.254.254/24; the pfSense box has 192.168.254.1, which we set statically.

"link#2" is igb1, which carries the static IP (/30) from Spectrum / Time Warner Business.

netstat -nr

Routing tables

Internet:

Destination Gateway Flags Netif Expire

default 70.60.x.y UGS igb1

1.0.0.1 192.168.254.254 UGHS igb5

1.1.1.1 70.60.x.y UGHS igb1

4.2.2.4 192.168.254.254 UGHS igb5

4.2.2.5 70.60.x.y UGHS igb1

8.8.4.4 192.168.254.254 UGHS igb5

8.8.8.8 70.60.x.y UGHS igb1

9.9.9.9 70.60.x.y UGHS igb1

10.17.0.0/24 link#1 U igb0

10.17.0.1 link#1 UHS lo0

10.254.254.0/24 10.254.254.2 UGS ovpns1

10.254.254.1 link#11 UHS lo0

10.254.254.2 link#11 UH ovpns1

70.60.x.w/30 link#2 U igb1

70.60.x.z link#2 UHS lo0

127.0.0.1 link#8 UH lo0

149.112.112.112 192.168.254.254 UGHS igb5

192.168.254.0/24 link#6 U igb5

192.168.254.1 link#6 UHS lo0

ping -c 4 192.168.254.254

PING 192.168.254.254 (192.168.254.254): 56 data bytes

64 bytes from 192.168.254.254: icmp_seq=0 ttl=64 time=1.170 ms

64 bytes from 192.168.254.254: icmp_seq=1 ttl=64 time=1.071 ms

64 bytes from 192.168.254.254: icmp_seq=2 ttl=64 time=1.084 ms

64 bytes from 192.168.254.254: icmp_seq=3 ttl=64 time=1.083 ms

--- 192.168.254.254 ping statistics ---

4 packets transmitted, 4 packets received, 0.0% packet loss

round-trip min/avg/max/stddev = 1.071/1.102/1.170/0.040 ms

arp -i igb5 -a

? (192.168.254.254) at 4c:17:eb:21:26:09 on igb5 expires in 1178 seconds [ethernet]

? (192.168.254.1) at 00:08:a2:09:5a:15 on igb5 permanent [ethernet]

traceroute -I -i igb5 checkip.dyndns.com

traceroute: Warning: checkip.dyndns.com has multiple addresses; using 216.146.43.71

traceroute to checkip.dyndns.com (216.146.43.71), 64 hops max, 48 byte packets

1 * * *

2 * * *

3 * * *

4 * * *

^C

ping -c 4 -S 192.168.254.1 checkip.dyndns.com

PING checkip.dyndns.com (216.146.43.71) from 192.168.254.1: 56 data bytes

--- checkip.dyndns.com ping statistics ---

4 packets transmitted, 0 packets received, 100.0% packet loss

[This seems like it might be part of the problem...]

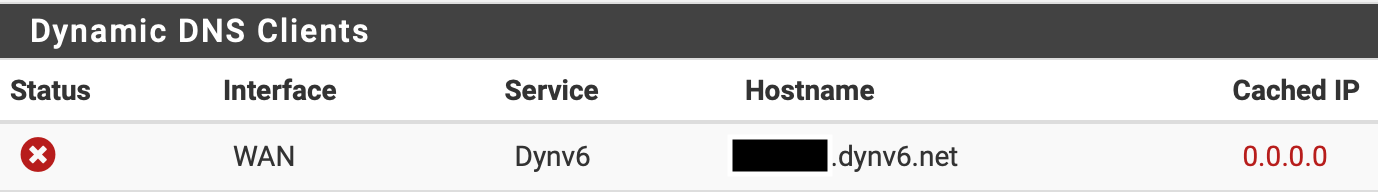

It seems like I can't get a traceroute (ICMP) through the crappy DSL modem. However, apinger is not complaining about the connection being down, and it is set to ping 4.2.2.4 from that interface (instead of the gateway IP). So maybe there's an apinger bug in play here, and my connection is actually down but not being reported as down?

It would be immensely helpful if BSD ping could be forced to send from a specific interface... I'm wondering if ping -S <int_ip> is sending the traffic out the wrong interface, which would make the failure above a red herring?
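One way to test the red-herring theory: per the FreeBSD ping man page, -S only sets the source address, which can differ from the address of the interface the packet actually leaves on, so the egress interface still comes from the routing table lookup on the destination. Below is a minimal sketch that reads `netstat -nr` style output and prints the interface a destination would use. The function name `egress_for` is made up for illustration, and it deliberately ignores network (CIDR) routes, checking only exact host entries and `default`.

```shell
#!/bin/sh
# egress_for DEST
# Hypothetical helper: reads `netstat -nr` style lines on stdin and prints
# the Netif (4th column) of an exact host route for DEST if one exists,
# otherwise the Netif of the default route.
# Simplification: network routes such as 10.17.0.0/24 are not matched.
egress_for() {
    awk -v dest="$1" '
        $1 == dest      { host = $4 }   # exact host route wins
        $1 == "default" { def = $4 }    # remember the fallback
        END {
            if (host != "")     print host
            else if (def != "") print def
            else                exit 1  # no usable route found
        }
    '
}
```

Piping `netstat -nr -f inet` into `egress_for 216.146.43.71` against the table above would print igb1 (no host route, so the default applies), while `egress_for 4.2.2.4` would print igb5. That host route may be why the gateway monitor looks healthy even though other traffic sourced from 192.168.254.1 fails. On the box itself, `route -n get <ip>` gives the kernel's own answer.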

So let's play some more: add a static route to 216.146.43.71 (one of the IPs for checkip.dyndns.com) to force it through the DSL gateway:

route add -host 216.146.43.71 192.168.254.254

add host 216.146.43.71: gateway 192.168.254.254

ping 216.146.43.71

PING 216.146.43.71 (216.146.43.71): 56 data bytes

64 bytes from 216.146.43.71: icmp_seq=0 ttl=49 time=149.241 ms

64 bytes from 216.146.43.71: icmp_seq=1 ttl=49 time=150.749 ms

64 bytes from 216.146.43.71: icmp_seq=2 ttl=49 time=151.432 ms

^C

--- 216.146.43.71 ping statistics ---

3 packets transmitted, 3 packets received, 0.0% packet loss

traceroute -I 216.146.43.71

traceroute to 216.146.43.71 (216.146.43.71), 64 hops max, 48 byte packets

1 192.168.254.254 (192.168.254.254) 1.165 ms 0.912 ms 0.930 ms

2 h3.176.142.40.ip.windstream.net (40.142.176.3) 20.753 ms 20.375 ms 19.908 ms

3 ae2-0.agr03.hdsn01-oh.us.windstream.net (40.136.113.108) 21.559 ms 22.031 ms 21.936 ms

4 et9-0-0-0.cr01.cley01-oh.us.windstream.net (40.136.97.135) 24.981 ms 20.902 ms 24.131 ms

5 et11-0-0-0.cr01.chcg01-il.us.windstream.net (40.128.248.71) 27.746 ms 30.224 ms 30.609 ms

6 chi-b21-link.telia.net (80.239.194.41) 30.788 ms 32.021 ms 28.424 ms

7 nyk-bb3-link.telia.net (80.91.246.163) 147.362 ms 149.769 ms 147.627 ms

8 ldn-bb3-link.telia.net (62.115.135.95) 144.829 ms 144.190 ms 144.030 ms

9 hbg-bb1-link.telia.net (80.91.249.11) 140.047 ms 132.839 ms 130.322 ms

10 war-b1-link.telia.net (62.115.135.187) 145.479 ms 146.117 ms 145.660 ms

11 dnsnet-ic-320436-war-b1.c.telia.net (213.248.68.135) 151.281 ms 147.401 ms 151.799 ms

12 checkip.dyndns.com (216.146.43.71) 150.409 ms 152.881 ms 151.245 ms

curl -v --interface igb5 http://216.146.43.71

* Rebuilt URL to: http://216.146.43.71/

* Trying 216.146.43.71...

* TCP_NODELAY set

* Local Interface igb5 is ip 192.168.254.1 using address family 2

* Local port: 0

* Connected to 216.146.43.71 (216.146.43.71) port 80 (#0)

> GET / HTTP/1.1

> Host: 216.146.43.71

> User-Agent: curl/7.61.1

> Accept: */*

>

< HTTP/1.1 200 OK

< Content-Type: text/html

< Server: DynDNS-CheckIP/1.0.1

< Connection: close

< Cache-Control: no-cache

< Pragma: no-cache

< Content-Length: 104

<

<html><head><title>Current IP Check</title></head><body>Current IP Address: 75.90.aaa.bbb</body></html>

* Closing connection 0

[!! WORKS !!]
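Note that the host route added earlier is still in place for the curl test, so it cannot show what `--interface` does on its own. A small wrapper that adds the route, runs one command, and removes the route again keeps the "with route" and "without route" cases separate. This is only a sketch: `with_host_route` and the `DRY_RUN` variable are names invented here, and the real route commands need root.

```shell
#!/bin/sh
# with_host_route HOST GATEWAY CMD...
# Hypothetical helper: add a temporary host route, run CMD, then delete
# the route again and return CMD's exit status.
# With DRY_RUN=1 every command is printed instead of executed.
with_host_route() {
    host=$1
    gw=$2
    shift 2
    run=${DRY_RUN:+echo}            # "echo" in dry-run mode, empty otherwise

    $run route add -host "$host" "$gw" || return 1
    $run "$@"                       # the test command (ping, curl, ...)
    status=$?
    $run route delete -host "$host" "$gw"
    return "$status"
}
```

For example, `with_host_route 216.146.43.71 192.168.254.254 ping -c 3 216.146.43.71` would repeat the successful ping above, and running the same curl command afterwards, once the route is gone, shows whether `--interface igb5` alone gets the traffic out the DSL link.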

Something really weird is going on here. For whatever reason, the traffic does not egress through the specified interface when using curl --interface; it only goes the way we want if I manually add a static route. I'm not sure exactly how the PHP code reaches checkip.dyndns.com via a given interface, but some behavior has changed that makes this fail. (My guess at this point is an underlying OS issue, but I suppose it could also be something in curl, if PHP is just calling that.)